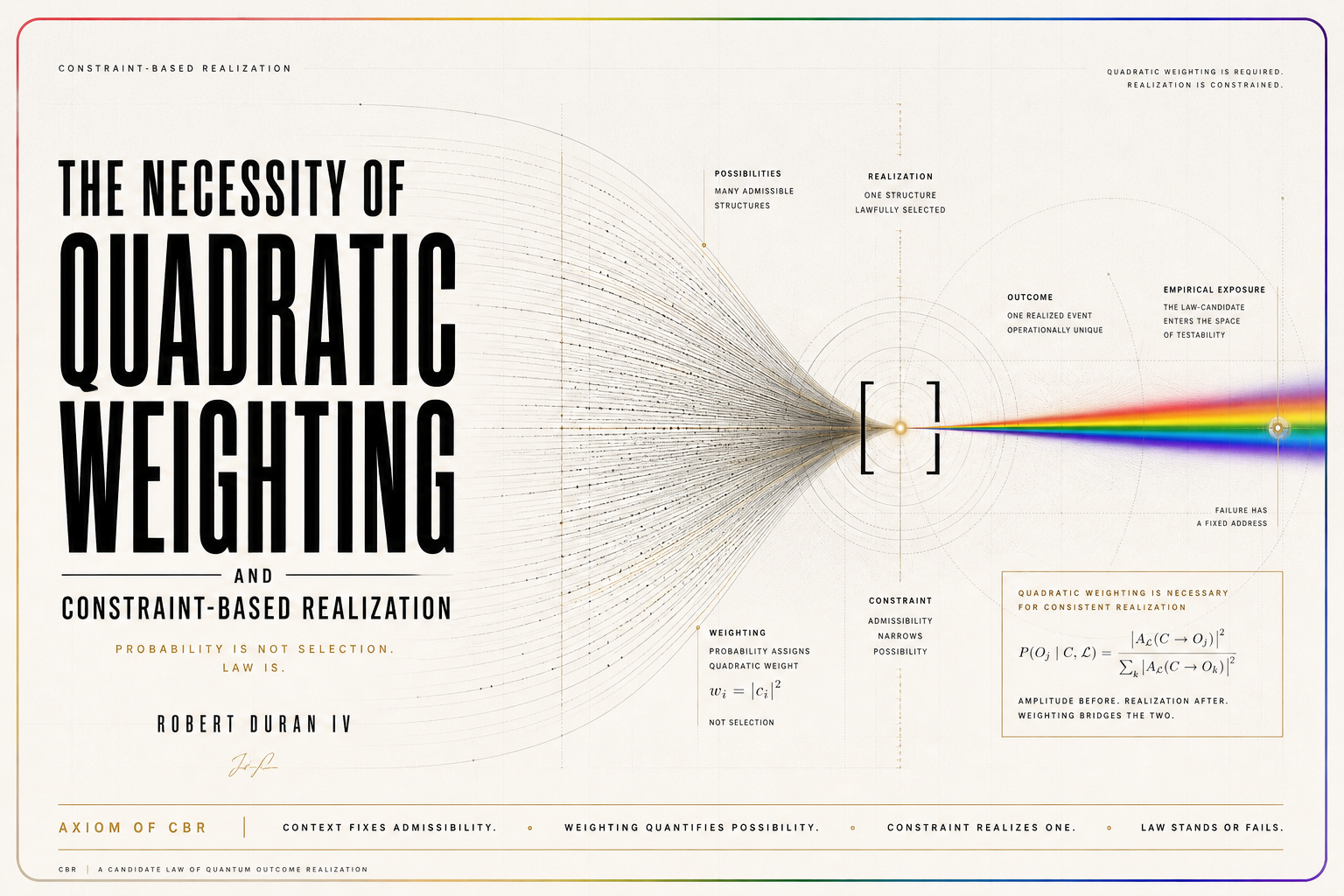

The Necessity of Quadratic Weighting and Constraint-Based Realization

Abstract

This paper addresses the remaining probability burden in Constraint-Based Realization by asking whether quadratic modulus weighting is merely sufficient within canonical CBR or instead necessary for any realization-weighting framework that aims to preserve stable physical probability. The paper’s central claim is that, within an operationally acceptable theorem class, quadratic weighting is not optional: it is forced.

To make that claim precise, the paper defines a class of realization-weighting frameworks over admissible decompositions ψ = ∑ᵢ αᵢeᵢ, with probabilities of the form Pᵢ = W(αᵢ)/∑ⱼ W(αⱼ). It then identifies the structural conditions required for physically meaningful realization probability, including phase insensitivity, admissible refinement consistency, coarse-graining consistency, symmetry, operational invariance, normalization, nontriviality, regularity, and non-circular admissibility. These are treated not as optional design choices, but as the minimal constraints any viable realization-weighting rule must satisfy.

Within that admissible class, the paper proves a Quadratic Necessity Theorem: the weighting rule must reduce to dependence on amplitude modulus, must be additive on squared modulus under admissible refinement, and, given regularity and normalization, must collapse to the unique normalized form W(α) = |α|². On this account, quadratic weighting is not merely recovered inside canonical CBR; it is the unique weighting form available to any realization-weighting framework that preserves the stated operational constraints.

The claim is deliberately narrow. The paper does not assert unrestricted universality across all conceivable mathematical or interpretive frameworks, nor does it claim empirical confirmation of CBR. Its stronger point is conditional: any nonquadratic alternative survives only by rejecting one or more of the structural conditions required for stable physical probability, and therefore assumes the burden of explaining how probability retains physical meaning after that loss.

If successful, the result materially strengthens the CBR program. It shifts quadratic weighting from a local internal feature of canonical CBR to a necessity result for the full operationally acceptable realization-weighting class.

1. Introduction

1.1 The remaining probability burden

The Core Theorem Paper established local probability closure inside canonical Constraint-Based Realization. Within the canonical admissibility structure developed there, normalized nonquadratic weighting does not survive once admissible refinement, operational invariance, symmetry, normalization, nontriviality, and regularity are imposed. That result was already significant because it removed the probability rule from the category of optional internal design choices. Inside canonical CBR, the weighting structure is not left free.

That result, however, does not by itself settle the stronger question. A local closure theorem establishes that quadratic weighting follows within a given canonical admissibility class. It does not yet show that the structural assumptions generating that result are necessary for any realization-weighting framework that claims stable physical probability meaning. A critic may therefore grant the internal CBR derivation while still asking whether the assumptions used there are merely sufficient, rather than required by the very idea of operationally meaningful realization probability.

The present paper addresses exactly that remaining burden. It asks whether the conditions that force quadratic modulus weighting are simply a disciplined package internal to canonical CBR, or whether they are in fact necessary for any realization-weighting framework that purports to assign stable probabilities to admissible realization alternatives. The question is therefore not whether a nonquadratic formula can be written down. Many such formulas can. The question is whether any such formula can survive as a physically acceptable probability rule once the elementary structural demands of realization weighting are made explicit.

The target can be stated sharply:

Are the conditions that force quadratic weighting merely sufficient, or are they necessary for any realization-weighting framework that claims physical probability meaning?

The answer developed here is conditional but strong. It is conditional because the paper does not claim unrestricted universality across every conceivable mathematical or interpretive scheme. It is strong because, within the admissible theorem class defined below, the probability rule is no longer merely recovered locally; it is forced by the requirements of operational probability acceptability.

1.2 Central thesis

The central thesis of the paper is:

Any operationally acceptable realization-weighting framework must either reduce to quadratic modulus weighting or abandon one of the structural conditions required for stable physical probability.

This thesis should be read with care. It is not the claim that quadratic weighting is aesthetically preferable, historically conventional, or merely compatible with canonical CBR. It is the claim that once a framework attempts to assign realization probabilities to admissible branch decompositions in a physically stable way, its room to deviate from quadratic weighting collapses sharply.

The argument does not proceed by direct appeal to the Born rule as an unexplained target. It proceeds by isolating the conditions without which realization weighting ceases to function as probability at all. If a rule depends on physically irrelevant phase, if it changes total probability under admissible refinement, if it changes under coarse-graining, if it depends on branch labels, if it varies across operationally equivalent decompositions, if it fails normalization, if it ignores amplitude structure, if it permits pathological additive behavior, or if it derives its conclusion only by building that conclusion into admissibility, then it fails to satisfy the minimum structural requirements of operational probability acceptability.

The paper therefore treats quadratic weighting not as a preferred internal rule of canonical CBR, but as the unique normalized weighting form available within the operationally acceptable realization-weighting class.

1.3 The two theorem centers

The paper has two major theorem centers, and the distinction between them is essential.

The first is the Probability Acceptability Theorem. Its role is to establish that the structural assumptions often treated as premises in weighting derivations are not merely optional conveniences. They are forced by the requirement that a realization-weighting framework assign stable operational probability. More specifically, the theorem shows that any such framework must satisfy, or possess structural equivalents of, phase insensitivity, admissible refinement consistency, coarse-graining consistency, symmetry, operational invariance, normalization, nontriviality, regularity, and non-circular admissibility.

The second is the Quadratic Necessity Theorem. Once the acceptable theorem class has been fixed, the weighting rule reduces to dependence on amplitude modulus, induces additivity on squared modulus, and, under regularity and normalization, collapses to the unique form

W(α) = |α|².

These two theorem centers correspond to two different burdens. The first burden is structural: what conditions must hold before a weighting rule can count as stable physical probability? The second burden is mathematical: once those conditions hold, what form can the weighting rule take? A large part of the paper’s force comes from refusing to conflate these two burdens.

In the Core Theorem Paper, the local probability closure result discharged the second burden inside canonical CBR. The present paper strengthens the program by addressing the first burden directly. It asks whether the assumptions underwriting the local closure result are not merely sufficient inside canonical CBR, but necessary for the broader class of operationally acceptable realization-weighting frameworks.

1.4 Formal definition of the admissible theorem class

The main results of this paper apply to a definite theorem class, which should be stated at the outset.

Definition 1.1 — Admissible decomposition.

An admissible decomposition is a representation of the form

ψ = ∑ᵢ αᵢeᵢ

in which the components eᵢ correspond to physically meaningful outcome-defining alternatives for the realization-weighting problem under consideration, and in which admissible refinement, admissible coarse-graining, and operational equivalence can be defined.

Definition 1.2 — Realization-weighting rule.

A realization-weighting rule is a map

W : ℂ → ℝ≥0, α ↦ W(α)

that assigns a nonnegative branch weight to each admissible amplitude contribution, with normalized probabilities given by

Pᵢ = W(αᵢ)∕∑ⱼW(αⱼ)

whenever the admissible decomposition is under evaluation as a probability-bearing realization structure.

Definition 1.3 — Operational equivalence.

Two admissible decompositions are operationally equivalent if no admissible realization-relevant physical test distinguishes them at the level of probability assignment or realization consequence.

Definition 1.4 — Operationally acceptable realization-weighting framework.

A realization-weighting framework is operationally acceptable if its probability assignments are invariant under physically irrelevant phase changes, stable under admissible refinement, stable under admissible coarse-graining, symmetric under labels lacking operational content, invariant under operationally equivalent decompositions, normalized, nontrivially sensitive to amplitude structure, regular enough to exclude pathological additive constructions, and non-circular in its admissibility definitions.

Definition 1.5 — Admissible theorem class of the paper.

The admissible theorem class consists of realization-weighting frameworks that assign probabilities to admissible decompositions and satisfy, or claim to satisfy, operational probability acceptability in the sense of Definition 1.4.

This definition block is not ornamental. It prevents a common misunderstanding. The paper is not trying to prove that every imaginable mathematical rule on amplitudes is impossible unless it is quadratic. It is proving something narrower and stronger: within the theorem class of operationally acceptable realization-weighting frameworks, quadratic weighting is structurally forced.

The definition block also makes clear that the argument is not automatically directed at every interpretation of quantum mechanics. A framework that denies the relevance of single-outcome realization weighting may fall outside the theorem class. Such a framework is not refuted by this paper. It is simply not the target of the theorem.

1.5 What this paper does not claim

The strength of the present paper depends in part on what it refuses to claim.

First, it does not claim that every imaginable mathematical weighting scheme is impossible. Nonquadratic functions can easily be written down. The paper’s claim is not that such functions cannot exist, but that they cannot function as operationally acceptable realization-probability rules without sacrificing at least one structural condition required for stable physical probability.

Second, the paper does not claim that every interpretation of quantum mechanics is refuted. In particular, it does not force Everettian frameworks to accept single-outcome realization. A framework may reject the target problem itself and thereby leave the theorem class rather than contradict the theorem.

Third, the paper does not claim empirical validation of CBR. It is a probability-structure paper, not an experiment paper. Empirical vulnerability belongs to the protocol-level work developed separately.

Fourth, the paper does not claim that its result is identical to Gleason-style, envariance-style, or Everettian decision-theoretic derivations of the Born rule. Those derivations operate over different domains, under different assumptions, and for different conceptual targets. The present theorem concerns realization-weighting frameworks over admissible outcome-branch decompositions.

Fifth, the paper does not claim unrestricted universality. It is best understood as a near-global structural theorem for the operationally acceptable realization-weighting class, not as an unrestricted theorem over every conceivable interpretive or mathematical framework.

These non-claims do not weaken the paper. They keep it within the bounds of what the proof can actually support.

1.6 Proof strategy

The proof strategy is direct and staged.

First, the paper defines realization-weighting frameworks and the admissible theorem class in a form explicit enough to support theorem-level claims rather than rhetorical plausibility.

Second, it develops the notion of operational probability acceptability and treats it as the primary structural burden. This is the level at which the paper argues that phase insensitivity, refinement consistency, coarse-graining consistency, symmetry, operational invariance, normalization, nontriviality, regularity, and non-circularity are not merely convenient assumptions, but requirements of physically stable realization probability.

Third, it proves these requirements one by one as necessity lemmas. Each lemma identifies the pathology created by rejecting the relevant condition.

Fourth, it shows that the acceptable theorem class induces additive measure structure on squared modulus. This is the point at which the weighting rule ceases to be an arbitrary branch assignment and becomes a constrained measure-like object.

Fifth, it proves the Probability Acceptability Theorem and then the Quadratic Necessity Theorem. The first theorem fixes the structural class. The second theorem fixes the weighting form.

Sixth, it classifies nonquadratic escape routes. This is not an appendix-like afterthought. It is a central consequence of the theorem. A nonquadratic rule can survive only by rejecting one of the structural conditions required for operational probability acceptability, and it must then explain why doing so does not destroy stable physical probability.

This proof order matters. It makes clear that the paper is not simply another Born-rule derivation in which a desired answer is reached from a convenient list of assumptions. It is a theorem about what realization weighting must look like if it is to function as operational probability in the first place.

1.7 Why this matters

If successful, the present paper changes the status of the probability side of CBR.

Without this paper, the Core Theorem Paper shows that quadratic weighting is locally forced inside canonical CBR. That is already important. But a critic can still ask whether the assumptions behind that local closure are only internal to the CBR architecture.

With this paper, the burden shifts. The conclusion becomes:

Quadratic weighting is not merely recovered inside canonical CBR. It is forced for any realization-weighting framework in the operationally acceptable theorem class.

That shift matters because probability is one of the central vulnerabilities of any proposed completion or realization-law framework. A theory that specifies a law of realization but leaves its weighting rule arbitrary has not completed its own burden. A theory that shows why the weighting rule is structurally forced has done much more.

The result also matters for alternatives. A nonquadratic proposal can still be made, but after this paper it must become explicit about its cost. It must say which condition of operational probability acceptability it rejects and why rejecting that condition does not destroy the physical meaning of probability.

That is the paper’s core contribution. It does not settle all of quantum foundations. It does not claim final unrestricted universality. It does, however, make the probability side of CBR substantially more difficult to dismiss as internal stipulation.

2. From Local Closure to Necessity

2.1 Local probability closure in canonical CBR

The Core Theorem Paper established a local probability-closure result within canonical CBR. The result was local because it was proved inside the canonical admissibility structure of the theory. It assumed a law-selection framework in which realization is defined over an admissible class and constrained by operational equivalence, refinement stability, coarse-graining consistency, and admissibility discipline.

Within that structure, the weighting rule is not freely chosen. The relevant assumptions are as follows. First, admissible refinement must preserve the total weight of a physical alternative under finer description. Second, operationally equivalent decompositions must induce the same normalized weighting. Third, symmetric branches with no relevant physical distinction must receive equal treatment. Fourth, the total probability assignment must normalize. Fifth, the rule must be nontrivial, meaning that amplitude structure cannot be erased into branch-count uniformity. Sixth, the weighting function must satisfy enough regularity to exclude pathological additive constructions.

Under those conditions, no normalized nonquadratic weighting survives. The local result therefore closes a major internal gap in canonical CBR: probability is not an arbitrary addition to the law form, but a consequence of the admissibility structure.

Nevertheless, the result remains local in an important sense. It establishes closure inside the canonical CBR theorem class. It does not yet show that the assumptions behind the closure are necessary for every operationally meaningful realization-weighting framework. That is the task of the present paper.

2.2 Generalized weighting uniqueness

The Core Theorem Paper’s appendix-level generalization pushed the result beyond the strict canonical CBR setting. It considered a generalized admissible weighting framework in which branch amplitudes are assigned nonnegative weights, refinement and coarse-graining are available, operational equivalence can be assessed, and normalized total weight is defined.

Under the generalized assumptions B1–B9, the only normalized admissible weighting rule is:

W(α) = |α|².

Those assumptions include phase insensitivity, refinement consistency, coarse-graining consistency, permutation symmetry, operational invariance, normalization, nontriviality, regularity, and non-circular admissibility. The generalized result is already stronger than a canon-specific derivation, because it shows that quadratic weighting follows across a broader structural class.

However, even this generalized uniqueness theorem remains formally conditional. It states that if a framework satisfies the listed assumptions, then quadratic weighting follows. It does not yet prove that a physically acceptable realization-weighting framework must satisfy them.

The difference between “if accepted, then quadratic” and “must be accepted for physical probability” is the difference between sufficiency and necessity. The present paper is written to close that gap as far as the realization-weighting framework permits.

2.3 The remaining gap

A sufficiency theorem has the following form:

If the assumptions hold, quadratic weighting follows.

Such a theorem is valuable, but it leaves open a natural objection. A nonquadratic theorist may reject one or more assumptions and claim that the quadratic conclusion has been avoided. The question then becomes whether the rejected assumption was optional, or whether rejecting it destroys the rule’s claim to physical probability meaning.

The present paper therefore seeks a necessity result of the following form:

If a weighting rule claims stable physical probability meaning, then the assumptions that force quadratic weighting, or their structural equivalents, must hold.

The phrase “or their structural equivalents” is important. The theorem does not require every framework to use the same vocabulary as CBR. A framework may express refinement, invariance, or regularity in different formal language. What matters is whether it preserves the same physical function: probability must not change under operationally irrelevant phase, relabeling, refinement, aggregation, or equivalent redescription.

Thus the remaining gap is not merely technical. It is conceptual and structural. The paper must show that the assumptions leading to quadratic weighting are not arbitrary restrictions chosen to force a desired answer, but conditions required for any realization-weighting rule to count as operational probability.

2.4 Proof strategy

The proof strategy proceeds in six stages.

First, the paper defines realization-weighting frameworks. These are frameworks that assign normalized probabilities to admissible outcome-branch decompositions intended to represent realization likelihood.

Second, the paper defines operational probability acceptability. A weighting rule is acceptable only if its assignments are invariant under physically irrelevant representation changes, stable under admissible refinement and coarse-graining, symmetric under labels with no operational content, normalized, nontrivially sensitive to amplitude structure, regular, and non-circular.

Third, the paper proves each acceptability condition as a necessity lemma. Each lemma has the same form: state the requirement, prove that rejecting it generates a specific pathology, identify the failure mode, and state the dependency of the result.

Fourth, the paper derives additive measure representation. Phase insensitivity reduces W(α) to a function of |α|. Refinement and coarse-graining consistency then produce additivity on squared modulus.

Fifth, the paper proves quadratic necessity. Regularity forces the additive function to be linear on squared modulus, and normalization fixes the constant. The result is W(α) = |α|².

Sixth, the paper classifies nonquadratic escape routes. A nonquadratic alternative may reject one of the structural requirements, but it must then accept the corresponding physical cost: phase-label dependence, branch-splitting arbitrariness, aggregation instability, label sensitivity, representation dependence, non-normalization, amplitude blindness, pathology, or circularity.

The structure is intentionally proof-disciplined. The paper does not rely on the rhetorical claim that the assumptions are “reasonable.” It argues that without them, the weighting rule ceases to function as stable operational probability.
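
Stages four and five can be written out as a short chain of implications. The following is a sketch under stated assumptions, not the paper's full proof: the symbols f, x, y, c, β₁, and β₂ are introduced here for illustration; admissible refinement is assumed to split a branch of amplitude α into subbranches whose squared moduli sum to |α|²; and the normalization convention is assumed to take the form W(1) = 1.

```latex
% Sketch only: f, x, y, c, beta_1, beta_2 are notation introduced here.
% Assumes amsmath. Refinement is assumed to preserve total squared modulus.
\begin{align*}
W(e^{i\theta}\alpha) &= W(\alpha) \quad \forall\,\theta
  &&\Rightarrow\quad W(\alpha) = f\!\left(|\alpha|^{2}\right),
  \quad f : [0,\infty) \to [0,\infty), \\
|\alpha|^{2} &= |\beta_{1}|^{2} + |\beta_{2}|^{2}
  &&\Rightarrow\quad W(\alpha) = W(\beta_{1}) + W(\beta_{2}),
  \quad\text{i.e. } f(x+y) = f(x) + f(y), \\
f \text{ additive and regular} &
  &&\Rightarrow\quad f(x) = c\,x, \quad c \ge 0, \\
c > 0,\ W(1) &= 1
  &&\Rightarrow\quad c = 1, \quad W(\alpha) = |\alpha|^{2}.
\end{align*}
```

The third line is the standard Cauchy functional equation step: an additive function on the nonnegative reals that is continuous or measurable is linear. Nontriviality is what rules out c = 0. The ratio Pᵢ = W(αᵢ)/∑ⱼ W(αⱼ) is the same for every c > 0, so on this reading the normalization step fixes only the overall scale of W.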

3. Realization-Weighting Frameworks

3.1 Admissible branch decompositions

Let an admissible branch decomposition be written:

ψ = ∑ᵢ αᵢeᵢ.

Here eᵢ denotes an outcome-defining component of the decomposition, and αᵢ denotes the complex amplitude associated with that component. The notation is intentionally minimal. The paper does not require that every formal decomposition of ψ be admissible. It requires only that the decomposition under consideration belong to the class of physically meaningful outcome-branch decompositions relevant to realization-weighting.

The branch components eᵢ should therefore be understood as physically interpretable alternatives within the stated context, not merely arbitrary basis labels. A decomposition is admissible only when the alternatives support the probability-assignment task at issue. In the CBR setting, the alternatives are not merely mathematical branches; they are candidate realization alternatives or outcome-defining components relevant to a realization law.

The weighting rule assigns to each amplitude αᵢ a branch weight W(αᵢ). The present paper asks what form W can take if the resulting weights are to function as stable physical probabilities.

3.2 Weighting rule

A realization-weighting rule is a map:

W : ℂ → ℝ≥0, α ↦ W(α).

The nonnegativity condition is required because W is intended to contribute to probability assignment. Negative probability weights would require a different interpretive structure and are not part of the theorem class considered here.

For an admissible decomposition ψ = ∑ᵢ αᵢeᵢ, normalized probabilities are written:

Pᵢ = W(αᵢ)∕∑ⱼW(αⱼ).

Whenever the total weight ∑ⱼW(αⱼ) is nonzero, these probabilities sum to one by construction:

∑ᵢPᵢ = 1.

If W is already normalized over the admissible branch set, so that ∑ⱼW(αⱼ) = 1, the expression simplifies to Pᵢ = W(αᵢ).

The purpose of this definition is not to assume the Born rule. At this stage W is arbitrary except for nonnegativity and the claim that it is to be used for probability assignment. The paper will ask which additional constraints are forced by operational probability acceptability.
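
The normalization formula above can be made concrete with a minimal sketch. The power-law family W_p(α) = |α|^p, the function names, and the sample amplitudes are assumptions chosen for illustration; they are not part of the paper's formal apparatus.

```python
def power_weight(p):
    """Hypothetical candidate family W_p(alpha) = |alpha|**p (p = 2 is quadratic)."""
    def weight(alpha):
        return abs(alpha) ** p
    return weight


def normalized_probabilities(amplitudes, weight):
    """Return P_i = W(alpha_i) / sum_j W(alpha_j) for one decomposition."""
    weights = [weight(a) for a in amplitudes]
    if any(w < 0 for w in weights):
        raise ValueError("branch weights must be nonnegative")
    total = sum(weights)
    if total == 0:
        raise ValueError("total weight is zero; probabilities are undefined")
    return [w / total for w in weights]


# Illustrative two-branch decomposition psi = 0.6 e_1 + 0.8i e_2.
amplitudes = [0.6, 0.8j]
quadratic = normalized_probabilities(amplitudes, power_weight(2))
linear = normalized_probabilities(amplitudes, power_weight(1))
print(quadratic)
print(linear)
```

Both rules return a normalized list, which illustrates the point made above: normalization alone does not select the quadratic rule. With these amplitudes the quadratic rule gives roughly 0.36 and 0.64, while the linear-modulus rule gives roughly 0.43 and 0.57.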

3.3 Admissible decompositions

A decomposition is admissible only if it satisfies five conditions.

First, its branches must correspond to physically meaningful outcome alternatives. A merely formal expansion in an arbitrary basis is not automatically realization-relevant.

Second, refinements must be well-defined. If a branch is subdivided into admissible subbranches representing a finer description of the same physical alternative, the framework must be able to state how weights behave under that subdivision.

Third, coarse-grainings must be well-defined. If subbranches are aggregated into a parent branch representing a lower-resolution alternative, the framework must be able to state how total weight behaves under that aggregation.

Fourth, operational equivalence must be assessable. If two decompositions differ only by representation and cannot be distinguished by any admissible test relevant to realization probability, the framework must identify them as equivalent at the probability-assignment level.

Fifth, probability assignment must not be merely representational. A weighting rule that changes its probabilities under changes in notation, labeling, or arbitrary branch resolution is not assigning physical probabilities. It is assigning description-dependent scores.

These admissibility requirements are intentionally modest. They do not determine the Born rule by themselves. They define the class of decompositions over which the weighting problem is physically meaningful.

3.4 Operational equivalence

Two decompositions are operationally equivalent if no admissible physical test distinguishes them at the level of probability assignment or realization-relevant consequence.

This definition should be read carefully. Operational equivalence does not require syntactic identity. Two decompositions may be written differently, use different labels, or represent alternatives at different descriptive resolutions while remaining equivalent for the probability question at hand. Conversely, two decompositions that look formally similar may be operationally inequivalent if they differ in a way that changes realization-relevant predictions.

Operational equivalence is therefore the relation that prevents the weighting rule from responding to mere notation. It is also the relation that allows the paper to state necessity results without requiring exact identity of formal representations.

The theorem class assumes that physical probability must be invariant under operational equivalence. If a framework rejects that assumption, it leaves the class of operationally acceptable realization-weighting rules and must explain how representation-dependent probabilities retain physical meaning.

3.5 Realization-weighting framework

A realization-weighting framework is a rule assigning normalized probabilities to admissible branch decompositions intended to represent realization likelihood.

This definition places the present paper in a specific domain. It is not addressing every possible interpretation of quantum mechanics. It is addressing frameworks that assign probability weights to outcome-defining alternatives in connection with physical realization. In particular, the paper is not directed at frameworks that deny the need for single-outcome realization weighting altogether. Such frameworks may be important, but they do not fall inside the theorem class.

Within the theorem class, a weighting framework must answer a specific question:

Given an admissible outcome-branch decomposition ψ = ∑ᵢ αᵢeᵢ, what probability weight should be assigned to each branch?

The paper argues that any answer preserving operational probability acceptability must reduce to W(α) = |α|².

3.6 Proof discipline requirement

Every necessity claim in this paper follows the same structure.

First, the requirement is stated. Second, a necessity lemma is formulated. Third, a proof is given or, where appropriate, the strongest proof sketch consistent with the stated assumptions is supplied. Fourth, the failure mode produced by rejecting the requirement is identified. Fifth, a dependency note records what the result depends on.

This structure is deliberate. The paper does not treat “physical reasonableness” as an unexplained black box. It identifies the specific operational pathology that appears when a requirement is removed.

For example, refinement consistency is not defended merely because it is elegant. It is defended because rejecting it allows probability to be changed by branch subdivision. Operational invariance is not defended merely because it is standard. It is defended because rejecting it allows equivalent descriptions to produce different probabilities.
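
The refinement pathology can be shown numerically. The sketch below assumes a power-law family W_p(α) = |α|^p and assumes that an admissible refinement splits one branch into two equal subbranches whose squared moduli sum to the parent's squared modulus. Both are illustrative choices, and the function names are hypothetical.

```python
import math


def branch_probability(amplitudes, index_set, p):
    """Total probability of the branches in index_set under W_p(alpha) = |alpha|**p."""
    weights = [abs(a) ** p for a in amplitudes]
    return sum(weights[i] for i in index_set) / sum(weights)


# Coarse description: two alternatives with amplitudes 0.6 and 0.8.
coarse = [0.6, 0.8]

# Assumed refinement: the second alternative is split into two equal subbranches
# whose squared moduli add up to the parent's squared modulus (0.8**2).
fine = [0.6, 0.8 / math.sqrt(2), 0.8 / math.sqrt(2)]


def refinement_shift(p):
    """Change in the second alternative's total probability caused by the split."""
    before = branch_probability(coarse, [1], p)
    after = branch_probability(fine, [1, 2], p)
    return after - before


for p in (1, 2, 3):
    print(p, refinement_shift(p))
```

For p = 2 the shift is zero up to floating-point rounding. For p = 1 the split raises the alternative's probability, and for p = 3 it lowers it, although nothing about the physical alternative has changed. This is the branch-subdivision failure mode that refinement consistency is meant to block.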

This proof discipline is the central methodological standard of the paper. Each assumption must earn its place by blocking a specific failure mode.

4. Operational Probability Acceptability

4.1 Definition

A realization-weighting framework is operationally probability-acceptable if its probability assignments satisfy the following requirements.

First, they must be invariant under physically irrelevant phase changes. If a phase transformation does not alter realization-relevant physical content, it must not alter probability.

Second, they must be stable under admissible refinement. If a physical alternative is described at finer resolution, the total probability assigned to that alternative must be preserved.

Third, they must be stable under admissible coarse-graining. If subbranches are aggregated into a parent alternative, the total probability assigned to the parent must equal the total probability assigned to the admissible subbranches.

Fourth, they must be symmetric under labels with no operational content. Equal-modulus, operationally indistinguishable alternatives cannot receive unequal probabilities merely because they carry different labels.

Fifth, they must be invariant under operationally equivalent decompositions. If two decompositions cannot be distinguished by admissible realization-relevant tests, their normalized probabilities must agree.

Sixth, they must be normalized. A probability rule must support total probability one over mutually exclusive admissible alternatives.

Seventh, they must be nontrivially sensitive to amplitude structure. A rule that ignores all unequal-amplitude structure does not solve the amplitude-weighting problem.

Eighth, they must be regular enough to exclude pathological additive solutions. Minimal continuity or measurability is required for physical interpretability.

Ninth, they must be non-circular. The admissibility rules used to derive weighting cannot secretly encode the target conclusion.

These conditions define the theorem class. A framework outside them may still be a mathematical construction, but it does not count here as an operationally acceptable realization-probability theory.

4.2 Acceptability principle

Principle 4.1 — Operational Probability Acceptability.

A weighting rule that claims physical probability meaning may not assign different probabilities to descriptions that differ only by operationally irrelevant representation, refinement, aggregation, labeling, or pathological construction.

This principle is not an additional Born-rule assumption. It does not mention |α|². It says only that physical probability must not be hostage to description choices that do not alter the physical alternative being weighted.

The principle is also weaker than unrestricted universality. It does not require every possible mathematical object to behave this way. It applies only to weighting rules that claim to assign stable physical probabilities in realization-law frameworks.

The remainder of the paper shows that this principle is strong enough to force the structural conditions that yield quadratic weighting.

4.3 The acceptability filter

The acceptability filter excludes rules that fail to function as stable operational probability.

A representation-sensitive rule is excluded because it assigns different probabilities to physically equivalent descriptions.

A branch-splitting-sensitive rule is excluded because it allows probability to change under admissible refinement.

An aggregation-sensitive rule is excluded because it allows probability to change under coarse-graining.

A label-sensitive rule is excluded because it permits names or symbolic positions to alter probability without physical distinction.

A non-normalized rule is excluded from the class of probability rules, although it may define some other kind of score or tendency.

A pathological rule is excluded because it has no stable empirical or physical dependence on amplitude.

A circular rule is excluded because it hides the desired weighting conclusion inside the admissibility structure.

The filter is not designed to make alternatives impossible by definition. It is designed to force alternatives to identify the cost of their escape. If a nonquadratic theory rejects refinement consistency, for example, it must explain why branch subdivision may alter probability without rendering probability representation-dependent.

4.4 Acceptability versus universality

Operational probability acceptability should not be confused with unrestricted mathematical universality.

The theorem does not claim that every possible mathematical function must pass the acceptability filter. It does not claim that every interpretation of quantum mechanics must adopt the same realization target. It does not claim that a framework which rejects single-outcome realization is internally incoherent simply because it falls outside the theorem class.

The claim is narrower and stronger within its domain. A rule failing the acceptability filter does not function as stable operational probability in a realization-law framework. If it changes probabilities under physically irrelevant phase, refinement, aggregation, labeling, or equivalent redescription, then it is not assigning probability to physical alternatives alone.

This distinction protects the theorem from overclaiming. It also protects it from trivialization. The result is not merely that quadratic weighting follows under assumptions. It is that the assumptions express what operational probability requires in the theorem class.

5. Necessity of Phase Insensitivity

5.1 Requirement

The first requirement is phase insensitivity.

For every phase θ whose transformation is operationally irrelevant at the realization-probability level, the weighting rule must satisfy:

W(α) = W(eⁱᶿα).

This requirement does not say that phase is never physically relevant in quantum theory. Relative phase can be physically significant in interference contexts. The requirement is narrower. It concerns phase transformations that do not alter the realization-relevant probability content of the branch under the admissible equivalence relation.

Thus the requirement should be read as operational phase insensitivity: weighting cannot depend on a phase label when that phase label has no physical probability significance in the stated context.

5.2 Lemma 5.1 — Phase Insensitivity Necessity

Lemma 5.1 — Phase Insensitivity Necessity.

Any operationally probability-acceptable realization-weighting framework must be phase-insensitive under physically irrelevant phase transformations.

5.3 Proof

Assume that there exists an amplitude α and a phase θ such that α and eⁱᶿα are operationally equivalent at the realization-probability level, but:

W(α) ≠ W(eⁱᶿα).

By hypothesis, the transformation from α to eⁱᶿα introduces no admissible physical distinction relevant to realization probability. The two descriptions therefore represent the same probability-relevant content under the operational equivalence relation.

If the weighting rule assigns different weights to them, then the rule is responding to a phase label rather than to a physical difference in the realization alternative. That violates operational probability acceptability, which requires probability assignments to remain invariant under physically irrelevant redescription.

Therefore, whenever α and eⁱᶿα are operationally equivalent at the realization-probability level, the weighting rule must satisfy:

W(α) = W(eⁱᶿα).

This proves phase insensitivity under the stated operational condition.

5.4 Failure mode if rejected

Rejecting phase insensitivity permits probability to depend on phase labels without physical distinction. Such a rule can assign different probabilities to two descriptions that are operationally equivalent for the weighting question.

A simple example would be a rule of the form:

W(α) = │α│²(1 + ε cos arg α),

where ε ≠ 0 and │ε│ ≤ 1, so that W remains nonnegative. If arg α can be changed by an operationally irrelevant phase transformation, then the assigned weight changes without a physical probability difference. The result is representation-sensitive probability.

That failure mode is not merely aesthetic. It means probability can be altered by changing a descriptive phase convention. Such a rule cannot function as stable operational probability in the theorem class.
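The failure mode can be checked numerically. The following Python sketch is illustrative only: the function names and the value ε = 0.3 are assumptions introduced here and are not part of the paper's formalism. It compares the phase-sensitive rule above with the quadratic rule under the transformation α → eⁱᶿα.

```python
import cmath
import math

EPSILON = 0.3  # hypothetical value chosen for illustration


def w_phase_sensitive(alpha: complex, eps: float = EPSILON) -> float:
    """Phase-sensitive rule W(alpha) = |alpha|^2 * (1 + eps * cos(arg alpha))."""
    return abs(alpha) ** 2 * (1.0 + eps * math.cos(cmath.phase(alpha)))


def w_quadratic(alpha: complex) -> float:
    """Quadratic rule W(alpha) = |alpha|^2, which ignores phase."""
    return abs(alpha) ** 2


def rephase(alpha: complex, theta: float) -> complex:
    """Apply the phase transformation alpha -> exp(i * theta) * alpha."""
    return cmath.exp(1j * theta) * alpha


alpha = complex(0.6, 0.0)
rotated = rephase(alpha, math.pi / 2)

# The modulus is unchanged, so the quadratic weight is unchanged.
quadratic_shift = w_quadratic(rotated) - w_quadratic(alpha)
# The phase-sensitive weight moves from 0.36 * (1 + eps) to about 0.36.
sensitive_shift = w_phase_sensitive(rotated) - w_phase_sensitive(alpha)
```

In this sketch the quadratic weight is stable under rephasing, while the phase-sensitive weight shifts by roughly ε│α│², even though the modulus never changes.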

5.5 Dependency note

Lemma 5.1 depends on the operational equivalence relation and on the requirement that probability assignments be invariant under physically irrelevant redescription. It does not require the Born rule. It does not assume W(α) = │α│². It establishes only that W must factor through amplitude modulus whenever phase carries no realization-probability content.

The result is therefore a necessity condition, not a probability derivation by itself. Its role is to support the later reduction:

W(α) = f(│α│),

which will become one input to the additive measure argument.

6. Necessity of Refinement Consistency

6.1 Requirement

The second requirement is refinement consistency.

Suppose an admissible branch α is refined into admissible subbranches α₁, …, αₘ such that the refined family represents a finer-grained description of the same physical alternative and satisfies

∑ⱼ │αⱼ│² = │α│².

The requirement is that total weight be preserved under that admissible refinement:

W(α) = ∑ⱼ W(αⱼ).

This requirement should be read carefully. It does not say that every arbitrary formal subdivision of an amplitude must count as physically meaningful refinement. It applies only to admissible refinements: refinements that preserve the relevant physical alternative while altering only its descriptive resolution. The point is not to impose a mathematical convenience. It is to prevent probability from depending on branch subdivision that introduces no new realization-relevant physical content.

Refinement consistency is therefore a conservation principle for physical probability under finer admissible description.

6.2 Lemma 6.1 — Refinement Consistency Necessity

Lemma 6.1 — Refinement Consistency Necessity.

Any operationally probability-acceptable weighting rule must preserve total weight under admissible refinement of the same physical alternative.

6.3 Proof

Let α be an admissible branch corresponding to a physical alternative A. Let α₁, …, αₘ be an admissible refinement of α such that the refined family represents the same physical alternative A at finer descriptive resolution.

By the meaning of admissible refinement, the refinement does not create new mutually exclusive physical alternatives that were absent before. It replaces one admissible description of A by a finer admissible description of A. Therefore the total probability assigned to A must remain invariant under that change of descriptive resolution if the weighting rule is to function as stable physical probability.

Assume, to the contrary, that

W(α) ≠ ∑ⱼ W(αⱼ).

Then the total probability assigned to A depends on whether A is represented in coarse or refined form. That means the weighting rule permits the same physical alternative to change probability under a purely admissible subdivision of description. The probability assignment is then no longer tracking physical content alone. It is tracking branch bookkeeping.

This violates operational probability acceptability, because operational probability must remain invariant under admissible redescription that does not alter realization-relevant physical content. Therefore any operationally acceptable realization-weighting framework must satisfy

W(α) = ∑ⱼ W(αⱼ)

for admissible refinements.

This establishes refinement consistency as a necessary condition.

6.4 Failure mode if rejected

Rejecting refinement consistency creates branch-splitting arbitrariness.

A simple pathology illustrates the problem. Suppose a rule assigns one probability to a parent branch α and a different total probability to a refined version α₁, …, αₘ of that same branch. Then an agent could alter the assigned probability of a physical alternative merely by choosing to describe it with more or fewer admissible subbranches. Such a theory would make probability depend on representational granularity rather than physical structure.

This failure is not minor. It means that realization probability can be manipulated by descriptive refinement. A probability rule with that property cannot be regarded as stable physical probability in the theorem class.
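A numerical sketch makes the pathology concrete. The power-law family W(α) = │α│ᵖ used below is a hypothetical test family introduced only for illustration, as are the function names. The sketch splits a parent branch into m equal subbranches that conserve squared modulus and measures how far the total weight drifts.

```python
import math


def power_weight(alpha: complex, p: float) -> float:
    """Hypothetical family W_p(alpha) = |alpha|^p; p = 2 is the quadratic rule."""
    return abs(alpha) ** p


def equal_refinement(alpha: complex, m: int) -> list:
    """Split alpha into m equal subbranches with sum |alpha_j|^2 = |alpha|^2."""
    return [alpha / math.sqrt(m)] * m


def refinement_defect(alpha: complex, m: int, p: float) -> float:
    """Return sum_j W_p(alpha_j) - W_p(alpha); zero means weight is conserved."""
    parts = equal_refinement(alpha, m)
    return sum(power_weight(a, p) for a in parts) - power_weight(alpha, p)


alpha = complex(0.8, 0.0)
# Defect for a four-way split under three exponents.
defects = {p: refinement_defect(alpha, m=4, p=p) for p in (1.0, 2.0, 4.0)}
```

For this family an equal m-way split rescales the total weight by m^(1 − p/2), so the defect vanishes only at p = 2. With p < 2 subdivision inflates the weight of the alternative, and with p > 2 it deflates it.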

6.5 Dependency note

Lemma 6.1 depends on three elements.

First, it depends on the notion of admissible refinement: refinement must preserve the relevant physical alternative while changing only its descriptive resolution.

Second, it depends on operational probability acceptability: the principle that physically irrelevant changes of description cannot alter the assigned probability.

Third, it depends on the identification of the refined family with the same realization-relevant alternative as the parent branch. Without that identification, the refinement would not be admissible in the sense required here.

The lemma does not assume the Born rule. It establishes only that whatever the weighting rule is, it must preserve total weight under admissible branch refinement.

6.6 Scope control

The result is not an unrestricted statement about every formal decomposition of a state vector. It applies only to admissible refinements inside realization-weighting frameworks that claim operational probability meaning. A framework may reject admissible refinement as a legitimate operation, but then it leaves the theorem class and must explain how probability remains stable under changes in descriptive resolution. The present paper does not forbid that move. It identifies its cost.

7. Necessity of Coarse-Graining Consistency

7.1 Requirement

The third requirement is coarse-graining consistency.

Suppose admissible subbranches α₁, …, αₘ are aggregated into a parent branch α such that the parent represents the same physical alternative at lower descriptive resolution and satisfies

│α│² = ∑ⱼ │αⱼ│².

The requirement is that total weight be preserved under admissible aggregation:

W(α) = ∑ⱼ W(αⱼ).

Coarse-graining consistency is the converse stability requirement to refinement consistency. If refinement moves from a coarse description to a finer one, coarse-graining moves from a finer description to a coarser one. If the weighting rule is to remain physically meaningful across admissible changes of resolution, both directions must preserve total probability.

7.2 Lemma 7.1 — Coarse-Graining Consistency Necessity

Lemma 7.1 — Coarse-Graining Consistency Necessity.

Any operationally probability-acceptable weighting rule must preserve total weight under admissible aggregation.

7.3 Proof

Let α₁, …, αₘ be admissible subbranches representing finer-grained descriptions of a single physical alternative A, and let α be the admissible parent branch representing that same alternative A at lower resolution.

Since the parent and refined families refer to the same realization-relevant alternative, operational probability acceptability requires that the probability assigned to A not depend on whether A is described in aggregated or resolved form.

Assume, to the contrary, that

W(α) ≠ ∑ⱼ W(αⱼ).

Then total probability changes when the description is coarse-grained. This means the weighting rule is sensitive to observational granularity rather than only to physical content. The same physical alternative receives one probability under refined description and a different probability under aggregated description.

That violates the requirement that operationally equivalent descriptions induce the same realization probability. Therefore any operationally acceptable weighting rule must satisfy

W(α) = ∑ⱼ W(αⱼ)

for admissible coarse-grainings.

This proves coarse-graining consistency as a necessary condition.

7.4 Failure mode if rejected

Rejecting coarse-graining consistency creates aggregation instability.

A weighting rule with this defect allows probability to change simply because one chooses to aggregate subbranches into a parent description. That means the probability assignment is not invariant under a basic change in descriptive resolution. Such a rule fails to represent stable physical probability. It instead represents a bookkeeping-sensitive quantity whose value depends on whether branches are counted separately or jointly.

This is the inverse pathology of branch-splitting arbitrariness. Together, the two pathologies show that stable probability requires both refinement and coarse-graining consistency.
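The aggregation instability can also be seen at the level of normalized probabilities. The sketch below is a minimal illustration under stated assumptions: the rule W(α) = │α│, the two-alternative decomposition, and all function names are hypothetical choices made here. Alternative A is described either by two subbranches of squared modulus 0.25 each or by one aggregated branch of squared modulus 0.5, alongside a fixed alternative B of squared modulus 0.5.

```python
import math


def normalize(weights):
    """Turn nonnegative weights into probabilities P_i = W_i / sum_j W_j."""
    total = sum(weights)
    return [w / total for w in weights]


def prob_of_a(weight_rule, a_branches, b_branch):
    """Probability of alternative A when A is described by the amplitudes in a_branches."""
    weights = [weight_rule(a) for a in a_branches] + [weight_rule(b_branch)]
    probs = normalize(weights)
    return sum(probs[: len(a_branches)])


def linear_rule(alpha):
    """Hypothetical nonquadratic rule W(alpha) = |alpha|."""
    return abs(alpha)


def quadratic_rule(alpha):
    """Quadratic rule W(alpha) = |alpha|^2."""
    return abs(alpha) ** 2


fine_a = [0.5, 0.5]          # A resolved into two subbranches
coarse_a = [math.sqrt(0.5)]  # A aggregated into one parent branch
b = math.sqrt(0.5)           # alternative B, unchanged by the aggregation

linear_fine = prob_of_a(linear_rule, fine_a, b)
linear_coarse = prob_of_a(linear_rule, coarse_a, b)
quadratic_fine = prob_of_a(quadratic_rule, fine_a, b)
quadratic_coarse = prob_of_a(quadratic_rule, coarse_a, b)
```

Under the linear rule the probability of A is about 0.586 in resolved form and 0.5 in aggregated form, although nothing physical has changed. Under the quadratic rule both descriptions give 0.5.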

7.5 Dependency note

Lemma 7.1 depends on the same core principle as Lemma 6.1: admissible changes of descriptive resolution may not alter the total probability assigned to a fixed physical alternative.

It depends on the existence of admissible coarse-graining maps, on the identification of the subbranch family with the same parent alternative, and on operational probability acceptability. It does not assume any specific functional form for W.

7.6 Scope control

As before, the claim is restricted. It concerns admissible coarse-grainings in realization-weighting frameworks that claim to assign stable physical probabilities. A formalism that denies the legitimacy of admissible aggregation may escape the theorem class, but only by accepting that probability depends on description at different observational scales. The present theorem does not forbid that position; it classifies it as a departure from operational probability acceptability.

8. Necessity of Symmetry and Operational Invariance

8.1 Symmetry requirement

The fourth requirement is symmetry under labels without operational content.

If two admissible branches have equal modulus and are operationally indistinguishable at the realization-probability level, then they must receive equal weight. Formally, if α₁ and α₂ satisfy

│α₁│ = │α₂│

and the corresponding branches are operationally equivalent apart from labeling, then

W(α₁) = W(α₂).

This requirement does not say that equal modulus always suffices for equal weight in every imaginable context. It says that when equal-modulus branches are also operationally indistinguishable for the probability question at hand, unequal weighting would amount to assigning physical significance to labels or symbolic placement alone.

8.2 Lemma 8.1 — Symmetry Necessity

Lemma 8.1 — Symmetry Necessity.

Any operationally acceptable label-invariant weighting framework must assign equal weights to equal-modulus, operationally equivalent branches.

8.3 Proof

Let α₁ and α₂ be branch amplitudes associated with admissible branches that are operationally equivalent for realization probability and satisfy

│α₁│ = │α₂│.

Assume, for contradiction, that

W(α₁) ≠ W(α₂).

Because the branches are operationally equivalent, the difference in assigned weight cannot be attributed to any admissible physical distinction relevant to realization. Because the moduli are equal, it also cannot be attributed to unequal amplitude magnitude. The only remaining basis for unequal weighting is label, symbolic position, or other representational residue.

But a label-invariant weighting framework may not assign different probabilities on that basis. Therefore unequal weighting violates the framework’s own claim to label invariance.

Hence any operationally acceptable label-invariant framework must satisfy

W(α₁) = W(α₂)

for equal-modulus operationally equivalent branches.

8.4 Operational invariance requirement

The fifth requirement is operational invariance.

If two admissible decompositions are operationally equivalent at the level relevant to realization probability, then they must induce identical normalized probabilities. Formally, if two decompositions D and D′ are operationally equivalent, then their induced probability assignments must agree branchwise under the relevant equivalence map.

Operational invariance is the broader principle of which symmetry is a special case. Symmetry handles equal-modulus branches under label change. Operational invariance handles equivalent decompositions more generally, including different but probability-equivalent resolutions of the same physical content.

8.5 Lemma 8.2 — Operational Invariance Necessity

Lemma 8.2 — Operational Invariance Necessity.

Any physically meaningful realization-weighting rule must assign identical normalized probabilities to operationally equivalent decompositions.

8.6 Proof

Let D and D′ be admissible decompositions that are operationally equivalent for realization probability. By definition, no admissible realization-relevant test distinguishes them at the level of probability assignment.

Assume, for contradiction, that the normalized probabilities induced by D and D′ differ. Then there exists at least one branch-equivalence class whose assigned probability changes under passage from D to D′.

Since D and D′ are operationally equivalent, this difference cannot reflect a physical difference relevant to realization probability. The weighting rule is therefore responding to representation rather than to physical content. That is incompatible with the claim that the rule assigns physical probabilities.

Therefore operationally equivalent decompositions must induce identical normalized probabilities.

8.7 Failure mode if rejected

Rejecting symmetry yields label-sensitive probability. Equal alternatives may receive unequal weights solely because they are named or positioned differently.

Rejecting operational invariance yields representation-sensitive probability more broadly. Equivalent decompositions can then produce different probabilities without a physical difference in the realization alternatives.

In both cases, the weighting rule ceases to represent stable physical probability. It instead becomes sensitive to symbolic structure, descriptive placement, or decomposition choice.
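Label sensitivity can be illustrated with a deliberately artificial rule. In the sketch below, the position-dependent rule, the branch names "left" and "right", and the helper functions are all hypothetical constructions introduced for illustration. Two equal-modulus branches are listed in two different orders, and the probability attached to each named branch is compared.

```python
def label_sensitive_probs(amplitudes):
    """Hypothetical rule: weight |alpha|^2 scaled by (1 + position in the list)."""
    weights = [(1 + i) * abs(a) ** 2 for i, a in enumerate(amplitudes)]
    total = sum(weights)
    return [w / total for w in weights]


def quadratic_probs(amplitudes):
    """Quadratic rule: weight |alpha|^2, independent of list position."""
    weights = [abs(a) ** 2 for a in amplitudes]
    total = sum(weights)
    return [w / total for w in weights]


def prob_by_label(rule, branches, order):
    """Map each branch name to its probability when branches are listed in `order`."""
    probs = rule([branches[name] for name in order])
    return dict(zip(order, probs))


# Two equal-modulus branches; only the listing order differs between descriptions.
branches = {"left": 2 ** -0.5, "right": 2 ** -0.5}

sensitive_one = prob_by_label(label_sensitive_probs, branches, ["left", "right"])
sensitive_two = prob_by_label(label_sensitive_probs, branches, ["right", "left"])
quadratic_one = prob_by_label(quadratic_probs, branches, ["left", "right"])
quadratic_two = prob_by_label(quadratic_probs, branches, ["right", "left"])
```

The position-dependent rule gives the branch named "left" probability 1/3 in one listing and 2/3 in the other, while the quadratic rule gives 1/2 in both.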

8.8 Dependency note

Lemma 8.1 depends on label invariance, equal modulus, and operational indistinguishability of the relevant branches. Lemma 8.2 depends on the operational equivalence relation and on the requirement that probability track physical alternatives rather than representational form.

Neither lemma assumes the Born rule. They establish only that physically meaningful probability must remain invariant under relabeling and under passage between operationally equivalent decompositions.

8.9 Scope control

These lemmas do not claim that every equal-modulus branch in every possible interpretive framework must receive equal weight. They apply inside realization-weighting frameworks that accept operational probability as their target and that recognize operational equivalence as a meaningful constraint.

A framework that rejects operational invariance may still define some weighting rule, but it must then explain why probability may vary across operationally equivalent descriptions without ceasing to be physical probability. That burden is the cost of escape.

9. Necessity of Normalization and Nontriviality

9.1 Normalization requirement

The sixth requirement is normalization.

For a normalized admissible decomposition, the assigned probabilities must satisfy

∑ᵢ Pᵢ = 1,

with

Pᵢ = W(αᵢ)∕∑ⱼ W(αⱼ).

Normalization is not optional if W is to function as a probability-bearing rule. Without it, W may define a ranking, burden, salience score, or some other scalar assignment, but not probability in the ordinary operational sense.

9.2 Lemma 9.1 — Normalization Necessity

Lemma 9.1 — Normalization Necessity.

Any probability-bearing realization-weighting rule must support normalized total probability.

9.3 Proof

A probability assignment over a set of mutually exclusive admissible alternatives must exhaust the total probability space represented by that set. If the assigned total is not normalizable to one, then the rule fails to produce a complete probability distribution over the admissible alternatives.

Formally, suppose W assigns nonnegative branch weights but no normalization is available, for example because the total ∑ⱼ W(αⱼ) is zero or fails to be finite. Then the resulting assignment does not determine probabilities Pᵢ satisfying

∑ᵢ Pᵢ = 1.

In that case, W may still rank branches or measure relative tendency, but it does not by itself constitute a probability rule.

Since the theorem class concerns realization-weighting frameworks that claim physical probability meaning, normalization is required.

9.4 Nontriviality requirement

The seventh requirement is nontriviality.

The weighting rule must distinguish at least some unequal-amplitude decompositions. A rule that assigns equal probabilities to all branches regardless of amplitude does not solve the amplitude-weighting problem. It erases it.

More precisely, if the framework claims that amplitude structure is realization-relevant, then there must exist admissible unequal-amplitude decompositions for which the assigned probabilities are not branch-count uniform.

9.5 Lemma 9.2 — Nontriviality Necessity

Lemma 9.2 — Nontriviality Necessity.

Any realization-weighting rule intended to track amplitude structure must not collapse all admissible alternatives into branch-count uniformity.

9.6 Proof

Assume a weighting rule claims to represent realization probability in a framework where branch amplitudes are physically meaningful inputs. Suppose, however, that for every admissible decomposition the rule assigns weights independently of amplitude structure and depends only on branch count.

Then unequal amplitudes never affect the probability assignment. The rule therefore fails to track the very structure it is supposed to weight. In such a case, the weighting rule does not resolve the amplitude problem. It bypasses it by refusing to let amplitude matter.

A realization-weighting framework that claims amplitude-sensitive probability must therefore satisfy nontriviality: there must exist at least one admissible unequal-amplitude decomposition for which amplitude structure affects the assigned probabilities.

9.7 Failure modes

Without normalization, W is not a probability rule. It may be a score or ordering function, but it cannot represent a complete probability assignment over mutually exclusive admissible alternatives.

Without nontriviality, amplitude structure becomes irrelevant. The rule degenerates into branch-count uniformity or some other amplitude-insensitive assignment. Such a rule cannot claim to have derived or explained physical probability from amplitude structure.
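Both failure modes can be sketched in a few lines. The code below is a minimal illustration, assuming hypothetical function names and a two-branch decomposition with amplitudes 0.6 and 0.8. It implements Pᵢ = W(αᵢ)∕∑ⱼ W(αⱼ), rejects weight assignments that cannot be normalized, and contrasts a branch-count rule with the quadratic rule.

```python
def probabilities(weight_rule, amplitudes):
    """Return P_i = W(alpha_i) / sum_j W(alpha_j), or raise if no normalization exists."""
    weights = [weight_rule(a) for a in amplitudes]
    total = sum(weights)
    if any(w < 0 for w in weights) or not (0 < total < float("inf")):
        raise ValueError("weights cannot be normalized to total probability one")
    return [w / total for w in weights]


def branch_count_rule(alpha):
    """Amplitude-insensitive rule: every branch receives the same weight."""
    return 1.0


def quadratic_rule(alpha):
    """Quadratic rule W(alpha) = |alpha|^2."""
    return abs(alpha) ** 2


amplitudes = [0.6, 0.8]  # unequal amplitudes with 0.36 + 0.64 = 1

uniform = probabilities(branch_count_rule, amplitudes)
weighted = probabilities(quadratic_rule, amplitudes)
```

The branch-count rule normalizes without difficulty but returns equal probabilities whatever the amplitudes are, which is the nontriviality failure. A rule whose weights sum to zero or diverge is rejected before any probability is produced, which is the normalization failure.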

9.8 Dependency note

Lemma 9.1 depends only on the fact that the theorem class concerns probability-bearing frameworks. Lemma 9.2 depends on the additional claim that amplitude structure is realization-relevant. If a framework denies that amplitudes are relevant to probability, it exits the theorem class rather than defeating the result.

9.9 Scope control

These lemmas do not prove quadratic weighting by themselves. They establish two necessary preconditions for any probability-bearing realization-weighting rule: the assignment must normalize, and it must be nontrivially sensitive to amplitude structure if it claims to solve the amplitude-weighting problem.

They therefore constrain the theorem class without yet determining W(α) uniquely.

10. Necessity of Regularity and Non-Circularity

10.1 Regularity requirement

The eighth requirement is regularity.

The weighting rule W must be measurable or continuous as a function of │α│. That is, once phase insensitivity reduces W to a function of amplitude modulus, that function must be regular enough to exclude pathological additive constructions.

Regularity is required because the later additive-measure argument will produce an additive functional equation on squared modulus, of the kind known as Cauchy's functional equation. Without continuity or measurability, that equation admits pathological solutions that can be defined only nonconstructively and that are physically uninterpretable.

10.2 Lemma 10.1 — Regularity Necessity

Lemma 10.1 — Regularity Necessity.

Any physically interpretable realization-weighting rule must satisfy minimal regularity sufficient to exclude pathological additive solutions.

10.3 Proof

Suppose W is intended to assign physically meaningful probabilities as a function of branch amplitude. Physical interpretability requires that small, controlled changes in admissible amplitude structure do not produce uncontrolled, nonmeasurable, or radically discontinuous changes in assigned probability unless some independently justified physical threshold is present.

If W lacks minimal regularity, then once the functional equation for additivity is obtained, there exist pathological additive solutions whose values cannot be tracked by ordinary physical continuity, calibration, or empirical approximation. Such functions may satisfy formal algebraic constraints while failing to provide stable dependence on experimentally meaningful input.

A rule with that property cannot function as a physically interpretable realization-probability law. Therefore at least minimal regularity—measurability or continuity—is required.

10.4 Non-circularity requirement

The ninth requirement is non-circularity.

The admissibility conditions used to derive the weighting rule must not already encode the target conclusion. If admissibility is defined in such a way that only quadratic weighting can satisfy it by construction, then the derivation does not derive the Born rule. It presupposes it in disguised form.

Non-circularity therefore requires that admissibility, refinement, and coarse-graining be defined independently of the target conclusion W(α) = │α│².

10.5 Lemma 10.2 — Non-Circularity Necessity

Lemma 10.2 — Non-Circularity Necessity.

Any genuine derivation of a weighting rule must define admissibility independently of the target weighting conclusion.

10.6 Proof

Assume, to the contrary, that admissibility is defined so that quadratic weighting is already privileged at the level of branch grammar, refinement rule, metric structure, or normalization convention.

Then the subsequent theorem showing that quadratic weighting follows from admissibility does not derive the rule from independent premises. It merely reformulates the conclusion already built into the definitions.

A result of that kind cannot count as a genuine derivation. It is structurally circular. Therefore any proof claiming to derive a weighting rule from admissibility must define the admissibility structure independently of the target rule.

This establishes non-circularity as a necessary condition of derivational legitimacy.

10.7 Failure modes

Rejecting regularity permits pathological additive solutions. These may satisfy the algebraic letter of the later derivation while lacking physical continuity, measurability, or empirical interpretability.

Rejecting non-circularity makes the derivation vacuous. The conclusion is no longer earned from independent constraints. It is built into the admissibility definitions from the outset.

These failure modes are distinct. Regularity protects physical interpretability. Non-circularity protects logical legitimacy.

10.8 Dependency note

Lemma 10.1 depends on the requirement that the weighting rule be physically interpretable rather than merely formally definable. Lemma 10.2 depends on the requirement that the theorem be a genuine derivation rather than a definitional restatement.

Neither lemma presupposes quadratic weighting. Each constrains the form and legitimacy of any candidate derivation.

10.9 Scope control

The regularity requirement does not insist on full smoothness, analyticity, or any unnecessarily strong functional regularity. Measurability or continuity is enough for the theorem class.

The non-circularity requirement does not forbid every use of structural constraints. It forbids only those formulations in which the target weighting rule is already encoded in the admissibility definitions. A framework may use strong admissibility conditions so long as those conditions are independently motivated and do not presuppose the conclusion.

Together, Lemmas 10.1 and 10.2 complete the necessity side of the theorem class. The next step is to show that these conditions yield additive measure representation and, from there, quadratic necessity.

11. Additive Measure Representation

11.1 Modulus reduction

The preceding necessity lemmas establish the structural conditions required for operational probability acceptability. The first mathematical consequence of those conditions is modulus reduction.

By Lemma 5.1, the weighting rule is phase-insensitive under physically irrelevant phase transformations. Therefore, on the operational equivalence class relevant to realization weighting, the value of W(α) may depend only on the modulus of α. There thus exists a function f defined on [0, 1] such that

W(α) = f(│α│).

This reduction is not yet the Born rule. It states only that once operationally irrelevant phase dependence has been excluded, the weighting problem becomes a problem in real-valued dependence on amplitude modulus.

It is convenient to transfer the problem to squared modulus. Define

g(x) = f(√x)

for x ∈ [0, 1].

The weighting rule may then be written equivalently as

W(α) = g(│α│²).

This reformulation is mathematically natural because refinement and coarse-graining conditions are expressed in terms of preservation of squared-modulus structure. The rest of the derivation will show that operational probability acceptability forces g to be additive and, under regularity, linear.

11.2 Lemma 11.1 — Additivity on squared modulus

Lemma 11.1 — Additivity on Squared Modulus.

Under admissible refinement consistency and admissible coarse-graining consistency, the induced function g satisfies

g(x + y) = g(x) + g(y)

for admissible x, y ≥ 0 with x + y ≤ 1, that is, for pairs x, y realized as the squared moduli of an admissible two-branch refinement.

This lemma is the bridge from qualitative structural constraints to a precise functional equation. It states that once weight is conserved under refinement and coarse-graining of the same physical alternative, the induced weighting function must behave additively on squared modulus.

11.3 Proof

Let α be an admissible parent branch whose squared modulus satisfies

│α│² = x + y,

with x, y ≥ 0 and x + y ≤ 1.

Suppose α admits an admissible refinement into two subbranches α₁ and α₂ such that

│α₁│² = x

and

│α₂│² = y.

By refinement consistency, because α₁ and α₂ constitute an admissible refinement of the same physical alternative represented by α, one must have

W(α) = W(α₁) + W(α₂).

By modulus reduction,

W(α) = g(│α│²) = g(x + y),

W(α₁) = g(│α₁│²) = g(x),

W(α₂) = g(│α₂│²) = g(y).

Substituting into the refinement-consistency equation yields

g(x + y) = g(x) + g(y).

The same identity is reinforced by coarse-graining consistency. If α₁ and α₂ are first given as admissible subbranches and α is their admissible aggregation, then coarse-graining requires exactly the same preservation of total weight. Thus the additive identity is not an artifact of one direction of description change; it is stable under both refinement and aggregation.

This proves the lemma.
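
The content of the lemma can be illustrated numerically. The sketch below assumes a hypothetical candidate family g(x) = x^(p/2), corresponding to W(α) = │α│ᵖ, and measures how far each candidate is from satisfying the additive identity for one choice of x and y. The family and the sample values are illustrative assumptions.

```python
def g(x, p):
    """Hypothetical candidate g(x) = x**(p/2), i.e. W(alpha) = |alpha|**p."""
    return x ** (p / 2)

def additivity_defect(x, y, p):
    """g(x + y) - (g(x) + g(y)); zero exactly when additivity holds at (x, y)."""
    return g(x + y, p) - (g(x, p) + g(y, p))

x, y = 0.3, 0.5  # squared moduli of two subbranches, with x + y <= 1
defects = {p: additivity_defect(x, y, p) for p in (1, 2, 3)}

for p, d in defects.items():
    print(f"p = {p}: defect = {d:+.4f}")
```

Within this family only p = 2 gives a vanishing defect. For p = 1 the refined branches together carry more weight than the parent, and for p = 3 they carry less.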

11.4 Theorem 11.1 — Additive Measure Representation

Theorem 11.1 — Additive Measure Representation.

The weighting rule induces a finitely additive nonnegative measure over admissible branch-refinement classes.

This theorem gives the weighting rule its first genuinely measure-theoretic form. Once the structural conditions of operational probability acceptability are imposed, weighting is no longer a free assignment to symbols or branches. It becomes an additive measure over admissible refinement structure.

11.5 Proof

By Lemma 5.1, W depends only on modulus and therefore may be written as g(│α│²). By Lemmas 6.1 and 7.1, total weight is preserved under admissible refinement and admissible coarse-graining. By Lemmas 8.1 and 8.2, the weight assignment is invariant under label changes and across operationally equivalent decompositions. It therefore descends from a rule on syntactic branch representatives to a rule on admissible branch-refinement classes modulo operational equivalence.

Define μ on admissible branch-refinement classes by

μ([α]) = W(α),

where [α] denotes the operational equivalence class of admissible representatives corresponding to the same realization-weighting alternative. This is well-defined because operational invariance ensures that equivalent representatives receive the same weight.

Now consider a finite family of admissibly disjoint refinement classes [α₁], …, [αₘ] which together refine a parent admissible class [α]. By refinement consistency,

W(α) = ∑ⱼ W(αⱼ).

Equivalently,

μ([α]) = ∑ⱼ μ([αⱼ]).

Thus μ is finitely additive over admissible refinement classes. Nonnegativity follows from the codomain of W. Therefore μ is a finitely additive nonnegative measure over the admissible branch-refinement structure.

This proves the theorem.

11.6 Consequence

The consequence of Theorem 11.1 is substantial. The weighting rule is no longer an arbitrary assignment attached to branches one by one. It is constrained to act as an additive measure over admissible refinement structure. That fact sharply narrows the remaining space of acceptable weighting rules.

The theorem does not yet prove that μ must be the standard squared-modulus measure. Additional regularity is still needed. But it establishes the decisive intermediate result: operationally acceptable weighting rules are measure-like in exactly the sense required for the later linearity argument.

11.7 Failure mode if rejected

If additive measure representation is rejected, then weighting ceases to be stable under admissible composition and decomposition of physical alternatives. Probability can then change under refinement or aggregation, and the weighting rule no longer represents a measure over admissible alternatives. Instead it becomes a description-sensitive branch assignment.

That failure would undo the entire rationale of the preceding necessity lemmas. The present theorem therefore marks the point at which the structural side of the argument becomes a genuine measure-theoretic constraint.
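
The failure mode can be made concrete with a small numerical sketch. It assumes the normalized form Pᵢ = W(αᵢ)/∑ⱼ W(αⱼ) and a hypothetical power-law weight W(α) = │α│ᵖ. The two-branch state and the particular refinement are illustrative assumptions.

```python
import math

def probabilities(sq_moduli, p):
    """P_i = W_i / sum_j W_j with hypothetical W = (|alpha|**2) ** (p / 2)."""
    weights = [x ** (p / 2) for x in sq_moduli]
    total = sum(weights)
    return [w / total for w in weights]

def prob_of_first_alternative(p):
    """Probability of the first alternative before and after refining it."""
    coarse = probabilities([0.5, 0.5], p)
    # Refine the first alternative into two subbranches of squared modulus 0.25.
    fine = probabilities([0.25, 0.25, 0.5], p)
    return coarse[0], fine[0] + fine[1]

for p in (1, 2, 4):
    before, after = prob_of_first_alternative(p)
    print(f"p = {p}: before = {before:.4f}, after = {after:.4f}")
```

For p = 2 the probability of the first alternative is 0.5 under both descriptions. For the other exponents it shifts when the same alternative is merely described more finely, which is the description sensitivity identified above.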

11.8 Dependency note

Theorem 11.1 depends on:

phase insensitivity, to reduce W to dependence on │α│ or │α│²;

refinement consistency, to preserve total weight under admissible subdivision;

coarse-graining consistency, to preserve total weight under admissible aggregation;

symmetry and operational invariance, to ensure the resulting assignment descends to branch-refinement classes rather than remaining notation-sensitive.

It does not depend on normalization, which plays no role in deriving additivity, nor on regularity, which is needed only for the later linearity argument. Those enter later.

11.9 Scope control

The theorem does not claim countable additivity, nor does it establish a full measure on all subspaces of a Hilbert space. Its domain is narrower: admissible branch-refinement classes in realization-weighting frameworks. That restriction is intentional. The present paper is not rederiving general measure theory on Hilbert spaces. It is deriving the probability structure forced by admissible realization decompositions.

12. Probability Acceptability Theorem

12.1 Purpose

This is the first principal theorem of the paper.

Its function is to convert the preceding necessity lemmas into a single structural result. The goal is to show that the assumptions commonly treated as premises in Born-style derivations are, in the present theorem class, consequences of a more primitive requirement: stable operational probability acceptability.

The theorem therefore answers a foundational question: what must a realization-weighting rule look like before it can count as physically meaningful probability at all?

12.2 Theorem 12.1 — Probability Acceptability Theorem

Theorem 12.1 — Probability Acceptability Theorem.

Any realization-weighting framework that claims stable operational probability meaning must satisfy, or possess structural equivalents of, the following conditions:

phase insensitivity, refinement consistency, coarse-graining consistency, symmetry, operational invariance, normalization, nontriviality, regularity, and non-circular admissibility.

This is a theorem about necessity, not convenience. It does not say that these conditions are elegant or standard. It says that without them, the framework cannot maintain stable physical probability in the realization-weighting setting.

12.3 Proof

Assume a realization-weighting framework claims to assign stable operational probabilities to admissible outcome-branch decompositions.

By the requirement that physically irrelevant phase changes not alter realization probability, and by Lemma 5.1, the framework must satisfy phase insensitivity under operationally irrelevant phase transformations.

By the requirement that admissible finer descriptions of the same physical alternative not alter total probability, and by Lemma 6.1, the framework must satisfy refinement consistency.

By the requirement that admissible aggregations of subbranches into a coarser description preserve the same physical alternative’s total probability, and by Lemma 7.1, the framework must satisfy coarse-graining consistency.

By the requirement that labels without operational content not change probability, and by Lemma 8.1, the framework must satisfy symmetry for equal-modulus operationally equivalent branches.

By the requirement that operationally equivalent decompositions induce the same probabilities, and by Lemma 8.2, the framework must satisfy operational invariance.

By the requirement that the weighting rule actually define probability, and by Lemma 9.1, the framework must satisfy normalization.

By the requirement that amplitude structure matter to a realization-weighting theory, and by Lemma 9.2, the framework must satisfy nontriviality.

By the requirement that the weighting rule be physically interpretable rather than pathological, and by Lemma 10.1, the framework must satisfy regularity.

By the requirement that the derivation be genuine rather than definitional, and by Lemma 10.2, the framework must satisfy non-circular admissibility.

Each condition therefore follows from the framework's claim to stable operational probability meaning. Accordingly, any realization-weighting framework that makes that claim must satisfy the listed conditions or possess structural equivalents that perform the same physical function.

This proves the theorem.

12.4 Consequence

The consequence of Theorem 12.1 is decisive for the logic of the paper. The structural assumptions used in the weighting derivation are no longer merely a chosen package of sufficient premises. They are imposed by the very demand that the weighting rule function as stable operational probability.

This shifts the burden on alternative frameworks. A nonquadratic proposal cannot simply reject one of the assumptions and move on. It must explain why the rejected condition was not actually required for stable physical probability, despite the corresponding pathology identified in the necessity lemmas.

12.5 Failure mode if rejected

If the theorem is rejected, the rejection must take one of two forms.

Either one rejects the claim that the framework is assigning stable operational probability at all, in which case the framework exits the theorem class.

Or one rejects one of the necessity lemmas, in which case one must accept the associated pathology: phase-label dependence, branch-splitting arbitrariness, aggregation instability, label sensitivity, representation dependence, non-normalization, amplitude-insensitive triviality, pathological irregularity, or circular derivation.

In either case, the framework no longer occupies the theorem class defined by operational probability acceptability.

12.6 Dependency note

Theorem 12.1 depends on the full chain of necessity lemmas from Sections 5–10. It does not yet depend on additive measure representation or on the later linearity argument. Its role is structural: it states what conditions are forced before the mathematical uniqueness argument begins.

12.7 Scope control

The theorem is not a universal statement over all conceivable interpretations of quantum mechanics. It applies only to realization-weighting frameworks that claim stable operational probability meaning. Frameworks that deny the target problem, deny admissible refinement, or reject operational probability as such do not fall within its scope. The theorem does not refute those frameworks. It states that they are outside the present necessity program.

13. Quadratic Necessity Theorem

13.1 Purpose

This is the second principal theorem of the paper.

Where Theorem 12.1 established the necessary structural conditions of operational probability acceptability, Theorem 13.1 derives the unique weighting rule forced by those conditions. It is the culmination of the paper’s probability argument.

The mathematical route is now short and controlled: modulus reduction, additive measure representation on squared modulus, regularity, and normalization.

13.2 Lemma 13.1 — Regular Additive Solution

Lemma 13.1 — Regular Additive Solution.

If g : [0, 1] → ℝ≥0 satisfies

g(x + y) = g(x) + g(y)

for admissible x, y ≥ 0 with x + y ≤ 1, and if g is measurable or continuous, then

g(x) = cx

for some c ≥ 0.

This is the standard linearity result for regular additive functions on a bounded interval. In the present paper it serves a precise role: it excludes the pathological nonlinear additive possibilities that would otherwise remain after Theorem 11.1.

13.3 Proof of Lemma 13.1

The proof proceeds inside the admissible domain already fixed by the paper. The function g is not an arbitrary function on an unrestricted mathematical space. It is the squared-modulus representation of an operationally acceptable realization-weighting rule. Its domain is the bounded interval [0, 1], corresponding to admissible squared-modulus weights in normalized branch decompositions. Its values lie in ℝ≥0, because the weighting rule is probability-bearing.

By Lemma 11.1, admissible refinement and coarse-graining imply the additivity relation

g(x + y) = g(x) + g(y)

for admissible x, y ≥ 0 with x + y ≤ 1.

First, set y = 0. Additivity gives

g(x) = g(x) + g(0),

so

g(0) = 0.

Next, for any positive integer n with nx ≤ 1, repeated additivity gives

g(nx) = ng(x).

In particular, let q = m∕n be a rational point of the unit interval, with integers n ≥ 1 and 0 ≤ m ≤ n. Since

1 = n(1∕n),

additivity gives

g(1) = ng(1∕n),

so

g(1∕n) = g(1)∕n.

Then

g(m∕n) = mg(1∕n) = (m∕n)g(1).

Thus, on rational points in [0, 1],

g(q) = cq,

where

c = g(1).

The remaining question is whether this rational linearity extends to all real x ∈ [0, 1]. This is precisely where the regularity requirement enters. If g is continuous, the extension follows because every real x ∈ [0, 1] is the limit of a sequence of rationals qₖ ∈ [0, 1] with qₖ → x, and continuity gives

g(x) = lim g(qₖ) = lim cqₖ = cx.

If g is measurable rather than continuous, the same conclusion holds. The nonlinear solutions of the Cauchy additive equation are non-measurable on every interval, so measurability on [0, 1] excludes them, and a measurable additive function on an interval is linear on that interval. Hence again

g(x) = cx

for all x ∈ [0, 1].

Because g(x) ∈ ℝ≥0, one has c ≥ 0. Nontriviality excludes the degenerate case c = 0 for a probability-bearing amplitude-sensitive weighting rule. Normalization later fixes the scale to c = 1.

Therefore the only regular, nonnegative, additive squared-modulus representation compatible with the admissible realization-weighting domain is

g(x) = cx.

This proves Lemma 13.1.
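
The rational step of the proof can be spot-checked with exact arithmetic. The sketch below uses Python's fractions module. The helper check_rational_linearity and the two sample rules are hypothetical names introduced for illustration. The check tests whether a given rule satisfies g(m∕n) = (m∕n)g(1) on a finite grid of rationals, which is a necessary consequence of additivity, not a proof of it.

```python
from fractions import Fraction

def check_rational_linearity(g, max_denominator=12):
    """Return True if g(m/n) == (m/n) * g(1) for all 0 <= m <= n <= max_denominator."""
    c = g(Fraction(1))
    for n in range(1, max_denominator + 1):
        for m in range(0, n + 1):
            q = Fraction(m, n)
            if g(q) != c * q:
                return False
    return True

def linear(x):
    """Hypothetical additive rule g(x) = c * x with c = 3/2."""
    return Fraction(3, 2) * x

def squared(x):
    """Hypothetical non-additive rule g(x) = x**2."""
    return x * x

print(check_rational_linearity(linear))   # True
print(check_rational_linearity(squared))  # False
```

The second rule already fails at q = 1∕2, where g(1∕2) = 1∕4 while (1∕2)g(1) = 1∕2.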

13.4 Theorem 13.1 — Quadratic Necessity Theorem

Theorem 13.1 — Quadratic Necessity Theorem.

Any operationally probability-acceptable realization-weighting framework assigns normalized branch weights according to

W(α) = │α│².

This is the main probability theorem of the paper.
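
As a minimal sketch of what the theorem's weighting rule computes, the following Python function applies W(α) = │α│² inside the normalized form Pᵢ = W(αᵢ)/∑ⱼ W(αⱼ). The function name and the sample amplitudes are illustrative assumptions. The input need not be normalized, since the ratio takes care of the overall scale.

```python
def quadratic_probabilities(amplitudes):
    """Return P_i = |alpha_i|**2 / sum_j |alpha_j|**2 for complex amplitudes."""
    weights = [abs(a) ** 2 for a in amplitudes]
    total = sum(weights)
    if total == 0:
        raise ValueError("at least one amplitude must be nonzero")
    return [w / total for w in weights]

# Unnormalized two-branch example: squared moduli 9 and 16.
probs = quadratic_probabilities([3 + 0j, 4j])
print(probs)
```

The phase of each amplitude drops out through abs, so the purely imaginary amplitude 4j contributes exactly as a real amplitude 4 would.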

13.5 Proof