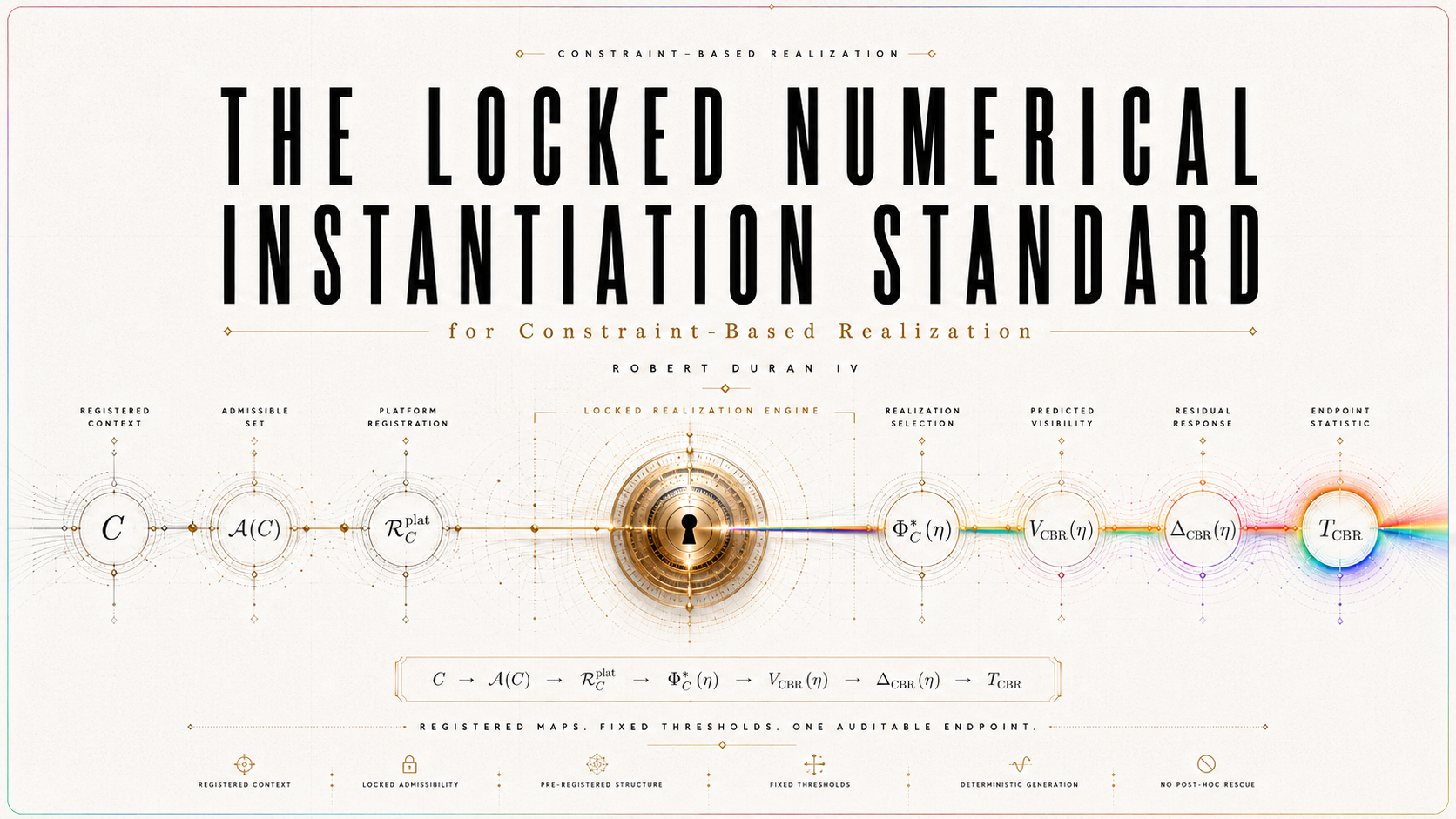

The Locked Numerical Instantiation Standard for Constraint-Based Realization | Completeness, Identifiability, Simulation Readiness, and Empirical Adjudication in a Platform-Specific CBR Dossier

Abstract

This paper develops a locked numerical instantiation standard for Constraint-Based Realization (CBR), a candidate law-form for individual quantum outcome realization. CBR treats realization as a distinct explanatory target: probability weights possible outcomes, decoherence stabilizes records, and registration makes records operationally available, but none of these by itself supplies a law of which admissible outcome is realized. In canonical form, CBR represents realization as context-fixed constrained selection,

Φ∗_C ∈ argmin_{Φ ∈ 𝒜(C)} ℛ_C(Φ), up to ≃_C,

where C is the measurement context, 𝒜(C) is the admissible candidate class, ≃_C is operational equivalence, and ℛ_C is a realization-burden functional.

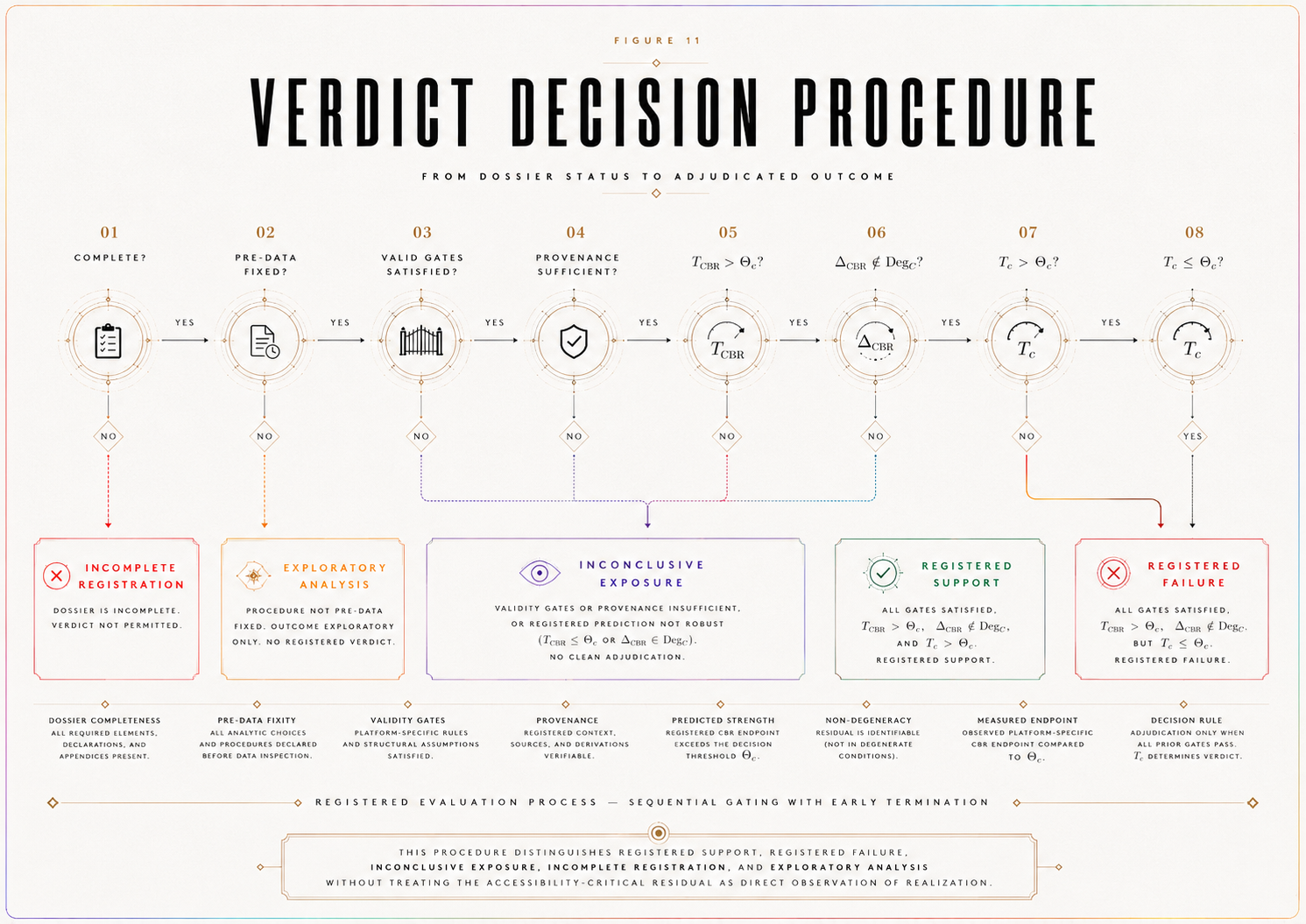

The aim of this paper is not to confirm CBR empirically, derive a universal realization law, or infer realization directly from data. Its purpose is narrower and more operational: to define when a platform-specific CBR instantiation is sufficiently specified to be simulated, audited, and prepared for empirical adjudication without post hoc alteration. The paper introduces a locked dossier standard requiring prior registration of the law-form objects, accessibility bridge, ordinary baseline, nuisance envelope, detectability threshold, endpoint functional, predicted endpoint, degeneracy operator, statistical rule, provenance certificates, validity gates, and verdict procedure. In this structure, the empirical target is not realization itself but a registered accessibility-critical endpoint: the predicted residual structure that remains after comparison with ordinary quantum, decoherence, detector, calibration, sampling, and nuisance effects.

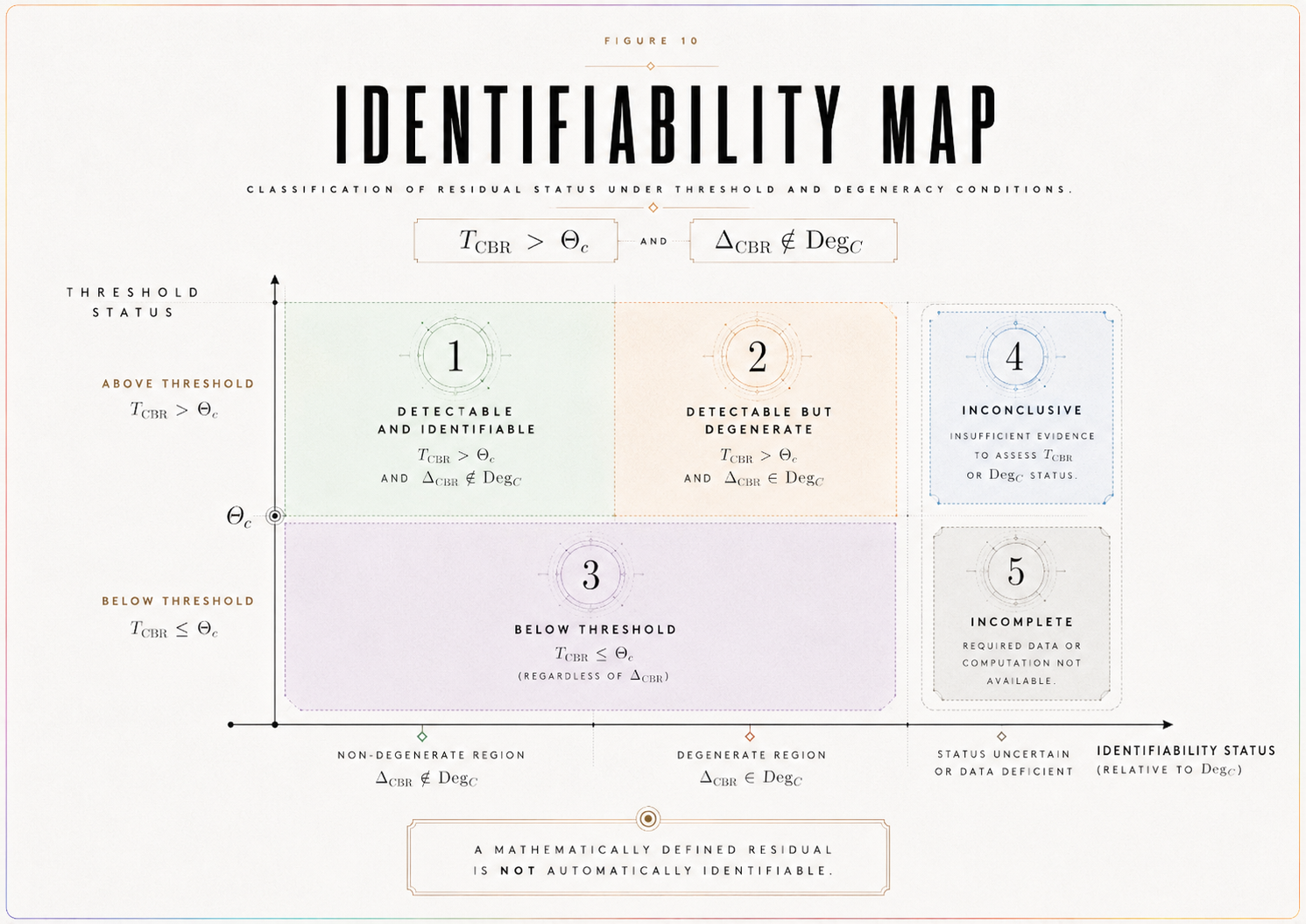

The central contribution is a theorem spine connecting numerical completeness, endpoint identifiability, simulation readiness, and adjudication readiness. A platform-specific CBR instantiation is numerically complete only when all adjudication-relevant objects are fixed before endpoint interpretation. Its endpoint is identifiable only if the predicted CBR endpoint exceeds the registered decision threshold, T_CBR > Θ_c, and the predicted residual is not absorbed by the ordinary-degeneracy operator, Δ_CBR ∉ Deg_C. It is simulation-ready only when baseline-only, CBR-positive, strong-null, inconclusive, and degeneracy scenarios can be generated from the locked dossier without adding new primary test objects. It is adjudication-ready only when the critical-path objects have sufficient provenance and the locked statistical rule can issue a verdict from an observed endpoint comparison.

The framework distinguishes completion, simulation, support, failure, and inconclusive exposure. Numerical completeness does not imply empirical confirmation. Simulation can test detectability, false-support risk, false-failure risk, nuisance sensitivity, sampling adequacy, degeneracy behavior, and strong-null logic, but it does not adjudicate nature. Public-data reanalysis can motivate constraints or test design, but it is not decisive unless accessibility calibration, baseline modeling, nuisance accounting, endpoint reconstruction, degeneracy analysis, and statistical validity are adequate. The resulting standard makes a CBR platform instantiation test-ready in a disciplined sense: it specifies what must be locked, what can count as support, what would constitute failure, what remains inconclusive, and why post hoc rescue is not permitted.

SECTION 1. Introduction — From Law-Form to Computable Instantiation

The locked-dossier protocol specifies how a CBR test must be registered before data interpretation. It fixes the empirical endpoint, baseline model class, nuisance envelope, decision threshold, endpoint statistic, predicted endpoint, validity gates, statistical rule, and verdict rule. That protocol prevents post hoc rescue and anomaly hunting.

A further question remains: What exactly is being computed in a concrete platform?

A locked dossier can state that η, I_c, V_ℬ(η), B_𝓝(η), Θ_c, T_c, and T_CBR must be fixed. But a mathematically serious CBR instantiation must do more than list those objects. It must define how they are generated from a declared measurement context.

This paper provides that next step.

It constructs a platform-specific numerical instantiation of CBR in a record-accessibility interferometric context. The aim is not to prove CBR, not to report empirical confirmation, and not to define the final universal realization-burden functional. The aim is narrower and more technical: to show how a declared context C can generate a candidate class 𝒜(C), a computable platform-level burden proxy ℛ_C^plat, an accessibility bridge from η to the observable endpoint, a baseline model class 𝔅, a nuisance envelope B_𝓝(η), a decision threshold Θ_c, and a predicted endpoint T_CBR.

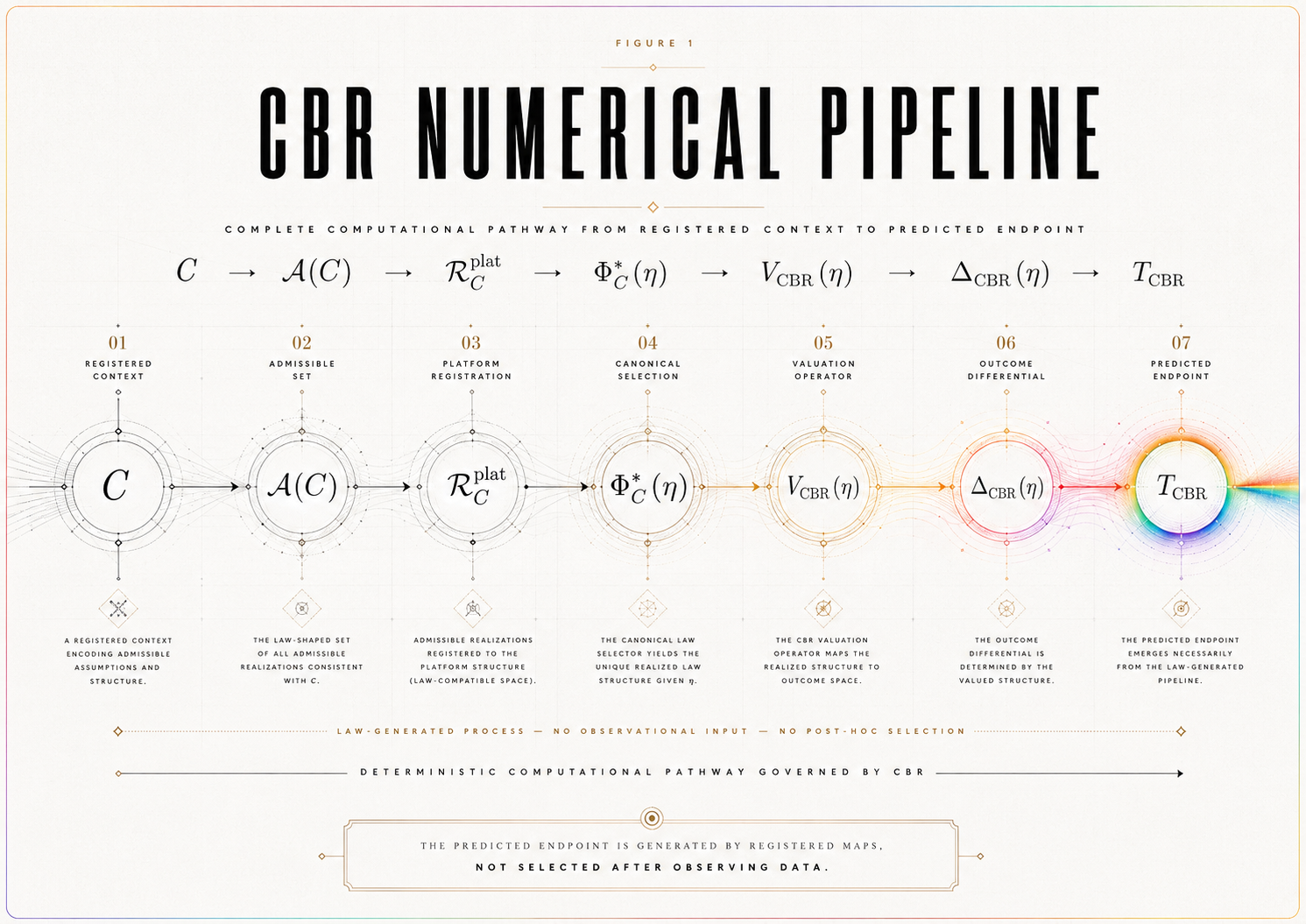

The governing pathway is:

C → 𝒜(C) → ℛ_C^plat → η bridge → Δ_CBR(η) → T_CBR.

This pathway is the paper’s central contribution. It moves CBR from locked empirical architecture to computable platform-specific instantiation.

1.1 The Problem

CBR’s canonical law-form is:

Φ∗_C ∈ argmin_{Φ ∈ 𝒜(C)} ℛ_C(Φ), up to ≃_C.

This expression supplies the formal structure of constrained selection. However, an empirical test requires platform-level objects that can be computed, bounded, simulated, or compared with data.

A critic can therefore ask: Given an actual interferometric context, how are 𝒜(C), ℛ_C^plat, η, I_c, 𝔅, V_ℬ(η), B_𝓝(η), Θ_c, T_c, and T_CBR obtained?

If those objects are not generated by registered rules, the model remains under-specified. If they are selected after data inspection, the analysis becomes exploratory. If they are too flexible, the model risks absorbing any outcome. If they are too narrow, it risks false support.

This paper addresses that problem by defining a numerical instantiation standard.

1.2 The Central Advancement

The paper’s central advancement is the construction of a computable platform-level CBR instantiation.

It provides:

a declared measurement context C,

a candidate-generation rule for 𝒜(C),

an operational equivalence relation ≃_C,

a computable burden proxy ℛ_C^plat,

an accessibility bridge from η to V_CBR(η),

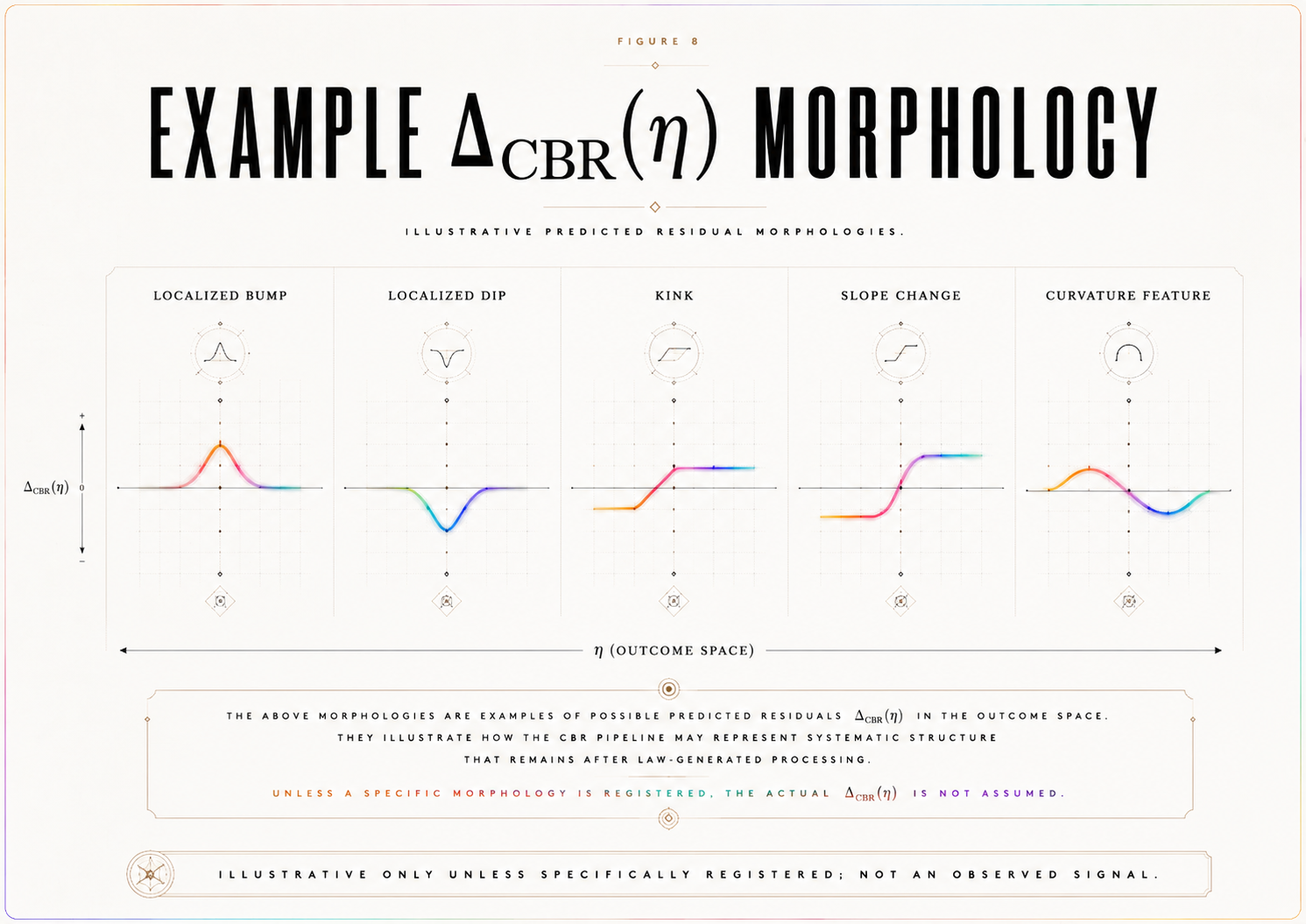

a predicted residual Δ_CBR(η),

a predicted endpoint T_CBR,

a baseline model class 𝔅,

a nuisance envelope B_𝓝(η),

a decision threshold Θ_c,

and an identifiability rule governing when the predicted residual is distinguishable from ordinary baseline and nuisance behavior.

This is stronger than a checklist. It is a generative model of the registered test objects.

1.3 Scope

This paper is platform-specific.

It does not claim to define the final universal burden functional ℛ_C for all measurement contexts. Instead, it defines a registered platform-level burden proxy ℛ_C^plat sufficient for numerical modeling, simulation, and future empirical comparison in a declared record-accessibility interferometric context.

The distinction matters. A platform proxy is not a universal law. It is a controlled instantiation of the CBR law-form under a declared context and registered assumptions.

Accordingly, the paper’s claim is conditional: If a platform-specific CBR instantiation supplies a constructively generated candidate class, a computable burden proxy, a registered accessibility bridge, and a predicted endpoint, then it becomes numerically executable in that declared context.

1.4 Main Contribution

The paper contributes eight objects to the CBR empirical-execution sequence.

First, it gives a constructive rule for 𝒜(C).

Second, it defines a computable platform burden proxy ℛ_C^plat.

Third, it states how η enters the burden proxy nontrivially.

Fourth, it defines the bridge:

η → ℛ_C^plat → Φ∗_C(η) → V_CBR(η) → Δ_CBR(η).

Fifth, it defines a baseline model class 𝔅, not merely a single idealized baseline curve.

Sixth, it defines the nuisance envelope and critical nuisance bound.

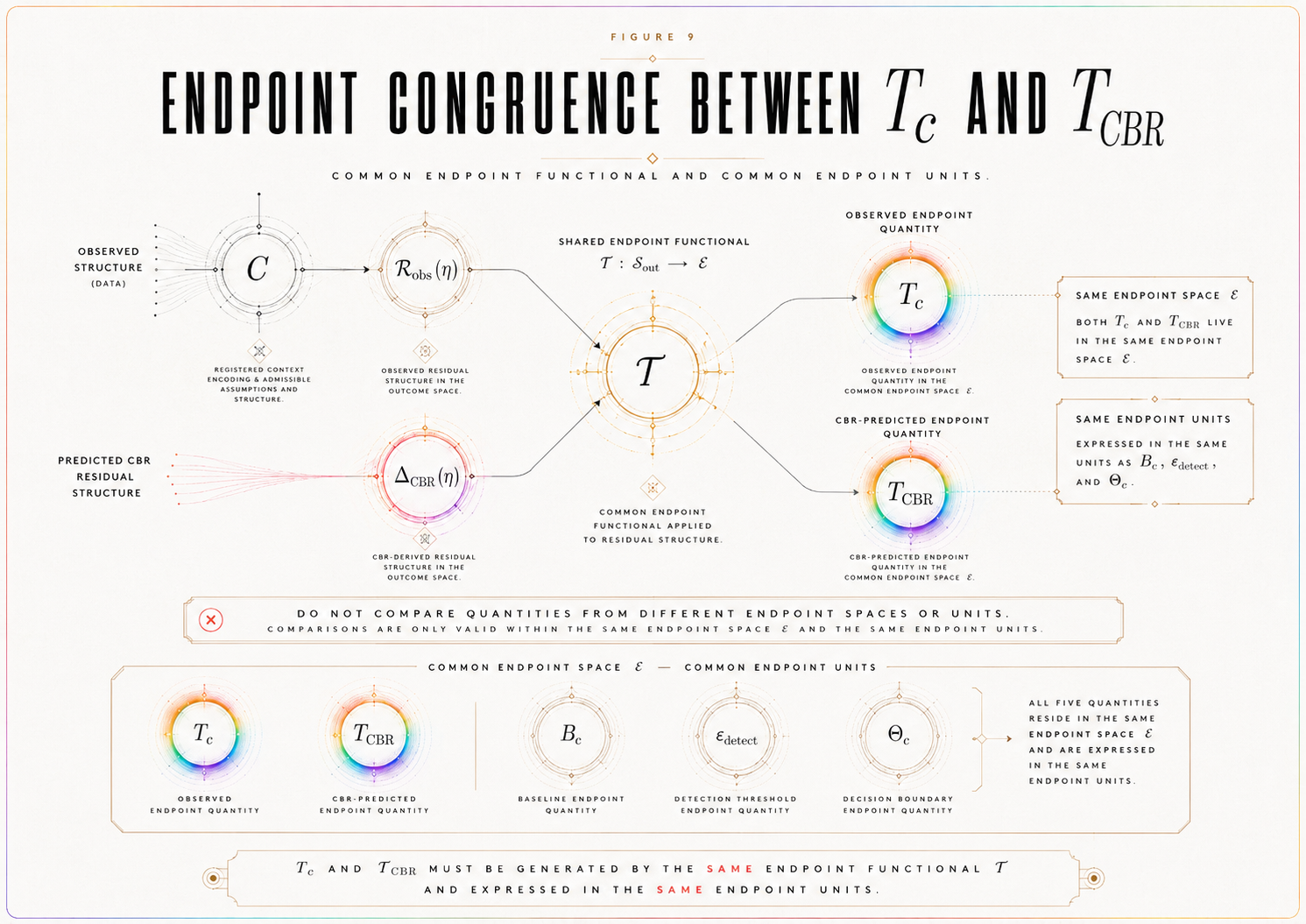

Seventh, it defines endpoint congruence between T_c and T_CBR.

Eighth, it states the numerical-completeness, bridge-computability, and platform-executability conditions required before the model can be simulated or compared with data.

1.5 Principle — Computable Instantiation

A platform-specific CBR instantiation is computable only if every primary object in the pathway C → 𝒜(C) → ℛ_C^plat → η bridge → Δ_CBR(η) → T_CBR is defined by a registered functional rule before data interpretation.

This principle prevents the paper from mistaking notation for computation. Naming 𝒜(C), ℛ_C^plat, or T_CBR is not enough. The model must specify how those objects are generated, evaluated, and locked.

1.6 Transition

The first required object is the declared measurement context C. Without a fixed platform context, none of the numerical objects can be generated without discretion.

SECTION 2. Declared Platform Context C

A platform-specific numerical instantiation begins by fixing the measurement context C.

For this paper, C is a record-accessibility interferometric context. This class includes delayed-choice, quantum-eraser, which-path-marking, wave-particle-duality, and related interferometric arrangements in which an interference visibility observable is evaluated while outcome-defining record-accessibility is varied.

The context C is not merely a background description. It is the root object of the numerical model. It determines which candidates are admissible, which distinctions are operationally meaningful, how η is calibrated, which baseline model class is relevant, what nuisance sources must be bounded, and what data are sufficient for adjudication.

2.1 Platform Choice

The declared platform class is: record-accessibility interferometry.

This class is appropriate because it contains the basic ingredients needed for an accessibility-critical residual test: a visibility observable, a record-accessibility control variable, a standard quantum/decoherence baseline, ordinary detector and nuisance mechanisms, and a possible critical accessibility regime in which a registered CBR residual may be evaluated.

The platform may be instantiated through a specific delayed-choice, quantum eraser, which-path marking, or wave-particle duality arrangement. The present paper defines the numerical structure required for such a platform. A later empirical or public-data paper may instantiate it with a particular dataset.

2.2 Measurement Context C

Let C include all registered features needed to define the model:

state preparation,

interferometric alternatives,

record channel,

which-path or record marker,

record-accessibility control,

visibility readout,

timing window or coincidence rule where applicable,

detector model,

calibration protocol,

data-inclusion rule,

visibility estimator,

validity gates,

and statistical rule.

A change to any of these objects after data interpretation changes the test object. It may define a new dossier version, but it cannot rescue or reinterpret the original registered instantiation.
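One way to make this lock checkable is to fingerprint the registered context. The following is a minimal sketch rather than part of the CBR standard: the field names, the placeholder values, and the choice of a SHA-256 digest over canonical JSON are all illustrative assumptions.

```python
import hashlib
import json


def context_fingerprint(context: dict) -> str:
    """Return a SHA-256 digest of a registered context description.

    Keys are sorted so the digest depends on content, not insertion order.
    """
    canonical = json.dumps(context, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


# Hypothetical registered context; every value is a placeholder label.
registered = {
    "state_preparation": "prep-v1",
    "record_accessibility_control": "marker-strength",
    "visibility_estimator": "fringe-contrast",
    "statistical_rule": "rule-v1",
}
locked_digest = context_fingerprint(registered)

# Altering any registered object after locking yields a different digest,
# which marks a new dossier version rather than a revision of the original.
altered = dict(registered, visibility_estimator="fringe-contrast-v2")
is_unchanged = context_fingerprint(dict(registered)) == locked_digest
is_new_version = context_fingerprint(altered) != locked_digest
```

Under these assumptions, a verdict would be reported together with the digest of the context it was computed against, so that any later change is visible as a version change.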

2.3 Why This Platform Is Suitable

The platform is suitable because it allows the core CBR empirical objects to be defined in a physically disciplined way.

The visibility observable supplies V_obs(η).

The record-accessibility control supplies η.

The ordinary quantum/decoherence account supplies the baseline model class 𝔅 and baseline curve V_ℬ(η).

Detector behavior, calibration uncertainty, phase drift, finite sampling, and environmental noise supply nuisance components for B_𝓝(η).

The critical accessibility region supplies I_c or N(η_c).

The registered CBR bridge supplies the predicted endpoint T_CBR.

Thus, the platform is suitable not because it proves CBR, but because it is rich enough to express the locked empirical structure.

2.4 Platform Limitation

This model does not apply to all quantum measurements.

It applies only to the declared platform class C. A different measurement context would require its own candidate-generation rule, burden proxy, accessibility bridge, baseline model class, nuisance envelope, endpoint statistic, and predicted endpoint.

This limitation is a strength rather than a weakness. CBR’s empirical claims must be context-fixed. A platform-specific instantiation should not pretend to be universal.

2.5 Definition — Declared Numerical Context

A declared numerical context C is a fixed record-accessibility interferometric context containing enough operational structure to generate 𝒜(C), ≃_C, ℛ_C^plat, η, I_c, 𝔅, V_ℬ(η), B_𝓝(η), T_c, and T_CBR under registered rules.

This definition makes C the root object of the numerical model.

2.6 Context Completeness Condition

A declared context is complete for numerical execution only if it supplies enough information to determine:

the preliminary candidate space Ω_C,

the admissibility filters generating 𝒜(C),

the operational equivalence relation ≃_C,

the domain of ℛ_C^plat,

the calibration of η,

the visibility-response map V(Φ, C),

the baseline model class 𝔅,

and the data-adequacy requirements for T_c.

If any of these are missing, the context may still be conceptually meaningful, but it is not yet numerically executable.
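The completeness condition can be read as a simple presence check over the eight required objects. The sketch below assumes hypothetical field names for those objects and treats a missing or `None` entry as "not supplied"; it checks presence only, not whether the supplied rule is adequate.

```python
# Hypothetical field names standing in for the objects listed in Section 2.6.
REQUIRED_OBJECTS = (
    "candidate_space",        # preliminary candidate space Ω_C
    "admissibility_filters",  # filters generating 𝒜(C)
    "equivalence_relation",   # operational equivalence ≃_C
    "burden_domain",          # domain of ℛ_C^plat
    "eta_calibration",        # calibration of η
    "visibility_map",         # visibility-response map V(Φ, C)
    "baseline_class",         # baseline model class 𝔅
    "data_adequacy",          # data-adequacy requirements for T_c
)


def missing_objects(context: dict) -> list:
    """List the required objects that the declared context does not supply."""
    return [name for name in REQUIRED_OBJECTS if context.get(name) is None]


def is_numerically_executable(context: dict) -> bool:
    """A context passes this check only if nothing required is missing."""
    return not missing_objects(context)


# A partially specified context: conceptually meaningful, not yet executable.
partial_context = {
    "candidate_space": "Omega_C-v1",
    "admissibility_filters": ["context", "instrument"],
    "eta_calibration": "calibration-v1",
}
gaps = missing_objects(partial_context)
```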

2.7 Transition

Once C is fixed, the paper can construct the admissible candidate class 𝒜(C). Without a constructive candidate class, the burden functional would have no well-defined domain.

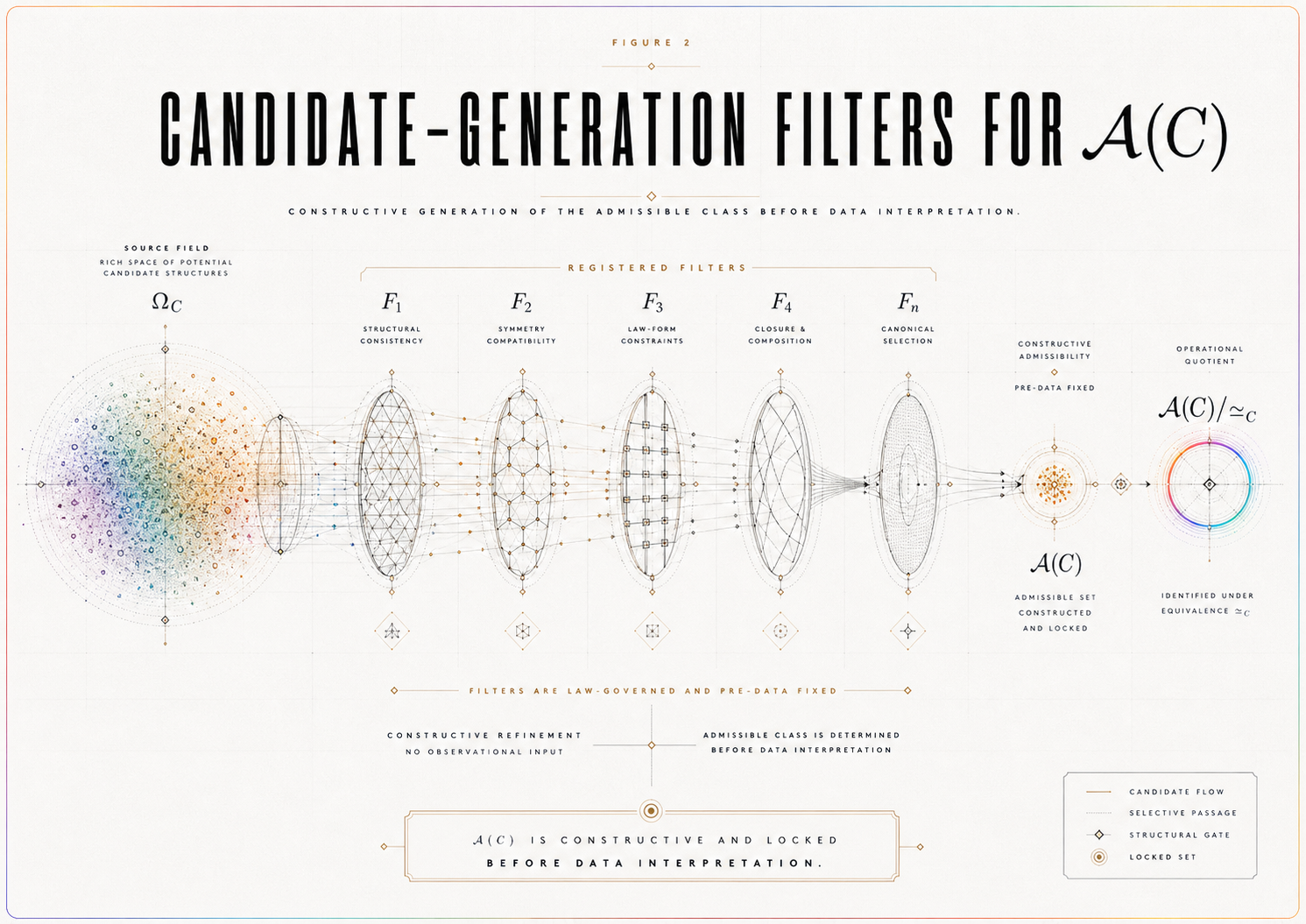

SECTION 3. Candidate-Generation Rule for 𝒜(C)

Let 𝒜(C) denote the platform-generated admissible candidate class for the declared context C.

The purpose of this section is to make 𝒜(C) constructive rather than nominal. The candidate class cannot be a vague set of possible outcomes. It must be generated by registered filters applied to a declared platform candidate space.

3.1 Candidate Space and Admissibility

Let Ω_C denote the preliminary platform candidate space: the set of candidate descriptions that can be formulated for the declared context C before admissibility filters are applied.

A candidate Φ ∈ Ω_C belongs to 𝒜(C) only if it satisfies all registered admissibility filters.

The admissible class is therefore not:

all imaginable possibilities,

all mathematically writable alternatives,

or all post hoc candidate descriptions compatible with the observed result.

It is the class of candidates that survive the registered constraints of the context before data interpretation.

3.2 Candidate Filters

A candidate Φ is admissible only if it satisfies the following filters.

Context compatibility.

Φ must be defined within the fixed measurement context C.

Instrument compatibility.

Φ must respect the registered preparation, measurement, record, and visibility-readout structure.

Record-structure compatibility.

Φ must specify how outcome-defining record information is represented within the platform.

Visibility-response definability.

Φ must determine, or be compatible with, a defined visibility response V_Φ(η).

Operational evaluability.

Φ must be evaluable by the registered endpoint statistic and statistical rule.

Born-discipline constraint.

Φ must not arbitrarily violate the ensemble-level quantum probability structure unless the instantiation explicitly registers a scoped deviation.

Decoherence-baseline compatibility.

Φ must respect the registered standard quantum/decoherence baseline structure except where the CBR instantiation explicitly predicts a residual.

Burden-evaluability.

Φ must be evaluable by ℛ_C^plat.

Non-post-hoc definability.

Φ must be specified before data interpretation.

These filters are not decorative. They prevent 𝒜(C) from becoming an adjustable possibility space.

3.3 Candidate-Generation Rule

The candidate-generation rule is:

𝒜(C) = {Φ ∈ Ω_C : F_i(Φ, C) = 1 for every registered admissibility filter F_i}.

Equivalently, a candidate is admissible only if it passes all registered filters:

Φ ∈ 𝒜(C) ⇔ Φ ∈ Ω_C and F₁(Φ, C) = ⋯ = F_n(Φ, C) = 1.

This makes 𝒜(C) a generated object, not an interpretive label.
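The generation rule can be sketched as a conjunction of boolean filters over Ω_C. In the example below the `Candidate` fields, the three toy filters, and the candidate names are hypothetical; a real instantiation would register all of the filters in Section 3.2 with platform-specific definitions.

```python
from typing import Callable, Iterable, List, NamedTuple


class Candidate(NamedTuple):
    name: str
    defined_in_context: bool
    has_visibility_response: bool
    registered_before_data: bool


Filter = Callable[[Candidate], bool]

# Three of the registered filters F_i, reduced to toy boolean checks.
FILTERS: List[Filter] = [
    lambda phi: phi.defined_in_context,
    lambda phi: phi.has_visibility_response,
    lambda phi: phi.registered_before_data,
]


def generate_admissible(omega_c: Iterable[Candidate],
                        filters: List[Filter]) -> List[Candidate]:
    """Keep the candidates of Ω_C for which every filter returns True."""
    return [phi for phi in omega_c if all(f(phi) for f in filters)]


omega_c = [
    Candidate("phi_a", True, True, True),
    Candidate("phi_b", True, False, True),   # no defined visibility response
    Candidate("phi_c", True, True, False),   # proposed after data inspection
]
admissible = generate_admissible(omega_c, FILTERS)
admissible_names = [phi.name for phi in admissible]
```

Because the filter list is fixed before the candidates are evaluated, membership in 𝒜(C) is a computed result rather than a judgment made per candidate.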

3.4 Candidate Evaluability Condition

The generated candidate class must be evaluable.

For every Φ ∈ 𝒜(C), the following must be defined:

ℛ_C^plat(Φ; η),

V_Φ(η) or the rule by which Φ determines visibility,

the operational equivalence class of Φ under ≃_C,

and the endpoint contribution of Φ under the registered endpoint functional.

If any candidate admitted into 𝒜(C) cannot be evaluated by the burden proxy or visibility-response map, then the candidate-generation rule is incomplete.

3.5 Operational Equivalence ≃_C

The operational equivalence relation ≃_C identifies candidates that differ formally but not operationally within the declared test.

Define:

Φ₁ ≃_C Φ₂

if Φ₁ and Φ₂ are indistinguishable under the registered observables, uncertainty convention, endpoint statistic, and statistical rule.

For the present platform, a sufficient operational-equivalence criterion is:

Φ₁ ≃_C Φ₂ if T_c(Φ₁) and T_c(Φ₂) are indistinguishable under the registered statistical rule.

If the endpoint is morphology-sensitive, operational equivalence must also include morphology equivalence under the registered comparison rule.

The selection object is therefore 𝒜(C)/≃_C, not the unquotiented candidate list.
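As a rough illustration of forming 𝒜(C)/≃_C, candidates can be grouped by their endpoint values. The sketch assumes a hypothetical registered resolution and bins endpoints on that grid; binning is used here in place of a pairwise tolerance test because pairwise closeness is not transitive and so would not define equivalence classes. A real dossier would substitute its registered statistical rule.

```python
from collections import defaultdict


def quotient_by_endpoint(endpoints: dict, resolution: float) -> list:
    """Group candidate names whose endpoint values fall in the same bin.

    `endpoints` maps candidate name -> endpoint value T_c(Φ).
    `resolution` is the (assumed) registered bin width.
    """
    classes = defaultdict(list)
    for name, t_value in endpoints.items():
        classes[round(t_value / resolution)].append(name)
    # Return classes ordered by bin index, with members sorted by name.
    return [sorted(members) for _, members in sorted(classes.items())]


# Illustrative endpoint values; phi_a and phi_b are operationally equivalent.
endpoints = {"phi_a": 0.10, "phi_b": 0.11, "phi_c": 0.30}
classes = quotient_by_endpoint(endpoints, resolution=0.05)
```

With these illustrative numbers, minimization would run over two equivalence classes rather than three candidates.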

3.6 Candidate-Class Lock Rule

The candidate class cannot be expanded, narrowed, re-filtered, or reinterpreted after data interpretation.

If new filters are added, old filters are removed, or candidate definitions are changed after observing V_obs(η) or the residual r(η), the original numerical instantiation has not been preserved. A new dossier version has been created.

This rule prevents candidate engineering after the outcome.

Proposition 1 — Candidate-Class Constructibility

For a platform-specific CBR instantiation to be numerically adjudicable, 𝒜(C) must be generated by registered admissibility filters applied to a declared platform candidate space Ω_C before data interpretation.

Proof Sketch

The burden proxy ℛ_C^plat requires a domain. If 𝒜(C) is not generated by registered rules, then the domain of minimization can be changed after the result. If the domain can be changed after the result, the selection rule is not exposed to failure. Therefore, numerical adjudication requires a pre-data candidate-generation rule.

Corollary — Candidate Evaluability

A generated candidate class is numerically complete only if every candidate Φ ∈ 𝒜(C) is evaluable by ℛ_C^plat, classifiable under ≃_C, and connected to the registered visibility-response map.

Proof Sketch

A candidate that cannot be evaluated by ℛ_C^plat cannot enter the minimization rule. A candidate that cannot be classified under ≃_C cannot be compared at the operational level. A candidate that cannot be connected to visibility cannot contribute to T_CBR. Therefore, candidate admissibility requires evaluability, not merely formal inclusion.

3.7 Transition

With 𝒜(C) defined as a generated and evaluable admissible class, the next step is to define the computable burden proxy that orders candidates within 𝒜(C)/≃_C.

SECTION 4. Computable Platform Burden Proxy ℛ_C^plat

CBR’s canonical law-form requires a realization-burden functional:

Φ∗_C ∈ argmin_{Φ ∈ 𝒜(C)} ℛ_C(Φ), up to ≃_C.

For a platform-specific numerical instantiation, this paper defines a computable burden proxy:

ℛ_C^plat(Φ; η).

This proxy is not claimed to be the final universal burden functional for CBR. It is the registered platform-level functional used to generate a numerical prediction in the declared context C.

The proxy must satisfy five requirements.

It must be defined on 𝒜(C)/≃_C.

It must be computable before data interpretation.

It must allow η to enter nontrivially.

It must be stable under the registered coefficient and normalization rules.

It must generate a predicted endpoint T_CBR through a registered bridge.

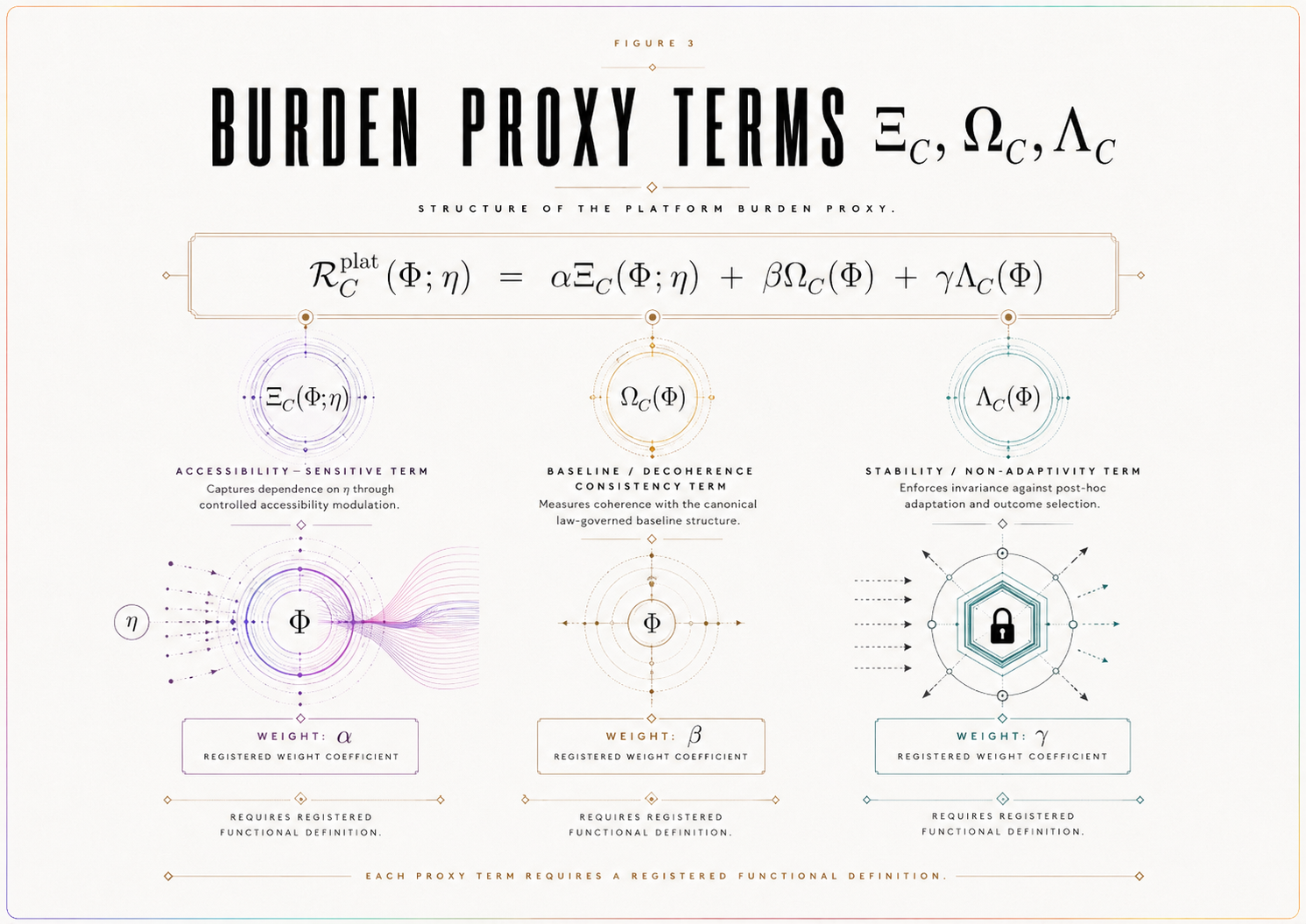

4.1 Proposed Functional Form

A platform-level burden proxy may be represented as:

ℛ_C^plat(Φ; η) = αΞ_C(Φ; η) + βΩ_C(Φ) + γΛ_C(Φ).

Here:

Ξ_C(Φ; η) is the accessibility burden term.

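Ω_C(Φ) is the baseline/decoherence consistency term. As a functional of Φ, it is distinct from the preliminary candidate space Ω_C of Section 3, which shares the symbol.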

Λ_C(Φ) is the stability, non-adaptivity, or complexity-control term.

α, β, γ are registered coefficients.

This expression is a platform-specific modeling form. It should not be presented as the final universal CBR law unless a later paper proves that status.

4.2 Principle — Burden-Term Definition Obligation

A platform-specific burden proxy ℛ_C^plat is not numerically complete merely because its terms are named. Each term Ξ_C, Ω_C, and Λ_C must be defined by a registered functional rule with a specified domain, range, normalization convention, coefficient rule, and data-independence condition. If any term cannot be evaluated for every Φ ∈ 𝒜(C), the numerical instantiation is incomplete rather than adjudicative.

This principle is essential. Without it, ℛ_C^plat would be a notation scheme rather than a computable object.

4.3 Accessibility Term Ξ_C

The term Ξ_C(Φ; η) represents the part of the burden proxy that changes as record-accessibility changes.

It is the term through which η enters the realization-burden structure nontrivially.

For this term to be admissible, the paper must specify:

the domain of Ξ_C,

the range of Ξ_C,

how η is calibrated,

how η enters Ξ_C,

how Ξ_C changes candidate ordering,

whether Ξ_C predicts a magnitude, morphology, or transition behavior,

how Ξ_C is normalized relative to the other terms,

and how its contribution is locked before data interpretation.

Without this term, η may be descriptive but not dynamically relevant to the registered CBR instantiation.

4.4 Baseline / Decoherence Consistency Term Ω_C

The term Ω_C(Φ) penalizes candidates that violate the registered ordinary baseline structure without a declared CBR residual.

Its role is not to force CBR to reduce to standard quantum/decoherence behavior. Its role is to prevent arbitrary residual fitting.

For this term to be admissible, the paper must specify:

the domain of Ω_C,

the range of Ω_C,

which baseline constraints it enforces,

how it interacts with the baseline model class 𝔅,

how ordinary decoherence consistency is represented,

how deviations are allowed only when registered as a CBR endpoint,

and how Ω_C is normalized.

A candidate may predict an accessibility-critical residual only if that residual is registered as part of the instantiation and later tested against 𝔅, B_𝓝(η), and Θ_c.

Thus, Ω_C enforces ordinary-physics discipline without eliminating the possibility of a CBR-specific endpoint.

4.5 Stability / Non-Adaptivity Term Λ_C

The term Λ_C(Φ) penalizes instability, excessive flexibility, or post hoc adjustability.

A candidate should not become favorable merely because it can be tuned to match whatever residual appears. Λ_C encodes the requirement that the candidate’s structure be stable under the registered modeling assumptions.

For this term to be admissible, the paper must specify:

the domain of Λ_C,

the range of Λ_C,

which forms of flexibility it penalizes,

how it distinguishes legitimate platform parameters from post hoc tuning,

how it is normalized,

and how it remains independent of the observed residual.

This term helps prevent overfitting and failure rescue.

4.6 Coefficient Fixity

The coefficients α, β, γ must be fixed by registered rules before data interpretation.

Permitted sources include:

normalization conventions,

platform calibration,

prior theoretical commitments,

simulation conventions,

sensitivity constraints,

or declared illustrative modeling assumptions.

Forbidden sources include:

outcome fitting,

post hoc residual matching,

coefficient adjustment after failure,

or tuning to produce support.

If coefficient values are illustrative or simulated, they must be labeled as such. They must not be presented as measured or empirically validated.
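Coefficient fixity can be mirrored in code by making the coefficient record immutable and tying it to a declared source. The class name, source labels, and numeric values below are hypothetical; the sketch only shows that post-registration changes and forbidden sources can be rejected mechanically.

```python
from dataclasses import dataclass, FrozenInstanceError

# Labels for the permitted sources listed above (names are assumptions).
PERMITTED_SOURCES = {
    "normalization_convention",
    "platform_calibration",
    "prior_theoretical_commitment",
    "simulation_convention",
    "sensitivity_constraint",
    "illustrative_assumption",
}


@dataclass(frozen=True)
class Coefficients:
    alpha: float
    beta: float
    gamma: float
    source: str

    def __post_init__(self):
        # Reject any source that is not on the registered list.
        if self.source not in PERMITTED_SOURCES:
            raise ValueError(f"unregistered coefficient source: {self.source}")

    @property
    def illustrative(self) -> bool:
        """True when the values must be labeled as illustrative."""
        return self.source == "illustrative_assumption"


coeffs = Coefficients(1.0, 1.0, 1.0, source="illustrative_assumption")

try:
    coeffs.alpha = 2.0          # adjustment after registration
    mutation_blocked = False
except FrozenInstanceError:
    mutation_blocked = True

try:
    Coefficients(1.0, 1.0, 1.0, source="outcome_fitting")
    forbidden_accepted = True
except ValueError:
    forbidden_accepted = False
```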

4.7 Selection Rule

The platform selection rule is:

Φ∗_C(η) ∈ argmin_{Φ ∈ 𝒜(C)} ℛ_C^plat(Φ; η), up to ≃_C.

The dependence on η does not mean that the model may adjust itself after the data are known. It means that, for each registered value of record-accessibility, the platform proxy orders admissible candidates according to a fixed functional rule.
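The selection rule can be illustrated with a toy burden proxy. Everything numerical in this sketch is an assumption made for illustration: the quadratic form of the accessibility term, the `record_weight` descriptor, the per-candidate values of the consistency and stability terms, and the unit coefficients. None of these is proposed as the registered form of Ξ_C, Ω_C, or Λ_C.

```python
from typing import List, NamedTuple


class Candidate(NamedTuple):
    name: str
    record_weight: float     # toy descriptor in [0, 1]
    baseline_penalty: float  # toy value of the consistency term Ω_C(Φ)
    complexity: float        # toy value of the stability term Λ_C(Φ)


def xi(phi: Candidate, eta: float) -> float:
    """Toy accessibility term: mismatch between record weight and η."""
    return (phi.record_weight - eta) ** 2


def burden(phi: Candidate, eta: float,
           alpha: float, beta: float, gamma: float) -> float:
    """ℛ_C^plat(Φ; η) = α Ξ_C(Φ; η) + β Ω_C(Φ) + γ Λ_C(Φ)."""
    return (alpha * xi(phi, eta)
            + beta * phi.baseline_penalty
            + gamma * phi.complexity)


def select(candidates: List[Candidate], eta: float,
           alpha: float = 1.0, beta: float = 1.0, gamma: float = 1.0) -> Candidate:
    """Return one minimizer; exact ties resolve to the first listed candidate."""
    return min(candidates, key=lambda phi: burden(phi, eta, alpha, beta, gamma))


candidates = [
    Candidate("low", 0.0, 0.0, 0.0),
    Candidate("mid", 0.5, 0.10, 0.05),
    Candidate("high", 1.0, 0.0, 0.0),
]
selected = {eta: select(candidates, eta).name for eta in (0.2, 0.5, 0.8)}
```

In this toy setting the minimizer changes with η while the functional rule and coefficients stay fixed, which is the sense in which the η-dependence is not an after-the-fact adjustment.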

4.8 Burden-Proxy Lock Rule

The terms Ξ_C, Ω_C, Λ_C, the coefficients α, β, γ, normalization rules, candidate domain, and data-independence conditions must be fixed before endpoint evaluation.

Any change to the burden proxy after data interpretation creates a new numerical instantiation. It does not rescue or revise the verdict of the prior version.

Proposition 2 — Burden-Proxy Computability

A platform-specific CBR model is numerically executable only if its burden proxy ℛ_C^plat is defined on 𝒜(C)/≃_C, computable under registered rules, and fixed before data interpretation.

Proof Sketch

The selection rule requires a functional ordering over admissible candidates. If the functional is undefined, the selection rule cannot be evaluated. If the functional is not computable, the model cannot generate a numerical endpoint. If the functional is changed after data interpretation, the model is no longer locked. Therefore, numerical execution requires a fixed computable burden proxy.

Corollary — Named Terms Are Insufficient

A platform burden proxy whose terms are named but not mathematically evaluable is incomplete.

Proof Sketch

A term such as Ξ_C, Ω_C, or Λ_C contributes to ℛ_C^plat only if it can be evaluated for admissible candidates. If it cannot be evaluated, then the burden proxy cannot order 𝒜(C)/≃_C. Therefore, named but unevaluable terms do not yet define a numerical model.

4.9 Transition

Once the burden proxy is defined, the model must state how record-accessibility η enters that proxy and how the resulting candidate selection produces a visibility-level prediction.

SECTION 5. Accessibility Bridge: η → ℛ_C^plat → Δ_CBR(η)

The accessibility bridge is the central connection between the CBR law-form and the measurable endpoint.

The bridge must show how record-accessibility η enters ℛ_C^plat, how the minimizer Φ∗_C(η) determines a predicted visibility response, and how that response generates a predicted residual Δ_CBR(η).

Without this bridge, the platform model may be formally defined, but it is not yet empirically exposed.

5.1 Operational Definition of η

Let η denote the operational record-accessibility variable.

η is not consciousness, subjective awareness, observer knowledge, human attention, or metaphysical observation. It is a platform-calibrated measure of accessible outcome-defining record information.

In a record-accessibility interferometric context, η may be instantiated through:

which-path distinguishability,

record-retention probability,

marker strength,

eraser accessibility,

path-knowledge parameter,

coincidence-conditioned accessibility,

or another registered accessibility proxy.

The chosen proxy must be specified before residual evaluation.

5.2 η Calibration

The platform model must define:

the range of η,

the resolution of η,

the uncertainty in η,

the calibration method,

the sampling requirements,

the relationship between η and the physical accessibility-control mechanism,

and the effect of η uncertainty on T_CBR and T_c.

If η is only qualitatively described, the instantiation is incomplete. If η is reconstructed after seeing the residual, the analysis is exploratory.

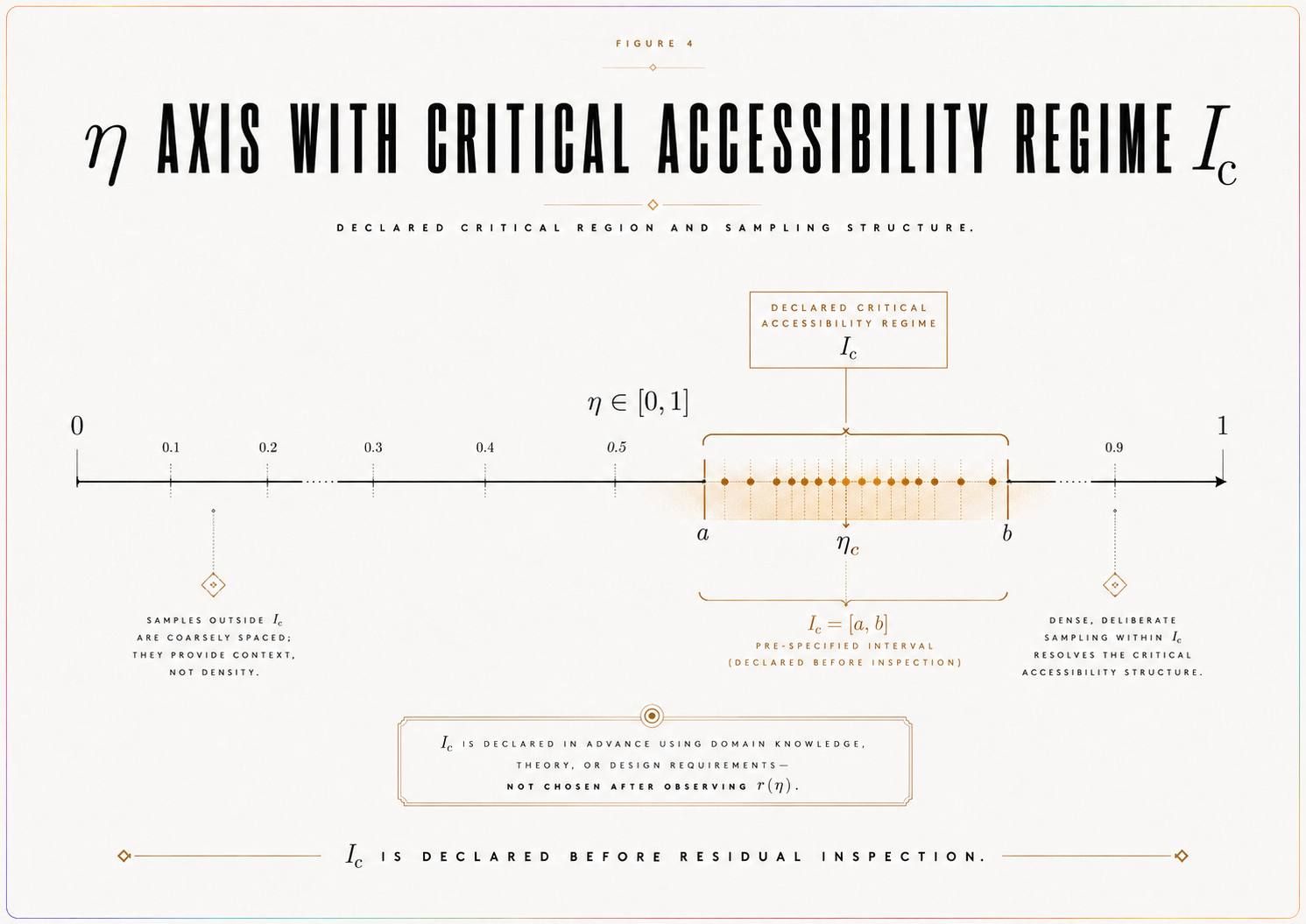

5.3 Critical Accessibility Regime

The model must declare a critical accessibility regime:

I_c = [η₁, η₂]

or:

N(η_c) = {η : |η − η_c| ≤ δ}.

The critical regime is the region where the platform model predicts the accessibility-sensitive endpoint to be strongest, most identifiable, or most decision-relevant.

The justification may be theoretical, numerical, bridge-based, or platform-specific, but it must be fixed before residual inspection.

5.4 Bridge Equation

The numerical bridge is:

η → ℛ_C^plat(Φ; η) → Φ∗_C(η) → V_CBR(η) → Δ_CBR(η).

Define the CBR-predicted visibility response:

V_CBR(η) = V(Φ∗_C(η), C),

where V is the registered visibility-response map for the platform.

Define the predicted residual:

Δ_CBR(η) = V_CBR(η) − V_ℬ(η).

Then define the predicted endpoint:

T_CBR = 𝒯[Δ_CBR(η), η ∈ I_c],

where 𝒯 is the registered endpoint functional.

This is the core numerical pathway of the paper.
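The last three steps of the pathway can be sketched numerically. The example makes several assumptions that are not part of the paper: the baseline is the idealized pure-state complementarity curve V = √(1 − η²), the selection step is collapsed into a single hypothetical response function with a Gaussian dip near η_c = 0.6, the endpoint functional 𝒯 is the maximum absolute residual over a grid on I_c = [0.5, 0.7], and Θ_c = 0.01 is an arbitrary illustrative threshold.

```python
import math


def v_baseline(eta: float) -> float:
    """Illustrative baseline V_ℬ(η): ideal pure-state complementarity."""
    return math.sqrt(1.0 - eta ** 2)


def v_cbr(eta: float, eta_c: float = 0.6,
          amplitude: float = 0.03, width: float = 0.05) -> float:
    """Hypothetical V_CBR(η): baseline minus a Gaussian dip centered at η_c."""
    dip = amplitude * math.exp(-((eta - eta_c) ** 2) / (2.0 * width ** 2))
    return v_baseline(eta) - dip


def delta_cbr(eta: float) -> float:
    """Predicted residual Δ_CBR(η) = V_CBR(η) − V_ℬ(η)."""
    return v_cbr(eta) - v_baseline(eta)


def endpoint(residual, grid) -> float:
    """Assumed endpoint functional 𝒯: maximum absolute residual on the grid."""
    return max(abs(residual(eta)) for eta in grid)


# Critical regime I_c = [0.5, 0.7], sampled at 21 points (illustrative).
i_c_grid = [0.5 + 0.01 * k for k in range(21)]
t_cbr = endpoint(delta_cbr, i_c_grid)

theta_c = 0.01                       # illustrative decision threshold Θ_c
exceeds_threshold = t_cbr > theta_c
```

A different registered functional, such as an integrated or morphology-weighted residual, would replace `endpoint` without changing the rest of the pathway.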

5.5 Computability Condition

The bridge η → ℛ_C^plat → Φ∗_C(η) → V_CBR(η) → Δ_CBR(η) → T_CBR is complete only if every arrow is defined by a registered map. If any arrow is interpretive rather than functional, the model remains schematic rather than numerically executable.

This condition prevents the bridge from functioning merely as a conceptual diagram. A numerical instantiation must specify the maps, not only their names.

5.6 Bridge Completeness

The accessibility bridge is complete only if it specifies:

how η enters ℛ_C^plat,

how η can change candidate ordering,

how Φ∗_C(η) determines V_CBR(η),

how Δ_CBR(η) is generated,

which endpoint functional 𝒯 is applied,

how T_CBR is computed,

and what absence of the endpoint would count as registered failure.

If any of these are missing, the bridge is incomplete.

5.7 Bridge Nontriviality

η must enter the burden proxy nontrivially.

A merely cosmetic η-dependence is insufficient. The model must state whether η changes candidate ordering, endpoint magnitude, endpoint morphology, or detectability status.

If η does not affect ℛ_C^plat or T_CBR, then the model does not generate an accessibility-critical prediction.
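A simple numerical check can separate cosmetic from nontrivial η-dependence. The sketch assumes toy candidates represented by a single number and two hypothetical burden functions; it tests only the candidate-ordering criterion, not endpoint magnitude, morphology, or detectability.

```python
def burden_varies_with_eta(burden, candidates, eta_grid, tol=1e-12) -> bool:
    """True if at least one candidate's burden changes across the η grid."""
    for phi in candidates:
        values = [burden(phi, eta) for eta in eta_grid]
        if max(values) - min(values) > tol:
            return True
    return False


def ordering_changes_with_eta(burden, candidates, eta_grid) -> bool:
    """True if the minimizing candidate differs for some pair of η values."""
    winners = {min(candidates, key=lambda phi: burden(phi, eta))
               for eta in eta_grid}
    return len(winners) > 1


candidates = [0.0, 1.0]          # toy candidates labeled by a record weight
eta_grid = [0.1, 0.5, 0.9]


def coupled_burden(phi, eta):
    """η interacts with the candidate, so the ordering can change."""
    return (phi - eta) ** 2


def offset_burden(phi, eta):
    """η shifts every candidate equally: a cosmetic dependence."""
    return phi ** 2 + eta


coupled_is_nontrivial = ordering_changes_with_eta(coupled_burden, candidates, eta_grid)
offset_varies = burden_varies_with_eta(offset_burden, candidates, eta_grid)
offset_is_nontrivial = ordering_changes_with_eta(offset_burden, candidates, eta_grid)
```

The offset case shows why a burden that merely varies with η is not enough: the values change, but the selected candidate does not.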

Proposition 3 — Accessibility-Bridge Completeness

A platform-specific CBR instantiation generates a predicted accessibility-critical residual only if η enters ℛ_C^plat nontrivially, the selected candidate Φ∗_C(η) determines a registered visibility response V_CBR(η), and the predicted residual Δ_CBR(η) yields a computable endpoint T_CBR under the registered endpoint functional.

Proof Sketch

The accessibility-critical residual is an empirical endpoint, not the law itself. To generate that endpoint, the law-form must be connected to an observable. If η does not enter the burden proxy, accessibility variation cannot affect the predicted endpoint. If the selected candidate does not determine a visibility response, no residual can be computed. If no endpoint functional is registered, no prediction can be adjudicated. Therefore, bridge completeness requires nontrivial η entry, a visibility-response map, and a computable T_CBR.

Theorem 1 — Platform Executability

A declared CBR platform model is executable only if 𝒜(C) is constructively generated, ℛ_C^plat is computable on 𝒜(C)/≃_C, η enters ℛ_C^plat nontrivially, Φ∗_C(η) determines V_CBR(η), Δ_CBR(η) is defined relative to V_ℬ(η), and T_CBR is obtained by applying the registered endpoint functional to Δ_CBR(η) over I_c.

Proof Sketch

A platform model is executable only if each step from context to prediction is defined. The context C generates 𝒜(C). The burden proxy ℛ_C^plat orders candidates in 𝒜(C)/≃_C. Nontrivial η-dependence allows accessibility variation to affect the selection structure. The selected candidate must determine a visibility response V_CBR(η). The residual Δ_CBR(η) must be defined relative to the registered baseline V_ℬ(η). The endpoint T_CBR must then be computed by the registered endpoint functional over the declared critical regime. If any step is missing, the platform model remains schematic. Therefore, all listed conditions are required for executability.

5.8 Transition

The bridge defines the CBR-side prediction. The next required object is the ordinary baseline model class 𝔅, which determines what standard quantum/decoherence/nuisance physics can explain before any CBR-specific residual is considered.

SECTION 6. Baseline Model Class 𝔅

The baseline model class defines what ordinary quantum, decoherence, detector, calibration, sampling, and platform effects can explain before any CBR-specific residual is considered. It is therefore one of the central safeguards against false support.

CBR does not receive support by outperforming an idealized or artificially narrow baseline. A CBR residual becomes relevant only after ordinary physics has been given its strongest registered expression in the declared platform context.

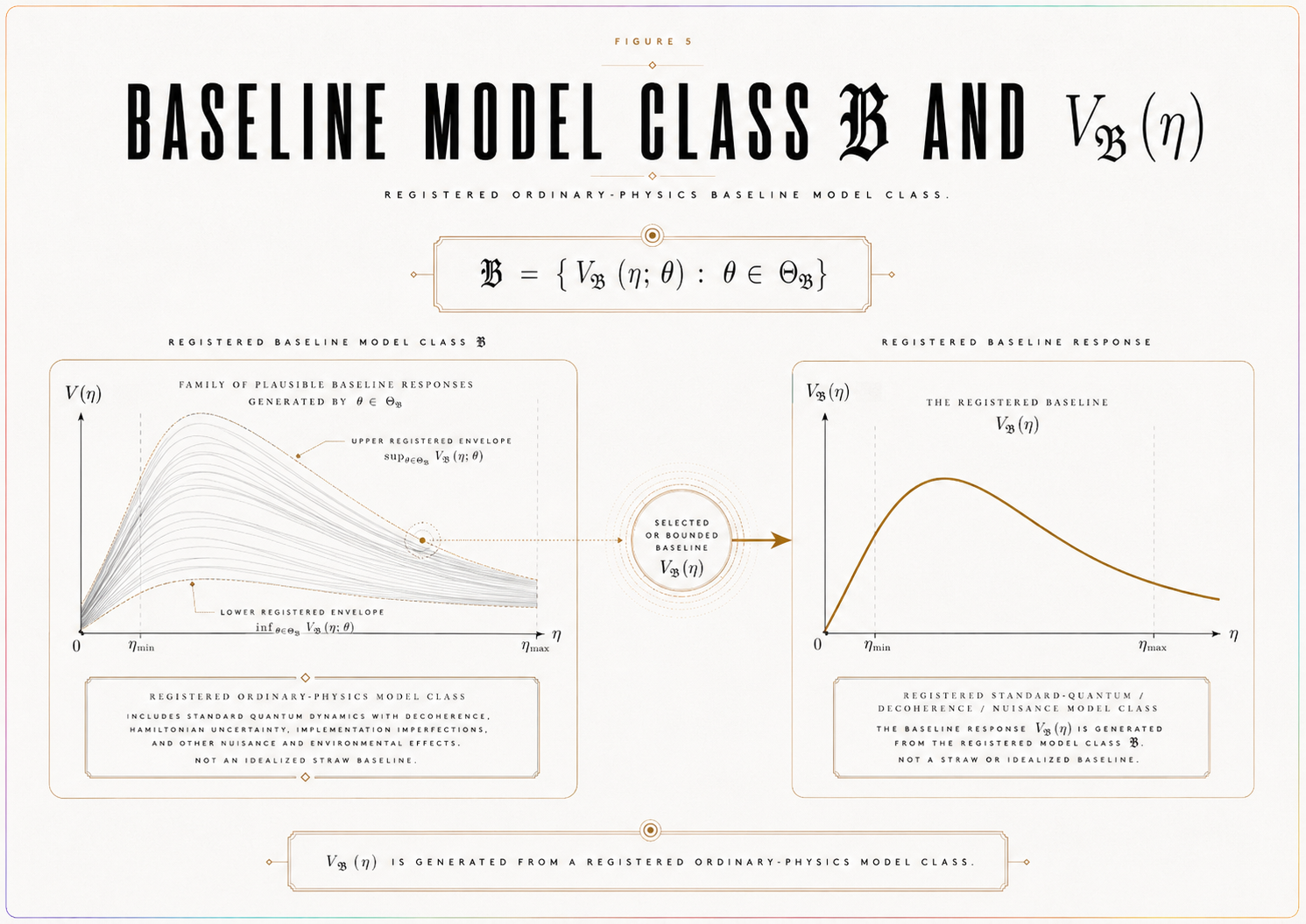

6.1 Definition

Let:

𝔅 = {V_ℬ(η; θ) : θ ∈ Θ_ℬ}

where Θ_ℬ is the registered ordinary-physics parameter space.

Each member of 𝔅 is a candidate baseline visibility function representing standard quantum/decoherence/nuisance-compatible behavior in the declared context C. The selected or bounded baseline visibility curve V_ℬ(η) must be obtained from 𝔅 by a registered selection, fitting, calibration, or bounding rule fixed before endpoint evaluation.

Thus, V_ℬ(η) is not an arbitrary curve. It is the baseline consequence of a registered model class.

6.2 Included Ordinary Effects

The baseline model class should include all ordinary effects that the platform can justify as relevant, including:

standard quantum visibility prediction,

decoherence,

detector inefficiency,

dark counts,

loss,

phase drift,

finite sampling,

calibration uncertainty,

alignment uncertainty,

visibility-estimator uncertainty,

environmental noise,

postselection effects where applicable,

and timing or coincidence-window effects where applicable.

The exact list is platform-specific. The rule is not that every possible effect must be included in every model. The rule is that no legitimate ordinary effect may be excluded merely to make a residual appear more CBR-relevant.

6.3 Baseline Guardrail

The baseline model class must be disciplined in both directions.

It must be broad enough to include legitimate standard quantum/decoherence/nuisance explanations.

It must not be so broad that it can absorb any possible residual by construction.

A baseline class that is too narrow creates false support.

A baseline class that is too elastic prevents possible failure.

The valid baseline class is therefore:

strong enough to protect against false attribution, but constrained enough to preserve adjudication.

6.4 Baseline Selection Rule

The dossier must specify how V_ℬ(η) is selected, fitted, calibrated, or bounded from 𝔅.

Permissible baseline-selection modes include:

fixed-parameter baseline,

calibrated-parameter baseline,

bounded-envelope baseline,

held-out-fit baseline,

control-regime-fit baseline,

or published-parameter baseline.

Whichever mode is used, it must be registered before endpoint evaluation.

The selection rule must specify:

the allowed parameter space Θ_ℬ,

which parameters are fixed, calibrated, fitted, or bounded,

which data, if any, may be used to fit baseline parameters,

whether held-out or control-region data are required,

how baseline uncertainty propagates into the nuisance envelope,

how baseline uncertainty is distinguished from nuisance uncertainty,

and when a candidate residual is considered absorbable by 𝔅.
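One permissible mode, the control-regime-fit baseline, can be sketched as follows. The one-parameter family V_ℬ(η; θ) = θ(1 − η), the parameter grid, and the control data are hypothetical placeholders chosen for illustration; they are not part of any registered platform model. The sketch shows only the discipline: θ is fitted on control-region data and then frozen before any critical-regime residual is computed.

```python
from typing import Sequence

def v_baseline(eta: float, theta: float) -> float:
    """Hypothetical baseline family V_B(eta; theta) = theta * (1 - eta)."""
    return theta * (1.0 - eta)

def fit_baseline(theta_grid: Sequence[float],
                 control_eta: Sequence[float],
                 control_vis: Sequence[float]) -> float:
    """Select theta from the registered grid by least squares on control data only."""
    def sse(theta: float) -> float:
        return sum((v - v_baseline(e, theta)) ** 2
                   for e, v in zip(control_eta, control_vis))
    return min(theta_grid, key=sse)

# Registered parameter space (assumed grid) and made-up control-region data.
theta_grid = [0.80, 0.85, 0.90, 0.95, 1.00]
control_eta = [0.0, 0.1, 0.2]
control_vis = [0.90, 0.81, 0.72]

theta_hat = fit_baseline(theta_grid, control_eta, control_vis)
```

Because the fit never touches data from I_c, the selected θ cannot be tuned against the residual it will later be compared with.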

6.5 Principle — Parameter Provenance

Every numerical quantity entering 𝔅, V_ℬ(η), B_𝓝(η), B_c, ε_detect, Θ_c, T_c, and T_CBR must be assigned a registered provenance label before data interpretation.

Permissible labels include:

measured,

published,

calibrated,

derived,

simulated,

illustrative,

assumed,

or required for future testing.

A quantity with unclear provenance cannot support adjudication. If a value is illustrative, it must not be presented as measured. If a value is simulated, it must not be presented as empirical. If a value is required for future testing, it must not be treated as already available.

This principle prevents numerical modeling from acquiring false empirical authority.
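A provenance registry can be sketched as a small lookup that refuses to proceed when a label is missing. The enum members mirror the labels listed above; the registry keys and the `unlabeled` helper are hypothetical names introduced for illustration.

```python
from enum import Enum
from typing import Dict, List, Optional

class Provenance(Enum):
    MEASURED = "measured"
    PUBLISHED = "published"
    CALIBRATED = "calibrated"
    DERIVED = "derived"
    SIMULATED = "simulated"
    ILLUSTRATIVE = "illustrative"
    ASSUMED = "assumed"
    REQUIRED_FOR_FUTURE_TESTING = "required for future testing"

def unlabeled(registry: Dict[str, Optional[Provenance]]) -> List[str]:
    """Return the names of quantities that lack a registered provenance label."""
    return sorted(name for name, label in registry.items() if label is None)

# Illustrative registry; the assignments are invented for the example.
registry = {
    "B_c": Provenance.CALIBRATED,
    "eps_detect": Provenance.SIMULATED,
    "T_CBR": Provenance.DERIVED,
    "Theta_c": None,  # label missing, so this quantity cannot support adjudication
}
missing = unlabeled(registry)
```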

6.6 Baseline Anti-Overfitting Rule

The baseline model class 𝔅 may not be expanded, refit, re-parameterized, or reinterpreted after inspecting the residual curve:

r(η) = V_obs(η) − V_ℬ(η)

in a way that changes the registered verdict.

If a residual is absorbed only by adding new baseline terms, changing the fitting rule, expanding Θ_ℬ, altering the validation standard, or relabeling a CBR-like feature as ordinary behavior after data interpretation, then the original test object has changed.

A revised baseline may be scientifically useful. It may define a new dossier version. It does not rescue the prior version.

The locked rule is:

A new baseline creates a new test object. It does not save the old one.
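One way to make the locked rule mechanically checkable is to fingerprint the registered baseline objects before endpoint evaluation. The paper does not prescribe hashing; the sketch below is an assumed mechanism, and the dossier fields are hypothetical.

```python
import hashlib
import json

def dossier_fingerprint(dossier: dict) -> str:
    """SHA-256 digest of a canonical JSON serialization of the registered objects."""
    canonical = json.dumps(dossier, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

# Hypothetical registered baseline entry.
locked = {"version": "v1", "theta_space": [0.8, 1.0], "selection": "control-regime-fit"}

# Expanding the parameter space after residual inspection changes the object.
revised = dict(locked, theta_space=[0.7, 1.0])

same_object = dossier_fingerprint(locked) == dossier_fingerprint(dict(locked))
new_object = dossier_fingerprint(locked) != dossier_fingerprint(revised)
```

A changed fingerprint does not say whether the revision is scientifically sound. It records only that the verdict now attaches to a different dossier version.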

6.7 Definition — Baseline Degeneracy

A predicted residual Δ_CBR(η) is baseline-degenerate if there exists an allowed baseline member:

V_ℬ(η; θ′) ∈ 𝔅

such that the predicted CBR residual becomes indistinguishable from ordinary baseline behavior under the registered endpoint functional and statistical rule.

Equivalently, Δ_CBR(η) is baseline-degenerate if the CBR-predicted endpoint can be absorbed by an allowed change of baseline parameters without violating the registered baseline-selection rule.

A baseline-degenerate predicted endpoint cannot support CBR, even if it is mathematically defined. It may still motivate better modeling, but it is not identifiable as a CBR endpoint.

6.8 Baseline Adequacy Condition

A baseline class is adequate only if it can answer the following question in the declared context:

What visibility behavior should be expected across I_c or N(η_c) if no CBR-specific accessibility-critical residual is present?

If 𝔅 cannot answer this question with sufficient precision, then the test cannot adjudicate CBR support or failure. A positive-looking residual may be ordinary under-modeling. A null result may be uninterpretable because the expected baseline itself is not stable.

Proposition 4 — Baseline Adequacy

A platform-specific CBR residual test is baseline-adequate only if 𝔅 is registered before endpoint evaluation, includes the strongest ordinary platform explanations that can be justified, supplies or bounds V_ℬ(η) across the declared critical regime, uses provenance-labeled numerical quantities, remains non-adaptive after residual inspection, and does not render the predicted CBR endpoint baseline-degenerate.

Proof Sketch

The accessibility-critical residual is defined relative to V_ℬ(η). If the baseline is weak, a residual may appear only because ordinary physics was under-modeled. If the baseline is too elastic, no residual could ever survive. If the baseline is changed after the residual is known, the verdict applies to a different object. If the predicted residual is degenerate with an allowed baseline parameter shift, the endpoint cannot be identified as CBR-relevant. Therefore, baseline adequacy requires a strong, bounded, registered, provenance-labeled, non-adaptive, non-degenerate model class.

6.9 Transition

The baseline model class defines ordinary expected visibility. The next object defines how much ordinary deviation around that baseline can be absorbed without counting as CBR support.

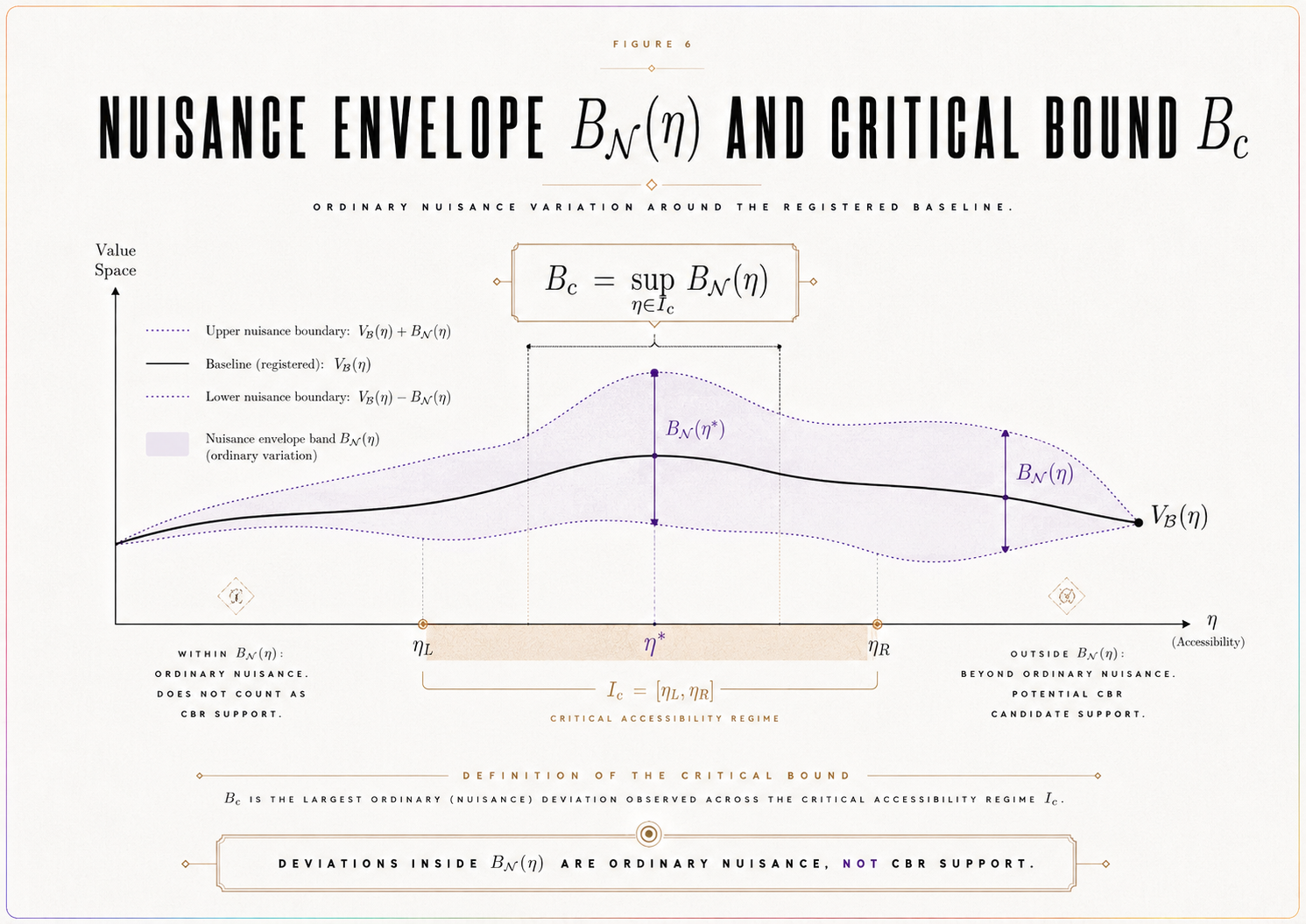

SECTION 7. Nuisance Envelope and Critical Bound

The nuisance envelope specifies the ordinary non-CBR deviations from the registered baseline that the platform can absorb. It prevents CBR from treating detector drift, calibration uncertainty, finite sampling, phase instability, or model uncertainty as evidence for a realization-law endpoint.

The nuisance envelope is therefore not an afterthought. It is part of the empirical object being tested.

7.1 Definition

Let B_𝓝(η) be the registered nuisance envelope around V_ℬ(η).

It bounds deviations attributable to ordinary non-CBR sources in the declared platform context. These may include:

detector drift,

calibration uncertainty,

decoherence-model uncertainty,

phase instability,

sampling variation,

background counts,

alignment errors,

η uncertainty,

visibility-estimator uncertainty,

timing-window uncertainty,

postselection uncertainty,

and finite-count effects.

A residual inside the nuisance envelope is not CBR support. It is ordinary platform uncertainty.

7.2 Relation to Baseline Uncertainty

The nuisance envelope must include or explicitly coordinate with uncertainty in the baseline model class 𝔅.

If baseline uncertainty is represented separately from B_𝓝(η), the dossier must specify how both enter the decision rule. If baseline uncertainty is absorbed into B_𝓝(η), the envelope must state that explicitly.

The test cannot double-count uncertainty to make failure impossible, and it cannot omit uncertainty to manufacture support.

7.3 Critical Nuisance Bound

For endpoint adjudication inside the declared critical regime, the dossier must define a critical nuisance bound.

A standard form is:

B_c = sup_{η ∈ I_c} B_𝓝(η).

If the endpoint uses N(η_c) rather than I_c, then:

B_c = sup_{η ∈ N(η_c)} B_𝓝(η).

For normalized, integrated, model-comparison, or morphology-sensitive endpoints, B_c must be defined in the corresponding registered endpoint units.

This condition is essential. A nuisance bound expressed in pointwise visibility units cannot automatically be used for an integrated, normalized, or morphology-sensitive endpoint unless a registered transformation maps it into the same endpoint space.

7.4 Principle — Endpoint-Units Consistency

B_c, ε_detect, Θ_c, T_c, and T_CBR must be expressed in the same registered endpoint units.

A pointwise visibility nuisance bound cannot adjudicate an integrated, normalized, morphology-sensitive, or model-comparison endpoint unless a registered transformation maps it into the same endpoint space.

If the endpoint statistic is:

a supremum residual, then B_c, ε_detect, Θ_c, T_c, and T_CBR must be expressed in supremum-residual units;

a normalized statistic, they must be expressed in normalized units;

an integrated statistic, they must be expressed in integrated-endpoint units;

a morphology-sensitive statistic, they must be expressed in the registered morphology-comparison units;

a model-comparison statistic, they must be expressed in the registered model-comparison scale.

Without endpoint-units consistency, the comparison among T_c, T_CBR, and Θ_c is not adjudicative.

7.5 Nuisance Construction Rule

The dossier must specify how B_𝓝(η) is constructed.

It must state:

which nuisance sources are included,

how each source is estimated or bounded,

the provenance label for each nuisance quantity,

how uncertainties are propagated,

whether nuisance terms are added linearly, in quadrature, by envelope maximization, or by another registered rule,

what confidence or error-control convention is used,

how η uncertainty enters the envelope,

how baseline uncertainty interacts with the envelope,

and how B_c is derived from B_𝓝(η) in endpoint-compatible units.

If these rules are missing, the nuisance envelope is not numerically adjudicative.
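The combination rules named above, and the derivation of B_c from B_𝓝(η), can be sketched as follows. The nuisance sources and their half-widths are made-up numbers, the supremum is approximated by a maximum over a finite η grid, and the rule names are hypothetical labels.

```python
import math
from typing import Dict, List, Sequence

def combine(terms: Sequence[float], rule: str) -> float:
    """Combine non-negative nuisance half-widths at one eta under a registered rule."""
    if rule == "linear":
        return sum(terms)
    if rule == "quadrature":
        return math.sqrt(sum(t * t for t in terms))
    if rule == "envelope_max":
        return max(terms)
    raise ValueError(f"unregistered combination rule: {rule}")

def critical_bound(sources: Dict[str, List[float]], rule: str) -> float:
    """B_c as the maximum over the eta grid of the combined envelope B_N(eta)."""
    n_points = len(next(iter(sources.values())))
    envelope = [combine([vals[i] for vals in sources.values()], rule)
                for i in range(n_points)]
    return max(envelope)

# Illustrative half-widths in pointwise visibility units on a three-point grid.
sources = {
    "detector_drift": [0.003, 0.004, 0.003],
    "phase_instability": [0.004, 0.003, 0.004],
}
b_c_quad = critical_bound(sources, "quadrature")
b_c_lin = critical_bound(sources, "linear")
```

The two rules give different bounds from the same inputs, which is why the combination rule has to be registered rather than chosen after the residual is known.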

7.6 Definition — Baseline/Nuisance Degeneracy

A predicted residual Δ_CBR(η) is baseline/nuisance-degenerate if there exists an allowed baseline member V_ℬ(η; θ′) ∈ 𝔅, or an allowed nuisance deformation within B_𝓝(η), such that the predicted endpoint becomes indistinguishable from ordinary behavior under the registered endpoint functional and statistical rule.

In that case, the predicted residual may be mathematically defined, but it is not empirically identifiable as CBR-relevant.

A degenerate predicted endpoint cannot support CBR. It may indicate that the platform, endpoint statistic, nuisance model, or predicted morphology is insufficiently discriminating.

7.7 Nuisance Anti-Rescue Rule

B_𝓝(η) and B_c cannot be widened after data interpretation in order to absorb a residual, avoid support, or prevent failure.

Such widening may motivate a revised nuisance model. It may define a new dossier version. It does not alter the verdict of the original locked version.

The rule is:

A new nuisance envelope creates a new test object. It does not rescue the old one.

7.8 Nuisance Adequacy Condition

The nuisance envelope must be conservative enough to absorb ordinary platform uncertainty, but not so broad that it eliminates adjudication.

An envelope that is too narrow creates false support.

An envelope that is too broad creates non-falsifiability.

A valid nuisance envelope is therefore:

ordinary-effect complete, uncertainty-explicit, endpoint-compatible, provenance-labeled, non-degenerate, and non-adaptive.

Proposition 5 — Nuisance Adequacy

A nuisance envelope is adequate for a CBR residual test only if B_𝓝(η) is registered before endpoint evaluation, bounds ordinary non-CBR deviations across the declared critical regime, yields a critical nuisance bound B_c in the units of the endpoint statistic, uses provenance-labeled numerical quantities, does not make the predicted endpoint baseline/nuisance-degenerate, and cannot be widened after residual inspection to change the verdict.

Proof Sketch

A residual cannot support CBR if it lies within ordinary nuisance. A strong null cannot be established if nuisance bounds are undefined or unstable. If nuisance is widened after the result, the tested object changes. If nuisance is expressed in units incompatible with the endpoint statistic, the decision threshold is not meaningful. If the predicted endpoint is degenerate with nuisance, it cannot be identified as CBR-relevant. Therefore, nuisance adequacy requires a pre-registered envelope, endpoint-compatible critical bound, provenance discipline, non-degeneracy, and an anti-rescue rule.

7.9 Transition

The nuisance bound defines what ordinary deviations can absorb. The test also requires a detectability margin specifying how large a residual must be before the platform can distinguish it from baseline-plus-nuisance behavior.

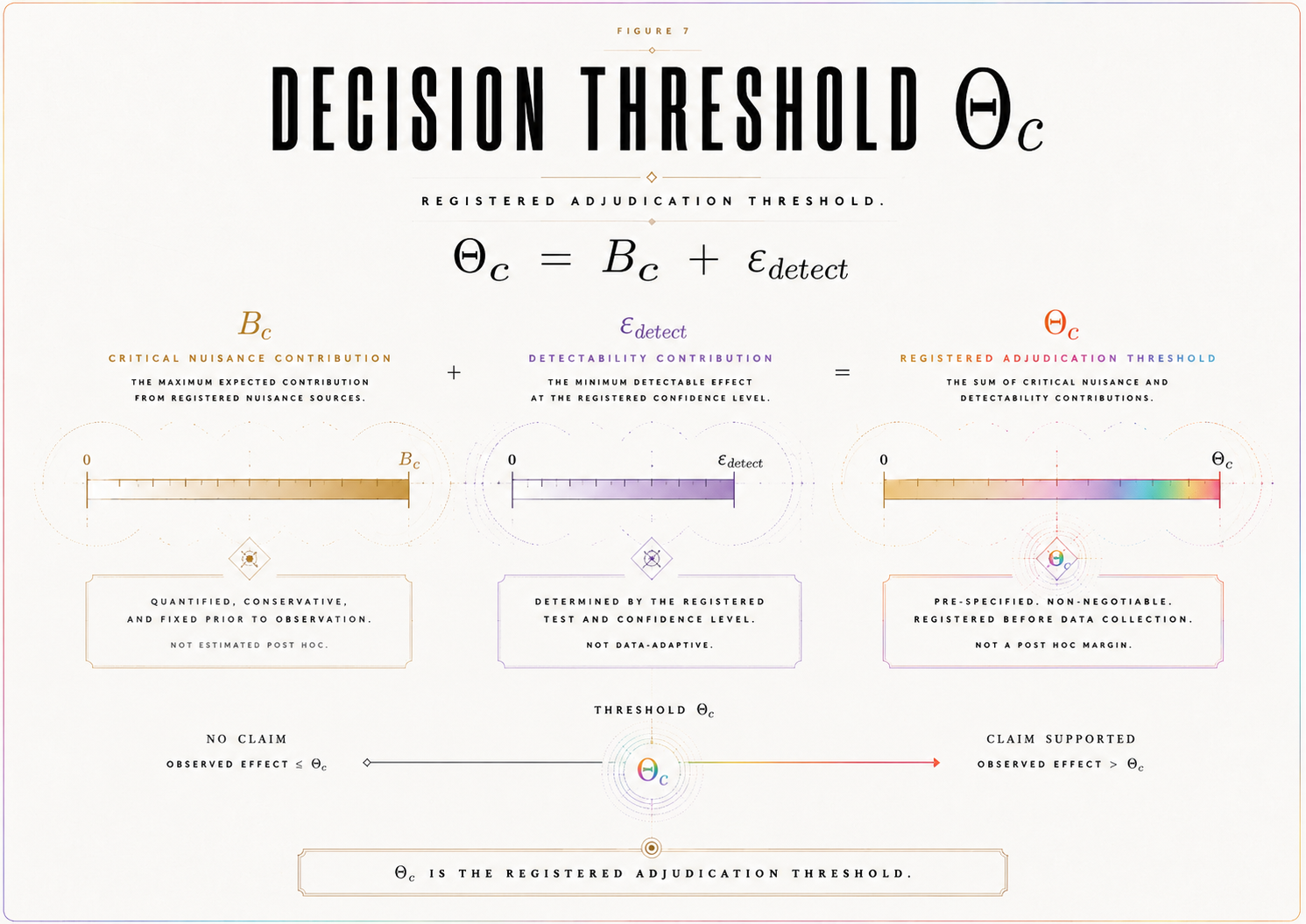

SECTION 8. Detectability and Decision Threshold

The detectability threshold specifies whether the platform is capable of distinguishing a CBR-relevant endpoint from ordinary baseline-plus-nuisance behavior. It prevents a null result from being mistaken for failure when the predicted effect was below the platform’s sensitivity.

Detectability is not a rhetorical claim that an effect is “large enough.” It is a registered numerical condition expressed in the same endpoint units as T_c, T_CBR, and B_c.

8.1 Detectability Threshold

Let ε_detect be the registered minimum detectable endpoint separation.

It must be defined in the same units as the endpoint statistic T_c and the predicted endpoint T_CBR. For a pointwise visibility endpoint, ε_detect may be expressed in visibility units. For a normalized, integrated, model-comparison, or morphology-sensitive endpoint, it must be expressed in the corresponding registered endpoint units.

The detectability threshold must be based on:

sampling density,

visibility resolution,

η uncertainty,

detector sensitivity,

systematic uncertainty,

statistical power,

endpoint morphology where applicable,

and the registered confidence or error-control convention.

Every numerical component entering ε_detect must carry a provenance label: measured, published, calibrated, derived, simulated, illustrative, assumed, or required for future testing.

8.2 Decision Threshold

The nuisance bound and detectability threshold combine into the registered decision threshold:

Θ_c = B_c + ε_detect.

This threshold is the minimum endpoint separation required for adjudication inside the declared critical accessibility regime.

It is not a visual margin. It is the registered boundary between residuals that remain absorbable by baseline-plus-nuisance behavior and residuals that exceed the platform’s ordinary allowance.

8.3 Endpoint-Units Discipline

The expression:

Θ_c = B_c + ε_detect

is meaningful only if B_c and ε_detect are expressed in the same registered endpoint units.

The comparison:

T_c > Θ_c

is meaningful only if T_c is expressed in those same units.

The comparison:

T_CBR > Θ_c

is meaningful only if T_CBR is expressed in those same units.

If endpoint-units consistency fails, the test is incomplete rather than adjudicative.
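Endpoint-units discipline can be enforced in code by tagging every threshold quantity with its registered units and refusing mixed comparisons. The `EndpointQuantity` class, the unit tags, and the numerical values are hypothetical; this is a minimal sketch rather than a prescribed implementation.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class EndpointQuantity:
    value: float
    units: str  # registered endpoint-units tag, e.g. "sup-residual"

    def _check(self, other: "EndpointQuantity") -> None:
        if self.units != other.units:
            raise ValueError(f"unit mismatch: {self.units} vs {other.units}")

    def __add__(self, other: "EndpointQuantity") -> "EndpointQuantity":
        self._check(other)
        return EndpointQuantity(self.value + other.value, self.units)

    def exceeds(self, other: "EndpointQuantity") -> bool:
        self._check(other)
        return self.value > other.value

b_c = EndpointQuantity(0.005, "sup-residual")
eps_detect = EndpointQuantity(0.003, "sup-residual")
theta_c = b_c + eps_detect  # Theta_c = B_c + eps_detect

t_cbr = EndpointQuantity(0.020, "sup-residual")
t_integrated = EndpointQuantity(0.020, "integrated")  # different endpoint space
```

Calling `t_integrated.exceeds(theta_c)` raises `ValueError`, which corresponds to the "incomplete rather than adjudicative" status described above.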

8.4 Detectability Condition

For registered failure to be possible, the predicted CBR endpoint must satisfy:

T_CBR > Θ_c.

If:

T_CBR ≤ Θ_c,

then the predicted endpoint cannot be distinguished from the registered allowance for baseline, nuisance, and detectability effects. In that case, a null result cannot fail the instantiation. The correct status is inconclusive for failure.

This protects CBR from unfair failure and protects the test from overclaiming.

8.5 Support Condition

For registered support to be possible, the observed endpoint must satisfy:

T_c > Θ_c

under valid conditions, and must match the registered residual morphology where applicable.

This condition is necessary but not automatically sufficient. Support also requires baseline separation, nuisance separation, non-degeneracy, valid η calibration, data adequacy, and satisfaction of the registered statistical rule and validity gates.
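The two threshold conditions in 8.4 and 8.5 can be kept separate in code so that neither is mistaken for a verdict. The function and field names are hypothetical, the numbers are illustrative, and all inputs are assumed to be in the same registered endpoint units. The sketch covers only the threshold comparisons, not morphology, non-degeneracy, or validity gates.

```python
from typing import NamedTuple

class ThresholdChecks(NamedTuple):
    failure_possible: bool  # 8.4: T_CBR > Theta_c
    support_possible: bool  # 8.5: T_c > Theta_c (necessary, not sufficient)

def threshold_checks(t_obs: float, t_cbr: float, theta_c: float) -> ThresholdChecks:
    """Evaluate the detectability and support preconditions against Theta_c."""
    return ThresholdChecks(failure_possible=t_cbr > theta_c,
                           support_possible=t_obs > theta_c)

# A null observation when the predicted endpoint was detectable.
detectable_null = threshold_checks(t_obs=0.004, t_cbr=0.020, theta_c=0.008)

# A null observation when the predicted endpoint sat below threshold:
# the status is inconclusive for failure.
undetectable_null = threshold_checks(t_obs=0.004, t_cbr=0.006, theta_c=0.008)
```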

8.6 Detectability Registration

The dossier must specify:

how ε_detect is computed,

which uncertainty sources enter it,

the provenance label for each numerical input,

the power requirement for detecting T_CBR,

the sample size or η-density requirement,

the visibility-resolution requirement,

the endpoint-specific unit convention,

and the conditions under which detectability is considered achieved.

If ε_detect is not specified, the model is incomplete. If it is changed after data interpretation, the test object changes.

Proposition 6 — Detectability Discipline

A CBR test can fail a registered instantiation only if the predicted endpoint exceeds the registered decision threshold Θ_c, all threshold components are expressed in the same registered endpoint units, and the platform satisfies the registered sensitivity, sampling, calibration, and statistical conditions required to detect that endpoint.

Proof Sketch

Failure requires that a predicted, detectable endpoint be absent from the observed data. If T_CBR ≤ Θ_c, the predicted endpoint is not detectable under the registered conditions. If the threshold quantities are not expressed in the same endpoint units, the comparison is not meaningful. If the platform fails to satisfy the registered sensitivity conditions, the endpoint may be absent only because the test could not reveal it. Therefore, failure requires T_CBR > Θ_c, endpoint-units consistency, and achieved detectability.

8.7 Transition

The decision threshold prepares the model for endpoint adjudication. The next section defines the observed endpoint statistic T_c and the predicted endpoint T_CBR that are compared against Θ_c.

SECTION 9. Endpoint Statistic T_c and Predicted Endpoint T_CBR

The endpoint statistic is the rule that converts residual structure into an adjudicative quantity. It is the point at which the platform model becomes testable.

The key distinction is between the observed endpoint and the predicted endpoint.

T_c is computed from observed data.

T_CBR is generated by the registered CBR platform model.

A locked test requires both.

9.1 Observed Endpoint Statistic

Define:

T_c = 𝒯[V_obs(η) − V_ℬ(η), η ∈ I_c].

Here 𝒯 is the registered endpoint functional.

Possible endpoint functionals include:

supremum residual,

normalized supremum residual,

integrated residual,

localized kink statistic,

slope-change statistic,

curvature statistic,

morphology-sensitive statistic,

or model-comparison statistic.

For example:

T_c = sup_{η ∈ I_c} |V_obs(η) − V_ℬ(η)|

or:

T_c = sup_{η ∈ I_c} |V_obs(η) − V_ℬ(η)| / σ_total(η).

Here σ_total(η) denotes the registered total uncertainty on the residual at η.

The endpoint functional must be selected before data interpretation.
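The two example functionals can be computed on a sampled η grid as follows. The visibility values and uncertainties are made-up numbers, and the supremum is approximated by a maximum over the grid points.

```python
from typing import Sequence

def sup_residual(v_obs: Sequence[float], v_base: Sequence[float]) -> float:
    """T_c as the largest absolute residual over the sampled critical regime."""
    return max(abs(o - b) for o, b in zip(v_obs, v_base))

def normalized_sup_residual(v_obs: Sequence[float],
                            v_base: Sequence[float],
                            sigma_total: Sequence[float]) -> float:
    """T_c as the largest absolute residual divided by the local total uncertainty."""
    return max(abs(o - b) / s for o, b, s in zip(v_obs, v_base, sigma_total))

# Illustrative samples on three eta points inside I_c.
v_obs = [0.80, 0.70, 0.55]
v_base = [0.81, 0.72, 0.60]
sigma_total = [0.01, 0.01, 0.02]

t_sup = sup_residual(v_obs, v_base)                            # about 0.05, visibility units
t_norm = normalized_sup_residual(v_obs, v_base, sigma_total)   # about 2.5, dimensionless
```

The two statistics live in different units, so the threshold registered for one cannot be reused for the other.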

9.2 Predicted Endpoint

Define:

T_CBR = 𝒯[Δ_CBR(η), η ∈ I_c].

This is the registered predicted endpoint.

Here:

Δ_CBR(η) = V_CBR(η) − V_ℬ(η).

Thus, T_CBR is not a free parameter. It is generated by the platform model through the chain:

η → ℛ_C^plat → Φ∗_C(η) → V_CBR(η) → Δ_CBR(η) → T_CBR.

If T_CBR is not generated by a registered rule, the model is not numerically adjudicable.
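The chain can be exercised end to end with a toy model. Everything numerical here is invented purely to make the steps executable: the two candidate labels, the burden values with a crossover at η = 0.5, the visibility-response map, and the baseline. None of it is a claim about an actual CBR platform model.

```python
from typing import Callable, Dict, Sequence

def selected_candidate(eta: float,
                       burden: Dict[str, Callable[[float], float]]) -> str:
    """Phi*_C(eta): the candidate label that minimizes the burden proxy at this eta."""
    return min(sorted(burden), key=lambda name: burden[name](eta))

def predicted_endpoint(eta_grid: Sequence[float],
                       burden: Dict[str, Callable[[float], float]],
                       v_response: Dict[str, Callable[[float], float]],
                       v_base: Callable[[float], float]) -> float:
    """T_CBR as the maximum of |Delta_CBR(eta)| over the sampled critical regime."""
    deltas = []
    for eta in eta_grid:
        phi = selected_candidate(eta, burden)
        deltas.append(v_response[phi](eta) - v_base(eta))  # Delta_CBR(eta)
    return max(abs(d) for d in deltas)

def v_base(eta: float) -> float:
    return 0.9 * (1.0 - eta)  # hypothetical registered baseline

# Toy burden proxy: eta enters nontrivially, so the selection changes at eta = 0.5.
burden = {"phi_a": lambda eta: eta, "phi_b": lambda eta: 0.5}
# Toy visibility-response map: phi_b lowers visibility by 0.02 relative to baseline.
v_response = {"phi_a": v_base, "phi_b": lambda eta: v_base(eta) - 0.02}

eta_grid = [0.3, 0.4, 0.6, 0.7]
t_cbr = predicted_endpoint(eta_grid, burden, v_response, v_base)
```

If the burden did not depend on η, the same candidate would be selected everywhere and the predicted endpoint would carry no accessibility-critical structure.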

9.3 Endpoint Congruence

T_c and T_CBR must use the same endpoint functional 𝒯.

If T_CBR predicts a scalar magnitude, T_c must measure that scalar magnitude.

If T_CBR predicts a localized kink, slope change, curvature feature, or other morphology, T_c must test that morphology.

A scalar statistic cannot adjudicate a morphology-specific prediction unless the scalar statistic is registered as the correct reduction of that morphology. A morphology-sensitive statistic cannot be introduced after inspecting the residual curve.

9.4 Endpoint-Units Consistency

The endpoint statistic must define the units or comparison scale for:

T_c,

T_CBR,

B_c,

ε_detect,

and Θ_c.

If 𝒯 is changed, the units of these quantities may also change. Therefore, the endpoint functional and threshold quantities must be registered together.

A pointwise visibility threshold cannot be used to adjudicate a curvature statistic unless the dossier defines a registered transformation into curvature-endpoint units. A nuisance envelope in visibility units cannot adjudicate a model-comparison statistic unless mapped into the model-comparison scale.

Without this consistency, the decision rule is formally invalid.

9.5 Baseline/Nuisance Non-Degeneracy

The predicted endpoint T_CBR must not be degenerate with allowed baseline or nuisance variation.

The model must state whether there exists:

an allowed V_ℬ(η; θ′) ∈ 𝔅,

or an allowed nuisance deformation inside B_𝓝(η),

such that T_CBR becomes indistinguishable from ordinary behavior under 𝒯 and the registered statistical rule.

If such a degeneracy exists, the platform may still be useful for constraint-setting or model development, but it cannot produce registered support for CBR using that endpoint.

9.6 Primary Endpoint Rule

Only one primary endpoint statistic controls the decisive verdict.

Secondary endpoints may be registered for diagnostics, robustness checks, or exploratory analysis, but they cannot replace the primary endpoint after the result is known.

The locked rule is:

The decisive endpoint is the registered primary endpoint, not the most favorable endpoint discovered after data inspection.

9.7 Endpoint Statistical Rule

The endpoint functional must be paired with a statistical rule specifying:

the uncertainty convention,

how statistical and systematic errors enter T_c,

how T_c > Θ_c is adjudicated,

how T_c ≤ Θ_c is adjudicated,

whether confidence intervals or error-control thresholds are used,

how multiple comparisons are handled if secondary endpoints are reported,

and how morphology agreement is assessed if applicable.

Without this rule, the comparison among T_c, T_CBR, and Θ_c is qualitative rather than adjudicative.

9.8 Endpoint Lock Rule

The endpoint functional 𝒯, the primary statistic T_c, the predicted endpoint T_CBR, endpoint units, threshold mapping, and any registered morphology condition must be fixed before data interpretation.

If 𝒯 is selected after inspecting r(η), the result is exploratory.

If T_CBR is adjusted after observing T_c, the model is post hoc.

If morphology is introduced after the fact, the endpoint is not registered.

If endpoint units are changed after data inspection, the threshold comparison is invalid for the original dossier.

Proposition 7 — Endpoint Congruence

A platform-specific CBR endpoint is adjudicative only if the observed endpoint T_c and predicted endpoint T_CBR are generated by the same registered endpoint functional 𝒯, expressed in the same endpoint units as B_c, ε_detect, and Θ_c, and compared under the same statistical rule.

Proof Sketch

The test compares prediction and observation. If T_c and T_CBR are computed by different endpoint rules, then the comparison is not well-defined. If threshold components are expressed in different units from the endpoint statistic, the decision rule is invalid. If the endpoint rule changes after data interpretation, the verdict applies to a different object. Therefore, adjudication requires a shared pre-registered endpoint functional, endpoint-unit consistency, and statistical rule.

9.9 Transition

Once T_c and T_CBR are defined, the paper can state the numerical-completeness theorem: the condition under which the platform-specific instantiation is specified completely enough to be supported, failed, or left non-adjudicative.

SECTION 10. Theorem 1 — Numerical Instantiation Completeness

The preceding sections define the objects required for a platform-specific CBR instantiation to become numerically executable. This section states the corresponding completeness theorem.

The theorem does not claim that CBR is true. It does not claim that the residual exists in nature. It states the conditions under which a declared platform model is complete enough to generate and adjudicate a predicted accessibility-critical endpoint.

Theorem 1 — Numerical Instantiation Completeness

A platform-specific CBR instantiation is numerically complete only if C, 𝒜(C), ≃_C, ℛ_C^plat, η, I_c or N(η_c), 𝔅, V_ℬ(η), B_𝓝(η), B_c, ε_detect, Θ_c, T_c, T_CBR, endpoint morphology where applicable, endpoint-units convention, statistical rule, parameter-provenance rule, data-adequacy rule, visibility-response map, validity gates, and verdict rule are fixed before data interpretation.

Equivalently:

numerical completeness requires a registered law-form domain, a computable burden proxy, a nontrivial accessibility bridge, a baseline model class, a nuisance envelope, a detectability threshold, endpoint-units consistency, an observed endpoint statistic, a predicted endpoint, a provenance-labeled parameter registry, and a verdict rule.

10.1 Proof Sketch

A CBR residual test requires a law-form object, an empirical bridge, an ordinary baseline, a nuisance allowance, a detectability threshold, an observed endpoint, a predicted endpoint, and a verdict rule.

The law-form object requires C, 𝒜(C), ≃_C, and ℛ_C^plat.

The empirical bridge requires η, I_c or N(η_c), a visibility-response map, and a rule generating Δ_CBR(η).

The baseline comparison requires 𝔅 and V_ℬ(η).

The nuisance and detectability structure requires B_𝓝(η), B_c, ε_detect, and Θ_c.

The endpoint comparison requires T_c, T_CBR, an endpoint functional, endpoint-unit consistency, endpoint congruence, and a statistical rule.

The numerical status of the model requires a parameter-provenance rule distinguishing measured, published, calibrated, derived, simulated, illustrative, assumed, and future-required quantities.

The verdict structure requires data adequacy, validity gates, and support/failure/inconclusive rules.

If any of these are missing, the relation between prediction and observation cannot be adjudicated. If any are selected after observing data, the model is exploratory rather than registered.

Therefore, numerical completeness requires all listed objects to be fixed before data interpretation.

Corollary 1 — Incomplete Numerical Instantiation

If T_CBR, Θ_c, η calibration, 𝔅, B_𝓝(η), B_c, T_c, endpoint-units convention, visibility-response map, parameter-provenance registry, or statistical rule is missing, the model is not yet numerically adjudicable.

Proof Sketch

Each listed object is necessary for comparing a predicted endpoint with an observed endpoint under locked rules. Without T_CBR, there is no prediction. Without Θ_c, there is no decision threshold. Without η calibration, the accessibility bridge is undefined. Without 𝔅 or B_𝓝(η), ordinary explanations cannot be bounded. Without T_c, no observed endpoint exists. Without endpoint-units consistency, the threshold comparison is undefined. Without the visibility-response map, the model cannot generate Δ_CBR(η). Without parameter provenance, numerical values have unclear evidential status. Without the statistical rule, no verdict is adjudicative. Therefore, the instantiation is incomplete.
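Corollary 1 lends itself to a simple presence check. The key names below are hypothetical identifiers for the objects listed in the corollary, and the dossier contents are placeholders. The check detects only missing entries; it does not establish that the entries present are adequate.

```python
from typing import Any, Dict, List

# Hypothetical keys for the objects named in Corollary 1.
REQUIRED = (
    "T_CBR", "Theta_c", "eta_calibration", "baseline_class", "B_N", "B_c",
    "T_c", "endpoint_units", "visibility_response_map",
    "provenance_registry", "statistical_rule",
)

def missing_objects(dossier: Dict[str, Any]) -> List[str]:
    """Return the Corollary 1 objects that are absent or unset in the dossier."""
    return [key for key in REQUIRED if dossier.get(key) is None]

# Placeholder dossier with every object registered except the statistical rule.
dossier = {key: "registered" for key in REQUIRED}
dossier["statistical_rule"] = None

gaps = missing_objects(dossier)
```

A non-empty result means the model is not yet numerically adjudicable. An empty result means only that this necessary condition is met.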

Corollary 2 — Numerical Completeness Is Not Confirmation

A numerically complete CBR instantiation is not thereby empirically confirmed. It is only complete enough to be simulated, constrained, supported, failed, or left inconclusive under registered conditions.

Proof Sketch

Completeness concerns the specification of the test object. Confirmation concerns the relation between that object and valid empirical data. A complete model may receive support, fail, or remain inconclusive depending on the observed endpoint and validity conditions. Therefore, numerical completeness is a precondition for adjudication, not a claim of truth.

Corollary 3 — Completeness Does Not Guarantee Identifiability

A numerically complete CBR instantiation may still be non-identifiable if Δ_CBR(η) is degenerate with an allowed baseline member, nuisance deformation, η-calibration error, or endpoint-statistic ambiguity.

Proof Sketch

Numerical completeness specifies all required objects. Identifiability asks whether the predicted endpoint can be distinguished from ordinary explanations under those objects. If an allowed baseline or nuisance deformation reproduces the predicted endpoint under the registered statistic, the prediction is not identifiable as CBR-relevant. Therefore, completeness is necessary but not sufficient for empirical discrimination.

10.2 Completeness Versus Identifiability

Numerical completeness is necessary but not sufficient for empirical discrimination.

A model may define every required object and still fail to be empirically identifiable if the predicted residual is degenerate with baseline variation, nuisance effects, η uncertainty, detector artifacts, or endpoint-unit ambiguity.

The next section therefore asks a further question:

Can the predicted residual be distinguished from ordinary platform behavior under the registered rules?

10.3 Transition

Numerical completeness fixes the objects required for adjudication. The predicted residual must also be identifiable. The next section states the identifiability and degeneracy conditions under which T_CBR can be distinguished from ordinary baseline-plus-nuisance behavior.

SECTION 11. Identifiability and Degeneracy Analysis

Numerical completeness is necessary, but it is not sufficient for empirical adjudication.

A platform-specific CBR model may define Δ_CBR(η), T_CBR, Θ_c, and T_c and still fail to produce an identifiable endpoint if the predicted residual is indistinguishable from allowed baseline variation, nuisance deformation, η miscalibration, estimator bias, or endpoint ambiguity.

The central question of this section is therefore:

Can the predicted accessibility-critical residual Δ_CBR(η) be distinguished from ordinary platform behavior under the registered baseline, nuisance, endpoint, and statistical rules?

If the answer is no, the model may remain mathematically complete, but it is not empirically discriminating in the declared platform.

11.1 Identifiability Problem

A predicted residual may be mathematically defined but empirically non-identifiable.

This can occur when Δ_CBR(η) is reproducible by:

an allowed baseline parameter shift,

a permitted nuisance deformation,

η calibration error,

decoherence-model uncertainty,

phase drift,

detector nonlinearity,

postselection artifacts,

finite-sampling fluctuation,

visibility-estimator bias,

endpoint ambiguity,

or statistical indistinguishability under the registered rule.

In such a case, the residual is not uniquely CBR-relevant. It may be a real feature of the data, but if it can be absorbed by ordinary registered effects, it cannot function as support for the CBR instantiation.

11.2 Definition — Degeneracy Operator

Let Deg_C(Δ) denote the class of ordinary baseline, nuisance, calibration, estimator, endpoint, and statistical transformations permitted by the locked dossier that can reproduce, absorb, or render indistinguishable a candidate residual Δ(η) under the registered endpoint functional 𝒯 and statistical rule A_stat.

More explicitly, Deg_C(Δ) includes all registered ordinary transformations under which there exists:

an allowed baseline member V_ℬ(η; θ′) ∈ 𝔅,

an allowed nuisance deformation δ_𝓝(η) bounded by B_𝓝(η),

an allowed η-calibration perturbation,

an allowed visibility-estimator deformation,

an allowed postselection or coincidence-window uncertainty,

or a registered statistical indistinguishability relation,

such that the endpoint generated by Δ(η) cannot be distinguished from ordinary behavior under 𝒯 and A_stat.

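Write Δ ∈ Deg_C when at least one such transformation exists, that is, when Deg_C(Δ) is non-empty. With this convention, a predicted CBR residual is identifiable only if: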

Δ_CBR ∉ Deg_C

under the locked rules.

This definition makes identifiability a registered mathematical condition rather than an interpretive judgment.

11.3 Identifiability Condition

A platform-specific CBR endpoint is identifiable only if both conditions hold.

First:

T_CBR > Θ_c.

The predicted endpoint must exceed the registered decision threshold.

Second:

Δ_CBR ∉ Deg_C.

The predicted residual must not be absorbable by the registered ordinary-degeneracy class.

In simple terms:

The predicted residual must be both large enough and different enough.

A residual below threshold is not detectable.

A residual absorbed by ordinary effects is not identifiable.

A residual identified only after changing the endpoint rule is not registered.
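The three exclusions above can be read as a short decision procedure. The following Python sketch is illustrative only: the names EndpointCheck and classify_endpoint, the status strings, and the order in which the checks are applied are assumptions introduced here, not registered dossier objects. The flag in_deg_c is taken as an input, because deciding it is the task of the degeneracy test.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EndpointCheck:
    """Hypothetical container for the inputs to the identifiability condition."""
    t_cbr: float                       # predicted endpoint T_CBR
    theta_c: float                     # registered decision threshold Θ_c
    in_deg_c: bool                     # True if Δ_CBR ∈ Deg_C under the locked rules
    endpoint_rule_locked: bool = True  # False if the endpoint rule changed after registration


def classify_endpoint(check: EndpointCheck) -> str:
    """Return an illustrative status label for the predicted endpoint."""
    if not check.endpoint_rule_locked:
        return "not registered"       # identified only after changing the endpoint rule
    if not check.t_cbr > check.theta_c:
        return "not detectable"       # T_CBR does not exceed Θ_c
    if check.in_deg_c:
        return "not identifiable"     # absorbed by ordinary registered effects
    return "identifiable"             # large enough and different enough
```

The strict inequality mirrors T_CBR > Θ_c, so a predicted endpoint exactly at the threshold is treated as not detectable in this sketch.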

11.4 Degeneracy Classes

The main degeneracy classes include the following.

Baseline degeneracy.

The predicted residual can be absorbed by an allowed baseline member V_ℬ(η; θ′) ∈ 𝔅.

Nuisance degeneracy.

The predicted residual lies within or is reproducible by the registered nuisance envelope B_𝓝(η).

η-calibration degeneracy.

The predicted residual can be produced by an allowed shift, rescaling, uncertainty, or misassignment of η.

Decoherence-model degeneracy.

The predicted residual can be reproduced by ordinary uncertainty in the decoherence model.

Phase-drift degeneracy.

The residual shape can be generated by allowed phase instability or timing drift.

Detector-response degeneracy.

The residual can be reproduced by detector inefficiency, nonlinearity, dark counts, dead-time effects, or background-count behavior.

Postselection degeneracy.

The residual depends on data-inclusion, coincidence-window, or postselection choices rather than the registered CBR bridge.

Estimator degeneracy.

The residual arises from the visibility estimator, normalization procedure, binning rule, or finite-sampling behavior.

Endpoint degeneracy.

The residual appears significant under some other statistic but not under the registered primary endpoint 𝒯.

Statistical degeneracy.

The residual cannot be distinguished from ordinary variation under the registered uncertainty convention, confidence rule, or error-control standard.

These degeneracy classes do not automatically defeat the model. They define what the model must separate itself from before support can be claimed.

11.5 Degeneracy Test

The dossier must include a degeneracy test.

The test asks:

Is there an allowed baseline member V_ℬ(η; θ′) ∈ 𝔅, an allowed nuisance deformation δ_𝓝(η) bounded by B_𝓝(η), an allowed η-calibration perturbation, an allowed postselection or coincidence-window uncertainty, or an allowed estimator or statistical transformation such that Δ_CBR(η) becomes indistinguishable from ordinary behavior under 𝒯 and A_stat?

If yes, then:

Δ_CBR ∈ Deg_C

and the endpoint is non-identifiable.

If no, and if T_CBR > Θ_c, then the endpoint is identifiable under the registered rules.
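A partial version of this test can be sketched numerically. The Python fragment below covers only baseline and nuisance degeneracy, and it rests on assumptions that are not part of the dossier: a finite η grid, a finite grid of allowed baseline parameters θ′, a reference parameter theta_ref, and a pointwise absorption criterion in which the residual counts as absorbed when a baseline shift plus a deformation inside B_𝓝(η) reproduces it at every grid point. The function name is_absorbed and all numbers are hypothetical. A negative result from this sketch is therefore not a demonstration that Δ_CBR ∉ Deg_C, since η-calibration, postselection, estimator, and statistical transformations are not examined.

```python
from typing import Callable, Sequence


def is_absorbed(
    etas: Sequence[float],
    delta_cbr: Callable[[float], float],
    baseline: Callable[[float, float], float],
    theta_ref: float,
    theta_grid: Sequence[float],
    nuisance_bound: Callable[[float], float],
) -> bool:
    """True if some allowed θ' plus a bounded nuisance term reproduces Δ_CBR on the grid."""
    for theta in theta_grid:
        # What remains of Δ_CBR after subtracting the baseline shift θ_ref -> θ'.
        leftover = [
            delta_cbr(eta) - (baseline(eta, theta) - baseline(eta, theta_ref))
            for eta in etas
        ]
        # Absorbed if the leftover fits inside the nuisance envelope at every η.
        if all(abs(r) <= nuisance_bound(eta) for r, eta in zip(leftover, etas)):
            return True
    return False


# Illustrative values only; none of these numbers is a measurement.
etas = [i / 10 for i in range(11)]
baseline = lambda eta, theta: theta * (1.0 - eta)   # hypothetical baseline family
theta_grid = [0.88, 0.89, 0.90, 0.91, 0.92]         # hypothetical allowed θ'
bound = lambda eta: 0.01                            # hypothetical flat B_N(η)

small_offset = lambda eta: 0.005
local_bump = lambda eta: 0.05 if 0.4 <= eta <= 0.6 else 0.0

print(is_absorbed(etas, small_offset, baseline, 0.90, theta_grid, bound))  # True
print(is_absorbed(etas, local_bump, baseline, 0.90, theta_grid, bound))    # False
```

In this toy setting the small offset sits inside the nuisance envelope and is absorbed, while the localized bump exceeds anything the allowed baseline shifts and envelope can reproduce together.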

Theorem 2 — Endpoint Identifiability Condition

A platform-specific CBR endpoint is identifiable only if its predicted endpoint exceeds the registered decision threshold and its registered residual morphology is outside the locked degeneracy class Deg_C.

Equivalently:

Identifiability requires T_CBR > Θ_c and Δ_CBR ∉ Deg_C.

Proof Sketch

If T_CBR ≤ Θ_c, the predicted endpoint does not exceed the registered decision threshold. It can neither support nor fail the model, because the platform is not committed to detecting it.

If Δ_CBR ∈ Deg_C, then the predicted residual can be absorbed by allowed baseline behavior, nuisance deformation, η-calibration uncertainty, estimator ambiguity, or statistical indistinguishability under the locked rules. In that case, the residual is not uniquely CBR-relevant.

Therefore, identifiability requires both threshold separation and non-degeneracy.

Corollary — Mathematical Definition Is Not Empirical Identification

A mathematically defined Δ_CBR(η) does not by itself define an identifiable CBR endpoint. Identification requires non-degeneracy against 𝔅, B_𝓝(η), η uncertainty, endpoint mapping, estimator behavior, and the registered statistical rule.

Transition

After establishing identifiability, the paper must state how numerical values are assigned without allowing illustrative, simulated, assumed, or published quantities to acquire false empirical authority.

SECTION 12. Parameter Provenance and Value Classification

A platform-specific numerical model must state where every numerical value comes from.

This requirement is not cosmetic. It prevents the model from treating illustrative values as measurements, simulated values as empirical results, or missing values as if they had already been supplied by the platform.

CBR’s numerical execution must therefore distinguish clearly between values that are measured, published, calibrated, derived, simulated, illustrative, assumed, or required for future testing.

12.1 Principle — Parameter Provenance

Every numerical quantity entering the platform-specific CBR instantiation must be assigned a provenance label before data interpretation.

Permissible labels are:

measured,

published,

calibrated,

derived,

simulated,

illustrative,

assumed,

or required for future testing.

A quantity with unclear provenance cannot support adjudication.

If a value is illustrative, it may help explain the model but cannot support or fail CBR.

If a value is simulated, it may support detectability analysis but cannot count as empirical confirmation.

If a value is published, it must be used within the limits of the published context and not overextended.

If a value is required for future testing, it must be named as missing rather than silently assumed.
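One way to make the labelling requirement mechanical is to attach the label to the value at the moment it is registered. The Python sketch below is a minimal illustration under stated assumptions: the class names Provenance and RegisteredQuantity and the validation rules are hypothetical, and only the eight label strings come from the principle above.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Provenance(Enum):
    MEASURED = "measured"
    PUBLISHED = "published"
    CALIBRATED = "calibrated"
    DERIVED = "derived"
    SIMULATED = "simulated"
    ILLUSTRATIVE = "illustrative"
    ASSUMED = "assumed"
    REQUIRED_FOR_FUTURE_TESTING = "required for future testing"


@dataclass(frozen=True)
class RegisteredQuantity:
    """Hypothetical record pairing a numerical quantity with its provenance label."""
    name: str
    value: Optional[float]
    provenance: Provenance
    note: str = ""   # e.g. the published context a value is restricted to

    def __post_init__(self) -> None:
        if not isinstance(self.provenance, Provenance):
            raise TypeError(f"{self.name}: unclear provenance")
        missing = self.provenance is Provenance.REQUIRED_FOR_FUTURE_TESTING
        if missing and self.value is not None:
            # A quantity named as missing must not carry a silently assumed value.
            raise ValueError(f"{self.name}: missing quantity must not have a value")
        if not missing and self.value is None:
            raise ValueError(f"{self.name}: labelled quantity needs a value")
```

Under this design a quantity cannot be registered without a label, and a quantity marked as required for future testing cannot carry a number.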

12.2 Required Parameter Classes

The paper must classify the provenance of all primary quantities, including:

η range,

η calibration uncertainty,

η sampling density,

I_c or N(η_c),

baseline parameters θ ∈ Θ_ℬ,

baseline visibility V_ℬ(η),

nuisance parameters,

B_𝓝(η),

B_c,

ε_detect,

Θ_c,

endpoint functional 𝒯,

T_CBR,

sampling requirements,

statistical rule,

validity gates,

data-adequacy requirements,

and the degeneracy test for Deg_C.

A model that leaves the provenance of these quantities unstated is not yet numerically adjudicable.
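The coverage requirement can be checked before any verdict is attempted. In the following Python sketch the identifier strings in REQUIRED_CLASSES are hypothetical shorthand for the quantities listed above, and the function unstated_provenance is an assumption introduced for illustration.

```python
from typing import List, Mapping

# Hypothetical identifiers for the primary quantities listed in this subsection.
REQUIRED_CLASSES = (
    "eta_range", "eta_calibration_uncertainty", "eta_sampling_density",
    "I_c", "baseline_parameters", "baseline_visibility",
    "nuisance_parameters", "B_N", "B_c", "eps_detect", "Theta_c",
    "endpoint_functional", "T_CBR", "sampling_requirements",
    "statistical_rule", "validity_gates", "data_adequacy_requirements",
    "degeneracy_test",
)


def unstated_provenance(registry: Mapping[str, str]) -> List[str]:
    """Return the required quantities that have no provenance label in the registry."""
    return [name for name in REQUIRED_CLASSES if not registry.get(name)]
```

An empty return value means every listed quantity carries some label. It does not mean the labels are strong enough for adjudication.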

12.3 No-Invented-Data Rule

The paper must not present illustrative, assumed, or simulated numbers as if they were measured data.

The rule is:

Illustrative values illustrate.

Simulated values simulate.

Assumed values define conditional models.

Measured or published values constrain empirical claims.

If the paper uses symbolic values, they should be identified as symbolic.

If the paper uses illustrative values, it should say that the values are not empirical results.

If the paper uses published values, it must state whether they are directly applicable to the declared platform context or only used as approximate ranges.

12.4 Public-Data Use

If values are drawn from published experiments, the paper must classify what those values can support.

Possible uses include:

numerical illustration,

simulation input,

pilot constraint,

test-design guidance,

or adjudicative testing.

A published value supports adjudication only if it is adequate for the locked CBR object under the declared context. Existing data may lack η calibration, raw counts, nuisance budgets, baseline uncertainty, endpoint-compatible units, degeneracy controls, or sampling inside I_c. In that case, the data may remain useful for modeling or constraint-setting but not for decisive testing.

12.5 Provenance and Verdict Status

Parameter provenance affects verdict status.

If all required numerical quantities are measured, published, calibrated, or derived under valid rules, adjudication may be possible.

If key quantities are simulated or illustrative, the result is simulation or model demonstration, not empirical support.

If key quantities are assumed, the result is conditional.

If key quantities are required for future testing, the instantiation is incomplete for adjudication.

Theorem 3 — Provenance-Limited Verdict

A numerical CBR instantiation cannot receive a stronger verdict status than the least adjudicative provenance class among the quantities required for T_CBR, Θ_c, T_c, Deg_C, and the registered statistical rule.

Equivalently:

The weakest necessary input limits the strongest legitimate verdict.

If any necessary quantity is illustrative, the result cannot exceed illustrative model demonstration.

If any necessary quantity is simulated, the result cannot exceed simulation-based detectability or robustness analysis.

If any necessary quantity is assumed, the result is conditional on that assumption.

If any necessary quantity is required for future testing, the result is incomplete for adjudication.

If all necessary quantities are measured, published, calibrated, or derived under valid rules, empirical adjudication may be possible, subject to identifiability, validity gates, and the registered decision rule.

Proof Sketch

The verdict is computed from the numerical objects that define the predicted endpoint, observed endpoint, threshold, degeneracy status, and statistical comparison. If any required object lacks adjudicative provenance, the verdict cannot exceed that object’s evidential status. An illustrative threshold cannot support empirical failure. A simulated residual cannot support empirical confirmation. A missing η calibration prevents adjudication. Therefore, the strongest legitimate verdict is limited by the least adjudicative necessary input.
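The weakest-input rule can be expressed as a small lookup. The Python sketch below is an illustration, not a registered verdict procedure: the function name verdict_limits and the table CEILINGS are hypothetical, with ceiling wording taken from the theorem. Because the theorem does not rank the non-adjudicative classes against one another, the sketch returns every ceiling that applies rather than imposing an ordering.

```python
from typing import Iterable, List

ADJUDICATIVE = {"measured", "published", "calibrated", "derived"}

# Ceiling attached to each non-adjudicative label, worded as in Theorem 3.
CEILINGS = {
    "illustrative": "illustrative model demonstration",
    "simulated": "simulation-based detectability or robustness analysis",
    "assumed": "conditional on the stated assumption",
    "required for future testing": "incomplete for adjudication",
}


def verdict_limits(labels: Iterable[str]) -> List[str]:
    """Collect the ceilings imposed by the provenance labels of the necessary inputs."""
    limits: List[str] = []
    for label in labels:
        if label in ADJUDICATIVE:
            continue                      # imposes no provenance-based ceiling
        if label not in CEILINGS:
            raise ValueError(f"unclear provenance: {label!r}")
        if CEILINGS[label] not in limits:
            limits.append(CEILINGS[label])
    return limits
```

An empty list means only that provenance does not block adjudication. Identifiability, the validity gates, and the registered decision rule still apply.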

Proposition 8 — Provenance Discipline

A platform-specific CBR model is numerically adjudicable only if every quantity entering 𝔅, V_ℬ(η), B_𝓝(η), B_c, ε_detect, Θ_c, T_c, T_CBR, Deg_C, and the statistical rule has a registered provenance label sufficient for the verdict claimed.

Proof Sketch

The evidential status of a numerical model depends on the status of its inputs. If a quantity is illustrative, it cannot establish empirical support. If a quantity is simulated, it can test detectability but cannot establish an empirical result. If a quantity is missing, adjudication is incomplete. Therefore, the verdict cannot outrun the provenance of the quantities from which it is computed.

12.6 Transition

With provenance discipline in place, the paper can present a minimum viable numerical instantiation while making clear whether its values are symbolic, illustrative, simulated, published, or empirically adjudicative.

SECTION 13. Minimum Viable Platform Model

This section gives a minimum viable platform model.

Its purpose is to show that the numerical instantiation can be made explicit. The model may be symbolic, illustrative, simulated, or based on published ranges, but its evidential status must be stated before any conclusion is drawn.

The minimum viable model is not a claim of empirical confirmation. It is a worked instantiation of the locked CBR numerical structure.

13.1 Purpose

The minimum viable platform model must demonstrate that the following chain is executable:

C → 𝒜(C) → ℛ_C^plat → Φ∗_C(η) → V_CBR(η) → Δ_CBR(η) → T_CBR.

It must also define the ordinary comparison chain:

𝔅 → V_ℬ(η) → B_𝓝(η) → B_c → ε_detect → Θ_c.

Together, these chains allow the model to specify what would count as support, failure, inconclusive exposure, incomplete registration, or exploratory status.
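The final links of the two chains can be sketched in Python. The fragment is a toy illustration under stated assumptions: Δ_CBR(η) is taken to be V_CBR(η) − V_ℬ(η), the endpoint functional is passed in as an arbitrary callable (a maximum absolute residual is used here purely as an example), B_c and ε_detect are supplied as inputs, and the threshold is formed as Θ_c = B_c + ε_detect. All function names and numbers are hypothetical and carry illustrative provenance only.

```python
from typing import Callable, Sequence


def decision_threshold(b_c: float, eps_detect: float) -> float:
    """Θ_c = B_c + ε_detect."""
    return b_c + eps_detect


def predicted_endpoint(
    etas: Sequence[float],
    v_cbr: Callable[[float], float],
    v_baseline: Callable[[float], float],
    endpoint_functional: Callable[[Sequence[float]], float],
) -> float:
    """T_CBR = 𝒯(Δ_CBR), with Δ_CBR(η) assumed to be V_CBR(η) - V_ℬ(η)."""
    residual = [v_cbr(eta) - v_baseline(eta) for eta in etas]
    return endpoint_functional(residual)


# Illustrative values only; none of these numbers is a measurement.
etas_in_ic = [0.40, 0.45, 0.50, 0.55, 0.60]                # grid on a hypothetical I_c
v_b = lambda eta: 0.9 * (1.0 - eta)                         # hypothetical baseline visibility
v_c = lambda eta: v_b(eta) + 0.05                           # hypothetical CBR visibility
max_abs = lambda residual: max(abs(r) for r in residual)    # hypothetical endpoint functional

theta_c = decision_threshold(b_c=0.02, eps_detect=0.01)
t_cbr = predicted_endpoint(etas_in_ic, v_c, v_b, max_abs)
print(t_cbr > theta_c)  # threshold condition only; non-degeneracy is a separate check
```

Passing the threshold comparison in this sketch establishes only the first identifiability condition. The predicted residual must still be tested against Deg_C.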

13.2 Minimal Model Objects

At minimum, the model must define:

η ∈ [0,1], or another registered accessibility range;

I_c = [η₁, η₂], or N(η_c);

𝔅 = {V_ℬ(η; θ) : θ ∈ Θ_ℬ};

the selected or bounded V_ℬ(η);

B_𝓝(η);

B_c;

ε_detect;

Θ_c = B_c + ε_detect;

ℛ_C^plat(Φ; η);