Simulation Scenarios for Constraint-Based Realization: A Synthetic Stress-Test of the Locked C_RAI v0.1 Decision Procedure

Abstract

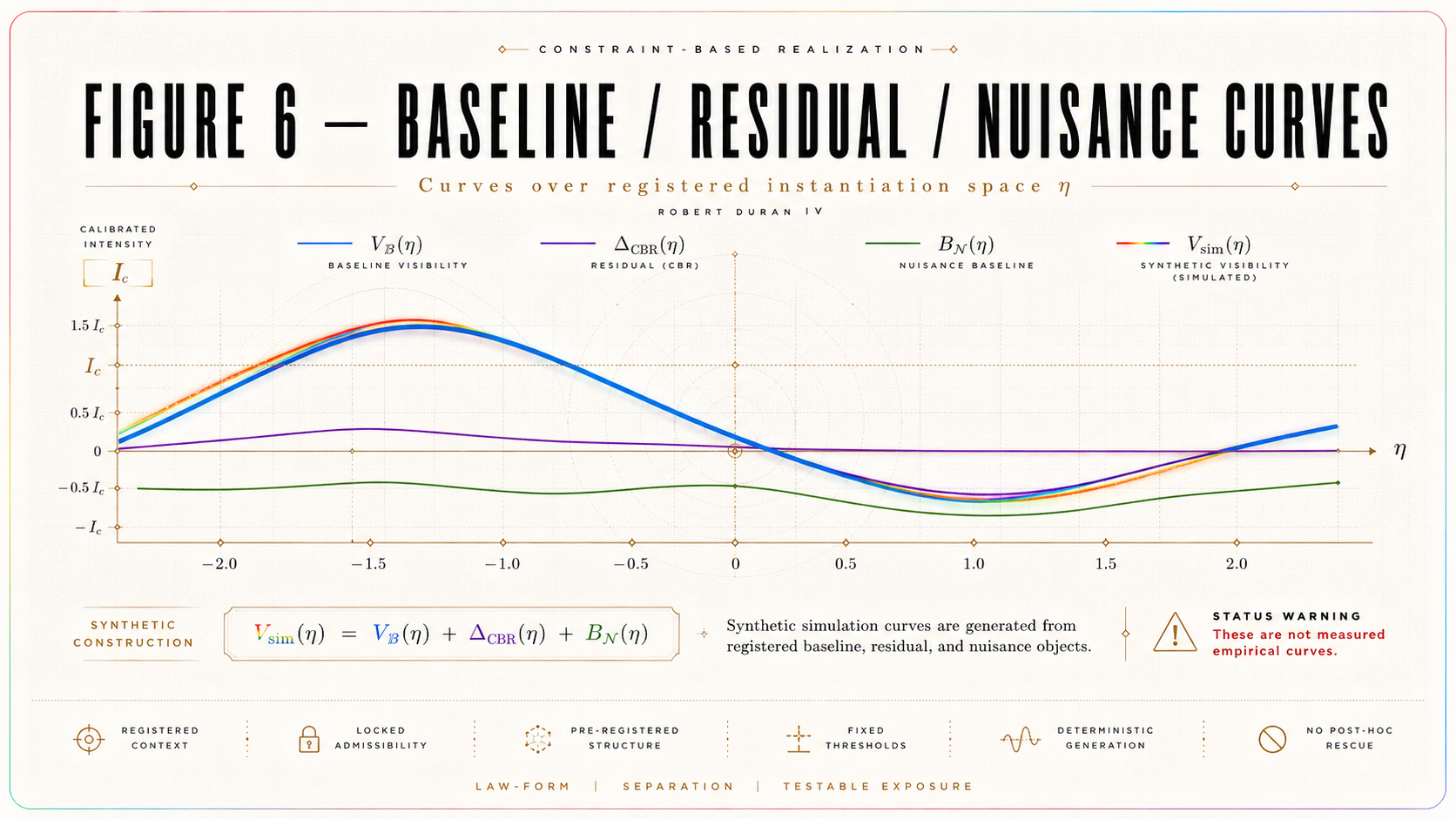

Constraint-Based Realization (CBR) treats quantum outcome realization as a distinct explanatory target from probability assignment, decoherent record formation, and ordinary measurement registration. A preceding platform-specific numerical instantiation fixed a locked C_RAI v0.1 dossier for a record-accessibility interferometric context, specifying an accessibility coordinate η, critical regime I_c, ordinary baseline V_ℬ(η), nuisance envelope B_𝓝(η), decision threshold Θ_c, synthetic residual family Δ_CBR(η), primary endpoint 𝒯_sup, degeneracy operator Deg_C, statistical rule A_stat, scenario certificates Scert, output-register requirements, and version-boundary rules. The present paper imports that dossier without revision and asks a strictly methodological question: whether the locked C_RAI v0.1 machinery behaves coherently when executed as a synthetic decision procedure.

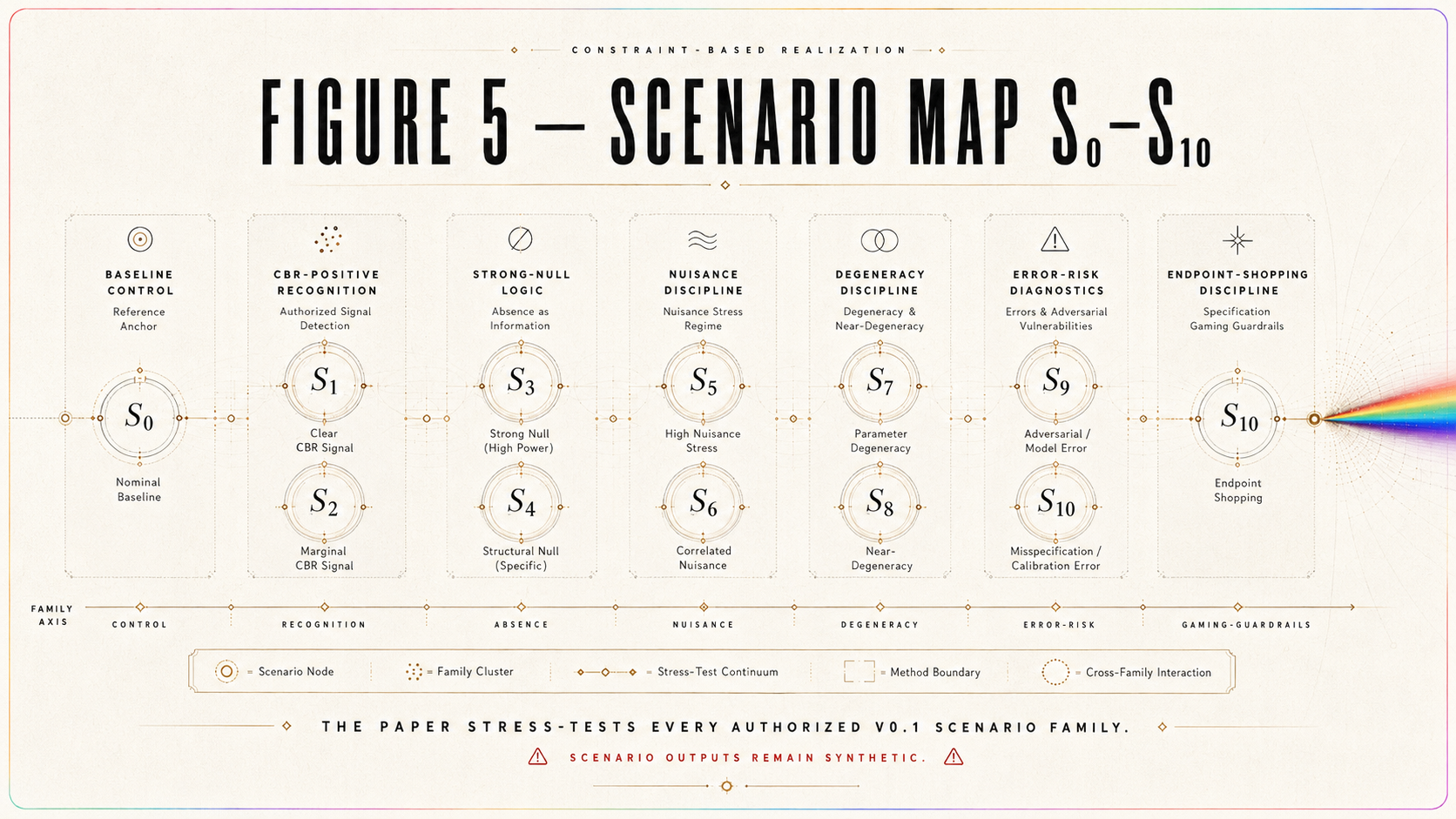

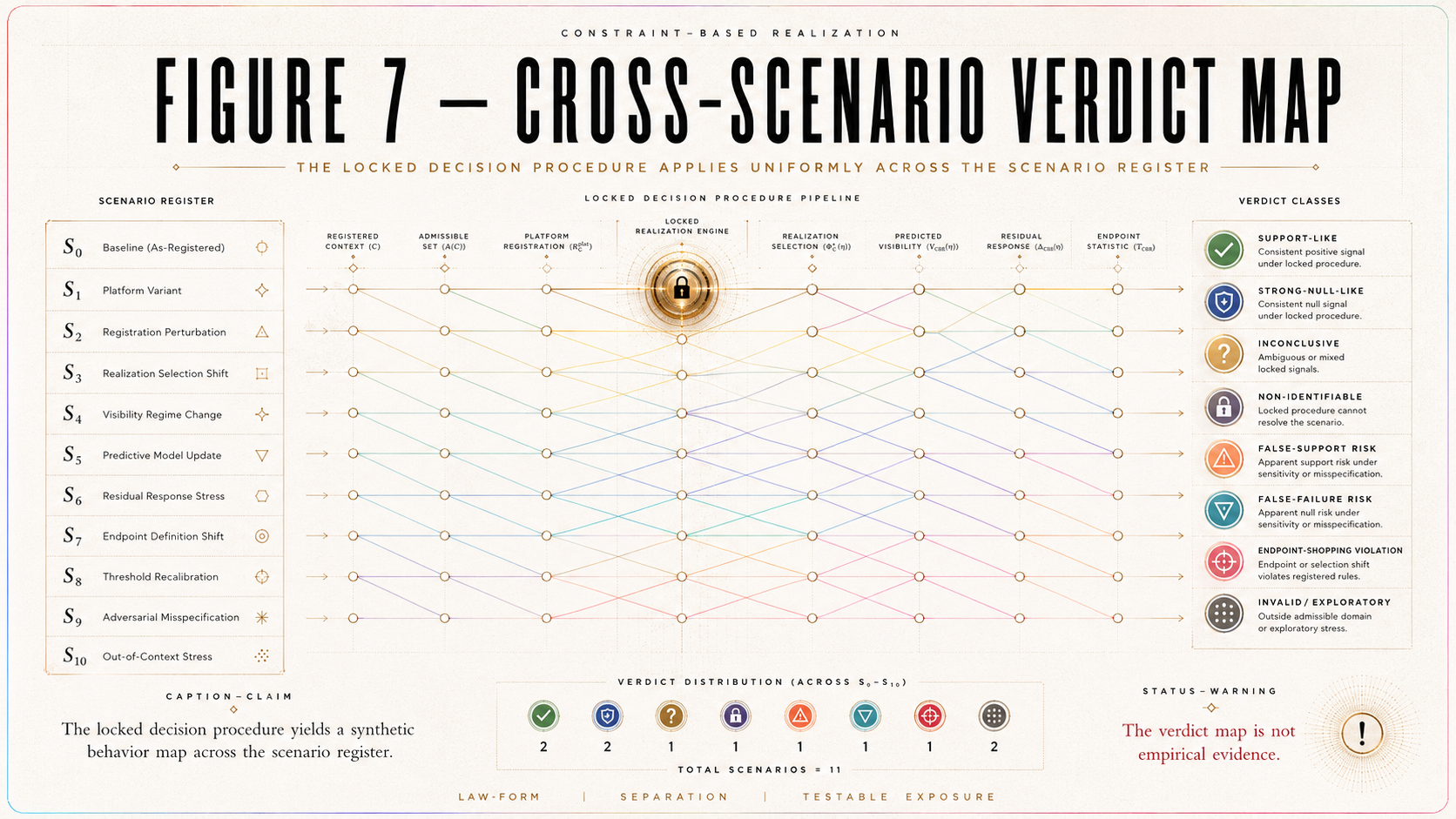

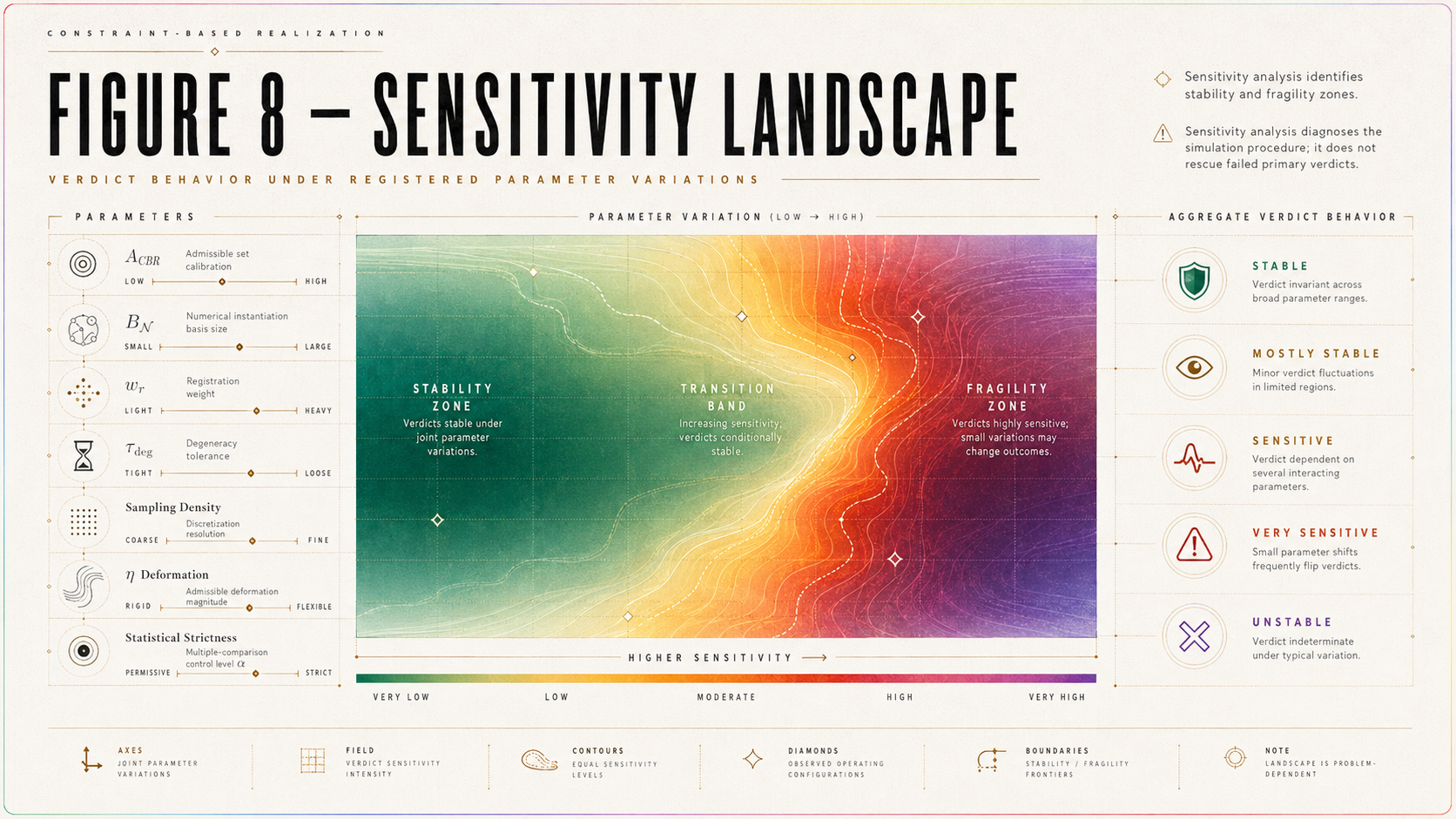

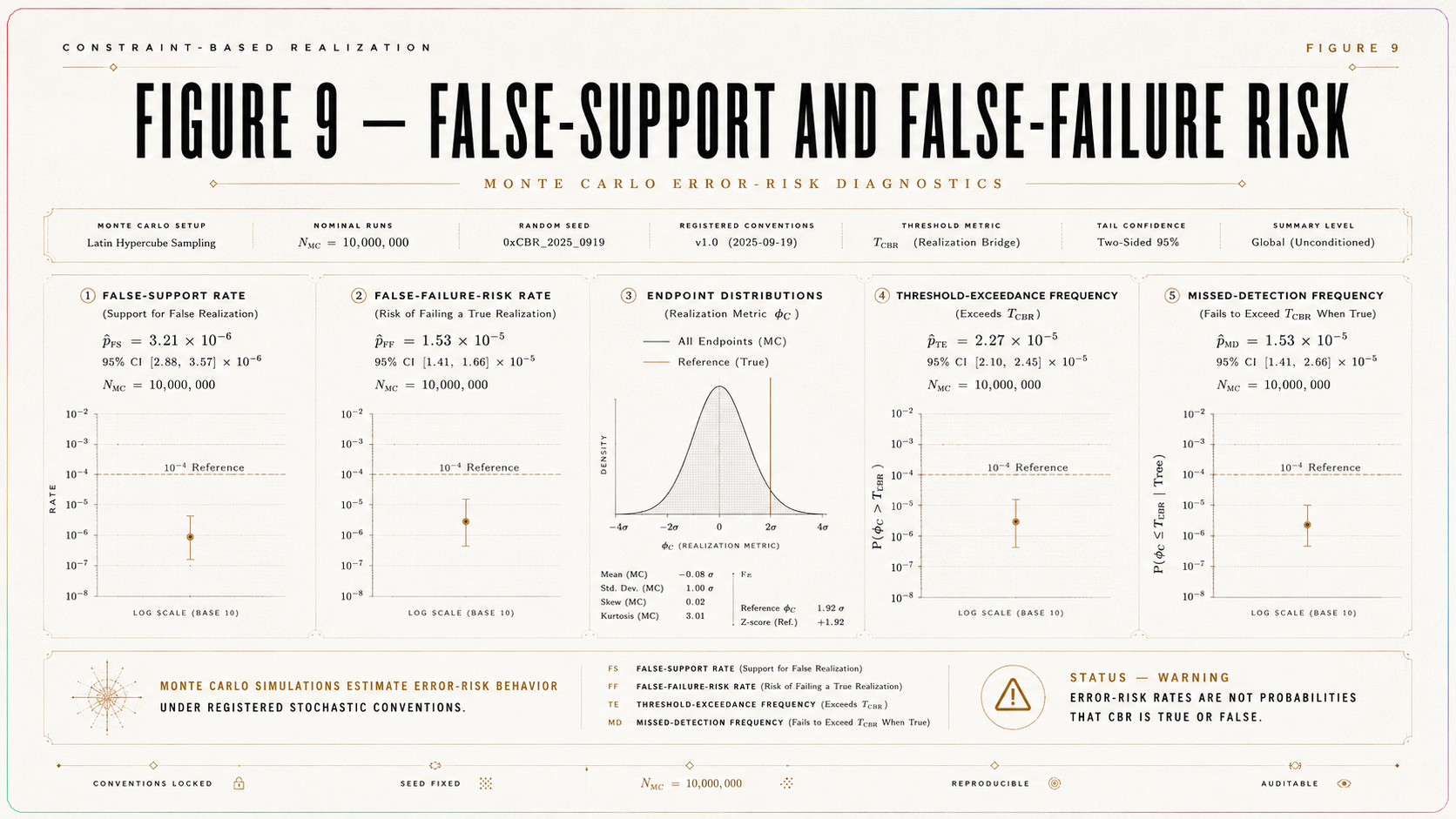

The paper develops a complete simulation stress-test across authorized scenarios S₀–S₁₀: baseline-only control, detectable CBR-positive recognition, below-threshold inconclusiveness, strong-null logic, wide-nuisance inconclusiveness, baseline degeneracy, η-miscalibration, sampling degeneracy, false-support risk, false-failure risk, and endpoint-shopping discipline. Each scenario is evaluated through registered endpoint computation, threshold comparison, degeneracy certification, validity gates, scenario certificates, output-register traceability, and version-status classification. Deterministic simulations test rule behavior under controlled constructions, while Monte Carlo simulations estimate false-support and false-failure vulnerabilities under registered stochastic conventions. Sensitivity analyses then examine amplitude, residual width, sign, nuisance width, degeneracy tolerance, sampling density, η-axis deformation, and statistical strictness.

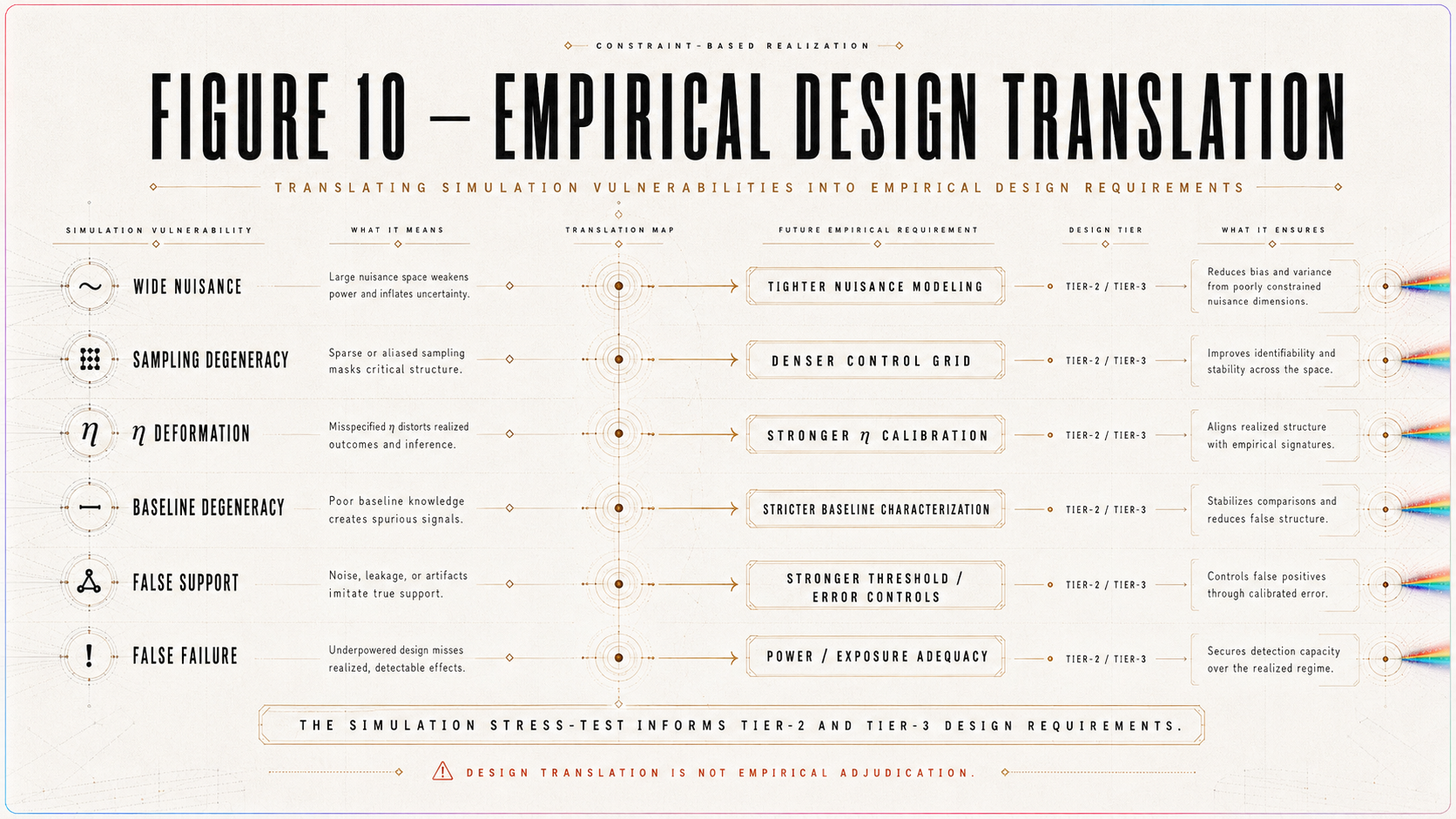

The central result is procedural rather than empirical: C_RAI v0.1 is shown to be not merely a locked registry, but an executable decision procedure whose synthetic behavior can be simulated, audited, stress-tested, bounded, and translated into empirical-design requirements. The paper establishes a complete behavior map for support-like, strong-null-like, inconclusive, non-identifiable, false-support-risk, false-failure-risk, and endpoint-shopping classifications within the registered simulation regime. It does not establish that CBR is true, empirically supported, empirically failed, directly observed, or that ordinary quantum theory is false. Its contribution is instead to expose the decision machinery to controlled failure modes and to specify what future Tier-2 author-data reconstruction or Tier-3 locked experimentation would require: calibrated η, fixed I_c, validated baseline and nuisance models, adequate sampling, degeneracy exclusions, threshold margins, uncertainty propagation, a pre-registered endpoint hierarchy, and fixed verdict rules.

1. Introduction

1.1 Role of This Paper

The preceding paper, A Platform-Specific Numerical Instantiation of Constraint-Based Realization, constructed a locked, simulation-ready, pilot-data-grounded dossier for a record-accessibility interferometric context:

C_RAI.

That dossier fixed the platform context, accessibility register, ordinary baseline model, nuisance envelope, decision threshold, residual family, endpoint functional, degeneracy operator, statistical rule, scenario register, simulation export register, and version boundary. It also demonstrated pilot public-data contact through a Kim–Ham endpoint reconstruction while preserving the strict status boundary that the dossier is not empirically adjudicated.

The present paper is the next step.

It does not revise the C_RAI v0.1 dossier.

It does not complete missing objects.

It does not repair the platform instantiation after seeing simulation behavior.

It does not introduce a new empirical claim.

It imports the locked dossier and stress-tests the registered decision procedure across the authorized simulation scenarios S₀–S₁₀.

In this sense, the relationship between the two papers is direct: “A Platform-Specific Numerical Instantiation of Constraint-Based Realization | A Simulation-Ready and Public-Data Pilot C_RAI Dossier for Record-Accessibility Interferometry” builds the locked C_RAI v0.1 dossier.

This paper tests how the locked dossier behaves.

The object of simulation is therefore not “CBR in general.” It is the registered C_RAI v0.1 decision machinery: its endpoint functional, threshold rule, degeneracy operator, statistical rule, validity gates, scenario certificates, output register, and verdict categories.

1.2 Central Question

The central question of this paper is not: Is Constraint-Based Realization true?

Nor is it: Has CBR been empirically confirmed?

Nor is it: Has ordinary quantum theory failed?

The central question is narrower and more exact: If the C_RAI v0.1 dossier is held fixed exactly as registered, how does its endpoint-threshold-degeneracy-statistical verdict machinery behave across the full authorized simulation space?

This question is methodological rather than evidential. It asks whether the registered decision procedure behaves coherently under baseline-only, CBR-positive, strong-null, inconclusive, non-identifiable, false-support, false-failure, and endpoint-shopping regimes.

A coherent decision procedure should not call every residual support. It should not fail a model when the predicted residual is below threshold. It should not issue support when ordinary baseline variation or nuisance can reproduce the residual. It should not allow a secondary endpoint to rescue a failed primary endpoint. It should classify false-support and false-failure risks as diagnostic properties of the decision procedure rather than as evidence for or against CBR in nature.

1.3 Status of the Paper

This paper is: synthetic, simulation-based, decision-procedure focused, version-bounded, non-empirical, non-confirmatory, non-falsifying, and non-revisionary with respect to the imported dossier.

The paper may produce support-like simulation outcomes. Such outcomes are not empirical support.

The paper may produce strong-null-like simulation outcomes. Such outcomes are not empirical failure.

The paper may produce false-support and false-failure rates. Such rates describe vulnerabilities of the registered decision procedure under synthetic conditions. They do not adjudicate the physical law candidate.

The status of this paper is therefore: simulation stress-test, not empirical adjudication.

1.4 Main Contribution

The main contribution of this paper is a complete synthetic behavior map of the locked C_RAI v0.1 decision procedure.

The paper contributes:

a simulation engine for the imported C_RAI v0.1 dossier,

a pre/post simulation separation rule,

a worked central demonstration case,

a baseline-only demonstration variant,

a simulation output register,

a reproducibility and code register,

a deterministic-versus-Monte Carlo simulation distinction,

scenario certificates Scert(S_i),

degeneracy certificates Dcert(Δ_CBR),

cross-scenario verdict mapping,

sensitivity analysis,

false-support diagnostics,

false-failure diagnostics,

and empirical-design lessons for Tier-2 author-data reconstruction and Tier-3 locked experimentation.

The paper’s achievement is not that it finds a CBR effect. Its achievement is that it tests whether the registered machinery can classify synthetic outcomes without changing the rules after seeing the result.

1.5 Simulation Discipline

A simulation paper in the CBR program must satisfy a stricter standard than merely producing curves.

It must identify what is imported, what is fixed, what is varied, what is computed, what is certified, what is expected before simulation, what is produced after simulation, and what status the result carries.

A simulated residual is not automatically support-like.

A threshold exceedance is not automatically support-like.

A non-observation is not automatically failure-like.

A diagnostic secondary endpoint is not a replacement for the registered primary endpoint.

A scenario outside the authorized register is not silently part of v0.1.

These constraints are not rhetorical. They are the conditions under which a simulation becomes auditable.

1.6 Transition

The first step is to declare the imported dossier and state the no-new-primary-objects rule.

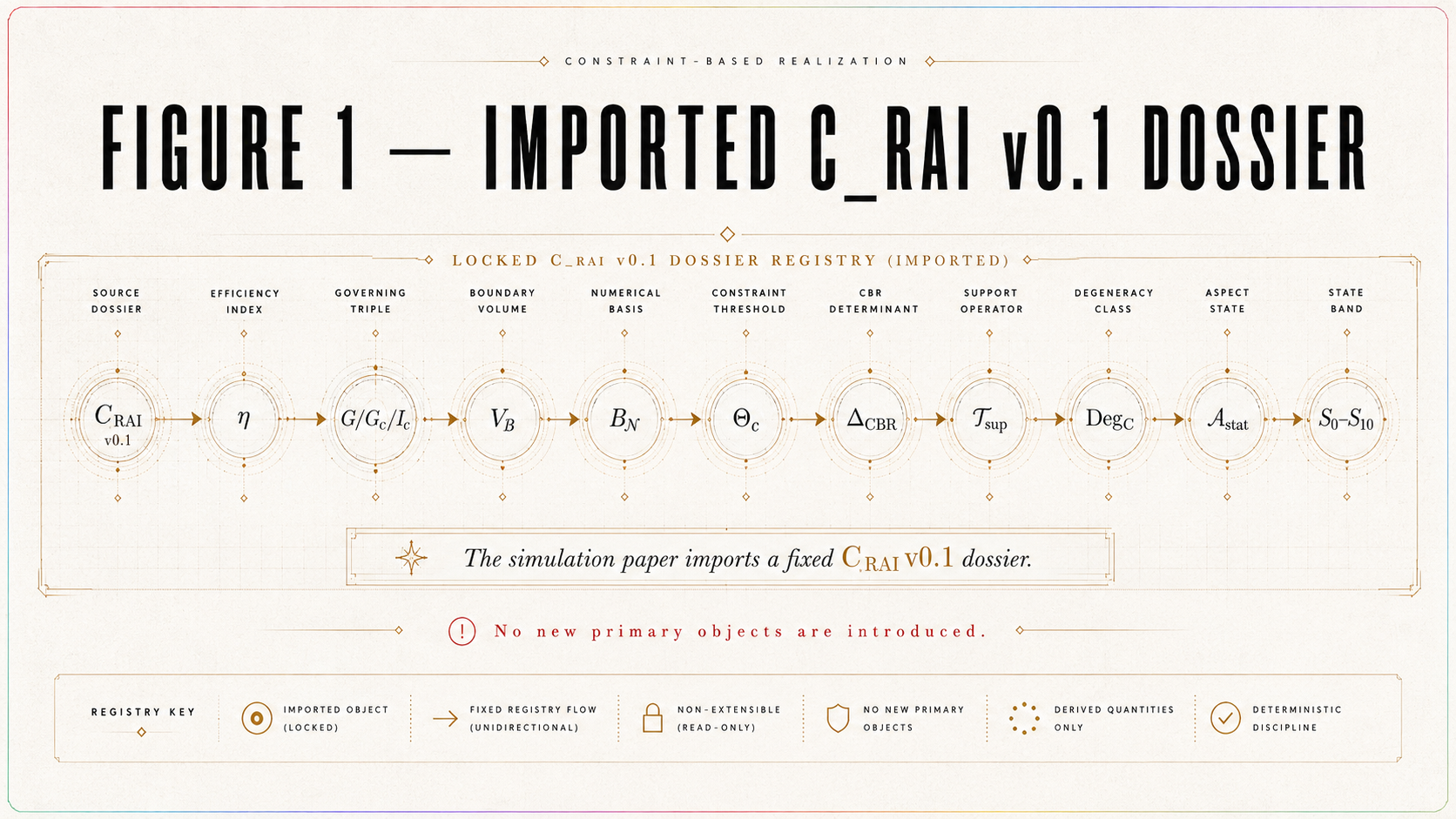

2. Imported C_RAI v0.1 Dossier

2.1 Import Declaration

This paper imports the locked C_RAI v0.1 dossier from the platform-specific numerical instantiation.

The imported platform context is:

C_RAI = record-accessibility interferometric context.

The imported object set includes:

C_RAI,

η,

G,

G_c,

I_c,

V_ℬ(η; θ_ℬ),

𝔅,

Θ_ℬ,

B_𝓝(η),

B_c,

ε_detect,

Θ_c,

Δ_CBR(η),

T_CBR,

𝒯_sup,

T_c^sim,

Deg_C,

τ_deg,

Dcert,

A_stat,

Scert,

validity gates,

provenance labels,

version-boundary rules,

and authorized scenario classes S₀–S₁₀.

These objects constitute the simulation registry. The present paper may vary registered parameters within authorized scenario ranges, but it may not introduce new primary objects while still claiming to simulate C_RAI v0.1.

2.2 Imported Accessibility Register

The imported accessibility variable is:

η ∈ [0,1].

The imported grid is:

G = {η_j = j/(N_η − 1) : j = 0, …, N_η − 1}.

The default grid size is:

N_η = 101.

The imported critical accessibility regime is:

I_c = [0.4, 0.6].

The grid-restricted critical region is:

G_c = G ∩ I_c.

The accessibility register is simulation-registered. It is not empirically calibrated. The simulations in this paper therefore test decision-procedure behavior on a declared synthetic accessibility axis, not a measured accessibility variable.

2.3 Imported Baseline Register

The ordinary baseline class is:

𝔅 = {V_ℬ(η; θ_ℬ) : θ_ℬ ∈ Θ_ℬ}.

The imported synthetic baseline form is:

V_ℬ(η; θ_ℬ) = V₀(1 − qη)exp(−κη)(1 − ρη) + λ₀ + λ₁η.

The central baseline parameter values are:

V₀ = 0.90,

q = 0.10,

κ = 0.20,

ρ = 0.03,

λ₀ = 0,

λ₁ = 0.

The registered baseline parameter ranges are:

V₀ ∈ [0.75, 1.00],

q ∈ [0, 0.30],

κ ∈ [0, 0.50],

ρ ∈ [0, 0.10],

λ₀ ∈ [−0.01, 0.01],

λ₁ ∈ [−0.02, 0.02].

All baseline simulations must satisfy the physical visibility constraint:

0 ≤ V_ℬ(η; θ_ℬ) ≤ 1

for all registered grid values, up to explicitly declared numerical tolerance.

2.4 Imported Nuisance and Threshold Register

The imported nuisance regimes are:

B_𝓝^narrow = 0.0075,

B_𝓝^moderate = 0.0175,

B_𝓝^wide = 0.0375.

The central nuisance case is:

B_𝓝 = B_𝓝^moderate = 0.0175.

The critical nuisance bound is:

B_c = sup_{η ∈ I_c} B_𝓝(η).

On the grid:

B_c^G = max_{η_j ∈ G_c} B_𝓝(η_j).

The detectability threshold is:

ε_detect = z_detect σ_T.

The decision threshold is:

Θ_c = B_c + ε_detect.

The central simulations use:

z_detect = 2.

Conservative stress tests may use:

z_detect = 3.

For symbolic demonstrations, Θ_c may remain symbolic and residual amplitudes may be expressed as multiples of Θ_c. For numerical runs, σ_T, ε_detect, and Θ_c must be instantiated and reported in the output register.

The threshold is therefore computed from registered nuisance and detectability objects. It is not chosen after observing simulated endpoints.

2.5 Imported Residual Register

The imported residual family is:

Δ_CBR(η) = A_CBR s exp[−(η − η_c)²/(2w_r²)].

The imported central location is:

η_c = 0.5.

The registered width family is:

w_r ∈ {0.025, 0.05, 0.10}.

The registered sign family is:

s ∈ {+1, −1}.

The registered amplitude family is:

A_CBR ∈ {0, 0.5Θ_c, Θ_c, 1.5Θ_c, 2Θ_c, 3Θ_c}.

The residual family is simulation-registered. It is not an empirical discovery. It defines the synthetic CBR-side residual used to test the decision procedure.

2.6 Imported Endpoint Register

The imported primary endpoint functional is:

𝒯_sup[x(η), η ∈ I_c] = sup_{η ∈ I_c}|x(η)|.

On the grid:

𝒯_sup^G[x] = max_{η_j ∈ G_c}|x(η_j)|.

The predicted endpoint is:

T_CBR = 𝒯_sup[Δ_CBR(η), η ∈ I_c].

The simulated observed endpoint is:

T_c^sim = 𝒯_sup[V_obs^sim(η) − V_ℬ(η), η ∈ I_c].

All endpoint quantities are expressed in visibility units.

2.7 Imported Degeneracy and Statistical Register

The imported degeneracy operator is:

Deg_C = Deg_𝔅 ∪ Deg_𝓝 ∪ Deg_η ∪ Deg_est ∪ Deg_post ∪ Deg_phase ∪ Deg_samp ∪ Deg_stat ∪ Deg_end.

The imported degeneracy tolerance is:

τ_deg.

The imported degeneracy certificate is:

Dcert(Δ_CBR).

Possible Dcert statuses are:

non-degenerate,

degenerate,

not evaluable,

requires future testing.

The imported statistical adjudication rule is:

A_stat.

In v0.1, the primary convention is the envelope-threshold rule using Θ_c. More sophisticated uncertainty objects may be named as part of the registry, but they do not override the envelope-threshold convention unless a successor statistical version is declared.

2.8 No-New-Primary-Objects Rule

This paper may not introduce a new: platform context, accessibility variable, critical regime, baseline class, nuisance envelope, threshold rule, endpoint functional, residual morphology, degeneracy operator, statistical rule, support rule, failure rule, or verdict class.

If a simulation requires any of these, it is no longer a C_RAI v0.1 simulation. It is an exploratory variation or a successor dossier version.

2.9 Import Lock Principle

Principle 2.1 — Import Lock.

This paper tests the imported C_RAI v0.1 dossier. It does not complete, repair, rescue, or revise it. Any new primary object creates a successor dossier version.

2.10 Export-to-Import Continuity

The simulation paper begins from the exact object package exported by the platform-specific numerical instantiation. The imported object set functions as the registry boundary for every simulation run.

A run remains inside v0.1 only if it uses the imported objects or registered scenario variations. A run that changes primary objects must be labeled as exploratory, outside v0.1, or a successor version.

2.11 Transition

With the imported object set fixed, the paper distinguishes expected scenario commitments from post-simulation results.

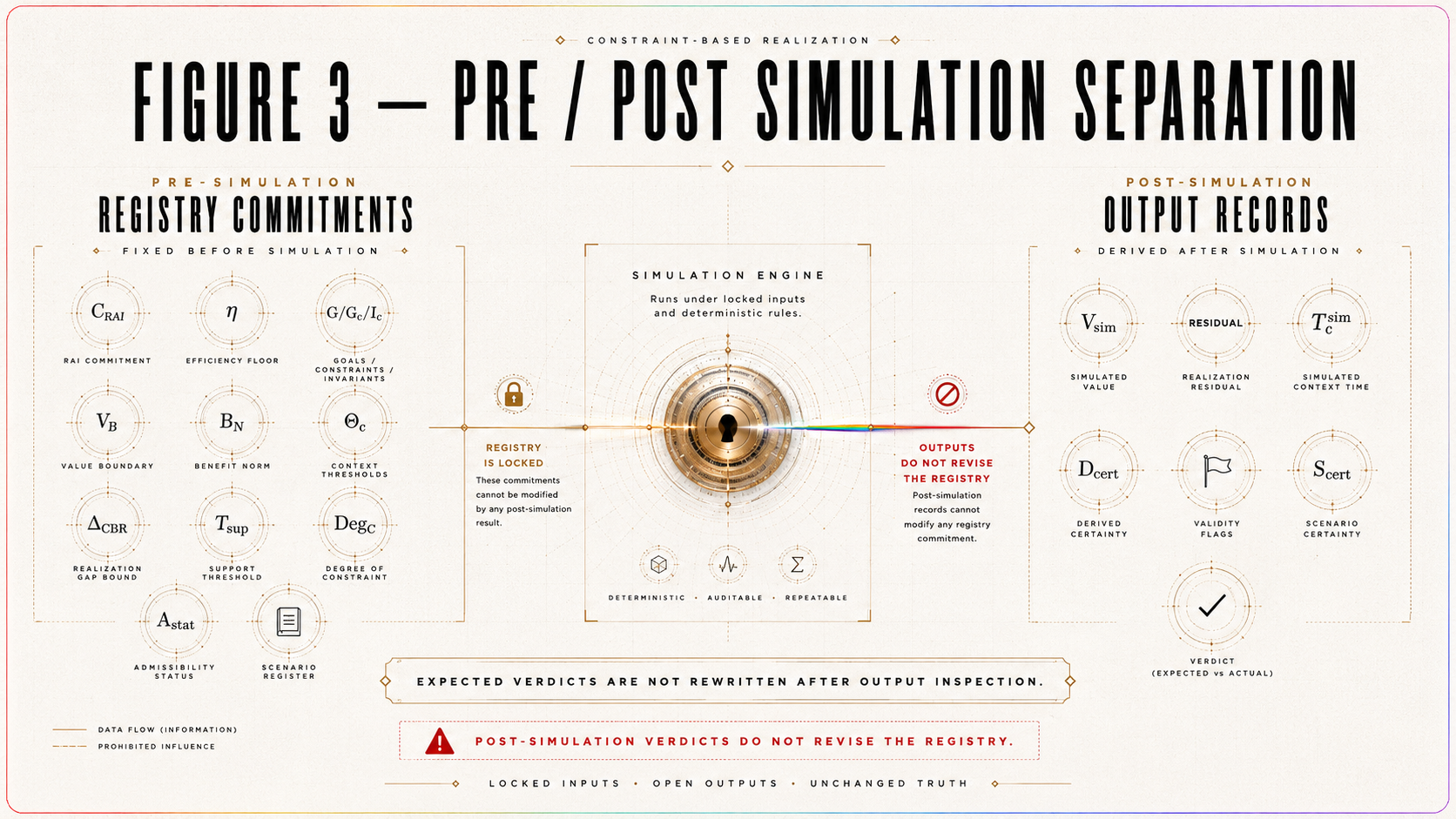

3. Pre-Simulation Registry and Post-Simulation Results

3.1 Purpose

A simulation study can fail by moving its interpretive target after seeing output. In the present context, that would mean changing the expected verdict, threshold rule, degeneracy rule, endpoint convention, scenario definition, or validity gate after observing a simulated result.

This section prevents that error.

The paper distinguishes: pre-simulation registry commitments from post-simulation results.

Expected verdicts belong to the registry.

Post-simulation verdicts belong to the simulation output.

The paper may compare them, but it may not rewrite the registry after observing the output.

3.2 Pre-Simulation Registry Commitments

Before running any simulation, the paper must declare:

scenario ID,

fixed objects,

varied objects,

variation ranges,

provenance labels,

expected verdict class,

validity gates,

degeneracy rule,

threshold convention,

randomness convention,

run count,

and version status.

These commitments determine what the simulation is testing.

For example, if S₁ is declared as a detectable CBR-positive scenario with T_CBR > Θ_c and Δ_CBR ∉ Deg_C, then the expected verdict is support-like if validity gates pass. If the simulation output fails to produce support-like classification, that result must be reported as decision-procedure behavior, validity failure, or parameter sensitivity. The paper may not redefine S₁ after the fact.

3.3 Post-Simulation Results

After running simulations, the paper reports:

generated baseline,

generated nuisance,

generated residual,

computed V_obs^sim,

computed T_CBR,

computed T_c^sim,

computed B_c,

computed ε_detect,

computed Θ_c,

computed Dcert,

computed Scert,

validity-gate result,

post-simulation verdict,

false-support status if applicable,

false-failure status if applicable,

endpoint-shopping status if applicable,

and version status.

The post-simulation verdict may match or deviate from the expected verdict. A mismatch is not hidden. It is a result.

3.4 Expected Verdicts Versus Post-Simulation Verdicts

The expected verdict is a registry prediction about how the decision procedure should classify a scenario under ideal satisfaction of its defining conditions.

The post-simulation verdict is the result produced by the actual simulation run after endpoint computation, threshold comparison, degeneracy evaluation, and validity-gate assessment.

For example:

A baseline-only scenario S₀ is expected to produce no-support. If stochastic nuisance produces T_c^sim > Θ_c, the post-simulation classification is false-support risk. The expected verdict is not rewritten.

A strong-null scenario S₃ is expected to produce strong-null-like classification when the prediction is detectable, non-degenerate, and absent from valid simulated observation. If the run fails power or validity gates, the post-simulation classification becomes inconclusive or false-failure risk. The expected strong-null logic is not rewritten.

An endpoint-shopping scenario S₁₀ is expected to reject rescue by secondary endpoints. If a secondary morphology statistic appears favorable while 𝒯_sup does not pass the registered rule, the post-simulation classification remains no-support under the primary endpoint.

3.5 Pre/Post Separation Principle

Principle 3.1 — Pre/Post Simulation Separation.

Expected verdicts are registry commitments. Post-simulation verdicts are simulation outputs. The paper may not rewrite expected verdicts, scenario definitions, thresholds, degeneracy rules, endpoint conventions, or validity gates after observing simulation behavior.

3.6 Consequence for Paper Structure

The paper must therefore report each scenario in two layers.

First, it states the registered scenario structure and expected verdict.

Second, it reports the actual output and post-simulation classification.

This structure preserves the no-rescue discipline of the CBR program in simulation form.

3.7 Transition

With pre/post discipline fixed, the simulation engine can be defined.

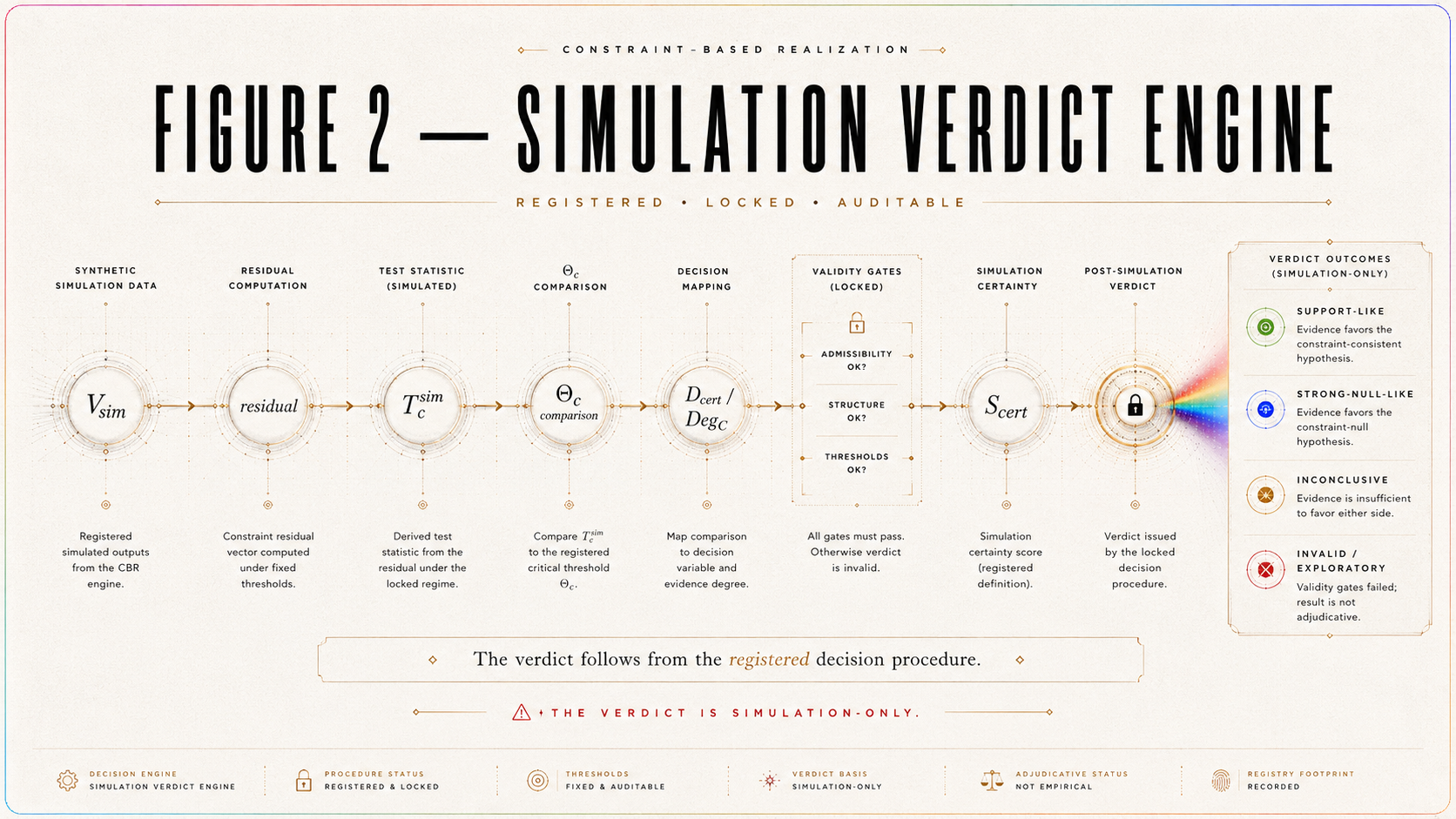

4. Simulation Method and Verdict Engine

4.1 Purpose

This section defines the simulation method used throughout the paper. It specifies how baseline curves, nuisance terms, residuals, simulated observations, endpoints, thresholds, certificates, validity gates, and verdicts are generated from the imported C_RAI v0.1 dossier.

The method is deliberately constrained. It does not optimize the model after output inspection. It does not fit the residual to a desired verdict. It does not change the endpoint. It does not widen or narrow the nuisance envelope after seeing results. It implements the imported dossier.

4.2 Simulation Grid

The simulation uses the imported accessibility grid:

η ∈ [0,1]

with:

N_η = 101

and:

G = {η_j = j/(N_η − 1) : j = 0, …, N_η − 1}.

The critical regime is:

I_c = [0.4, 0.6].

The grid-restricted critical regime is:

G_c = G ∩ I_c.

All primary endpoint computations are performed on G_c unless a registered sensitivity run changes grid density.

4.3 Baseline Generation

The synthetic ordinary baseline is:

V_ℬ(η; θ_ℬ) = V₀(1 − qη)exp(−κη)(1 − ρη) + λ₀ + λ₁η.

The central parameter vector is:

θ_ℬ^0 = (0.90, 0.10, 0.20, 0.03, 0, 0).

Thus:

V_ℬ^0(η) = 0.90(1 − 0.10η)exp(−0.20η)(1 − 0.03η).

This central baseline is simulation-registered. It is not an empirical measurement and is not fitted to public data.

All simulations must preserve physical visibility admissibility:

0 ≤ V_ℬ(η; θ_ℬ) ≤ 1

for all simulated grid points, up to registered numerical tolerance.

4.4 Nuisance Generation

The simulation uses registered nuisance regimes:

B_𝓝^narrow = 0.0075,

B_𝓝^moderate = 0.0175,

B_𝓝^wide = 0.0375.

The central case uses:

B_𝓝^moderate = 0.0175.

Synthetic nuisance is represented by δ_𝓝(η) satisfying the registered scenario conditions. Depending on the scenario, δ_𝓝(η) may be deterministic or stochastic.

For deterministic rule-behavior demonstrations, δ_𝓝(η) may be set to a controlled value, including zero, provided the run is labeled deterministic.

For Monte Carlo error-risk runs, δ_𝓝(η) is sampled according to a registered stochastic convention, with seeds recorded in the output register.

The nuisance term must not duplicate ordinary effects already included in V_ℬ unless a registered uncertainty decomposition is supplied.

4.5 Threshold Generation

For each run, compute the critical nuisance bound:

B_c = sup_{η ∈ I_c} B_𝓝(η).

On the grid:

B_c^G = max_{η_j ∈ G_c} B_𝓝(η_j).

The detectability threshold is:

ε_detect = z_detect σ_T.

The decision threshold is:

Θ_c = B_c + ε_detect.

Central simulations use:

z_detect = 2.

Conservative stress tests use:

z_detect = 3.

For symbolic demonstrations, Θ_c may remain symbolic and amplitudes may be expressed as multiples of Θ_c. For numerical runs, σ_T, ε_detect, and Θ_c must be instantiated and reported in the output register.

If σ_T is instantiated numerically in a scenario, the instantiation must be recorded. If σ_T remains symbolic or scenario-defined, the run must report Θ_c under that scenario convention.

4.6 Residual Generation

The synthetic CBR residual is:

Δ_CBR(η) = A_CBR s exp[−(η − η_c)²/(2w_r²)].

The central location is:

η_c = 0.5.

Registered widths are:

w_r ∈ {0.025, 0.05, 0.10}.

Registered signs are:

s ∈ {+1, −1}.

Registered amplitudes are:

A_CBR ∈ {0, 0.5Θ_c, Θ_c, 1.5Θ_c, 2Θ_c, 3Θ_c}.

Because the Gaussian peak lies inside I_c, the predicted endpoint under 𝒯_sup is:

T_CBR = |A_CBR|

for the normalized central residual.

This residual is synthetic and simulation-registered. It is not claimed to exist in nature.

4.7 Simulated Visibility

The simulated visibility is:

V_sim(η) = V_ℬ(η) + Δ_CBR(η) + δ_𝓝(η).

This expression creates the simulation-side quantity from the ordinary baseline, the registered synthetic CBR residual, and the registered nuisance structure.

Because this paper is not an empirical data analysis, the term V_sim is preferred for the generated simulation curve. The endpoint formerly denoted T_c^sim remains the simulated endpoint computed from the simulated residual relative to baseline.

If V_sim(η) exits the physical visibility interval [0,1], the run must be handled under a registered rule. For default v0.1 runs, invalidation is preferred over post hoc clipping unless clipping is explicitly part of the pre-simulation registry. No clipping rule may be introduced after observing the run.

4.8 Endpoint Computation

The primary endpoint functional is:

𝒯_sup[x(η), η ∈ I_c] = sup_{η ∈ I_c}|x(η)|.

On the grid:

𝒯_sup^G[x] = max_{η_j ∈ G_c}|x(η_j)|.

Compute the predicted endpoint:

T_CBR = 𝒯_sup[Δ_CBR(η), η ∈ I_c].

Compute the simulated endpoint:

T_c^sim = 𝒯_sup[V_sim(η) − V_ℬ(η), η ∈ I_c].

All endpoint comparisons must be made in visibility units.

4.9 Degeneracy Certificate

For each scenario, compute or assign:

Dcert(Δ_CBR).

The possible statuses are:

non-degenerate,

degenerate,

not evaluable,

requires future testing.

Support-like and strong-null-like classifications require:

Dcert(Δ_CBR) = non-degenerate.

If the residual is baseline-degenerate, nuisance-degenerate, η-degenerate, sampling-degenerate, statistically degenerate, or endpoint-degenerate, the verdict must be downgraded to non-identifiable or inconclusive according to the registered rule.

4.10 Scenario Certificate

Each run receives:

Scert(S_i).

The scenario certificate records:

scenario ID,

fixed objects,

varied objects,

variation range,

provenance labels,

expected verdict,

post-simulation verdict,

validity-gate status,

degeneracy status,

output-register status,

and version status.

A simulation run without Scert(S_i) is not part of the registered v0.1 stress-test.

4.11 Validity Gates

A scenario must pass the following validity gates before a support-like or strong-null-like verdict is available:

endpoint congruence,

sampling adequacy,

baseline admissibility,

nuisance non-duplication,

threshold computability,

degeneracy evaluability,

registered statistical rule,

provenance consistency,

and version consistency.

If any validity gate fails, the run is inconclusive, invalid, exploratory, or non-identifiable. It cannot be counted as support-like or strong-null-like.

4.12 Verdict Engine

The verdict engine classifies each run into one of the following statuses:

simulation-only support-like,

simulation-only strong-null-like,

simulation-only inconclusive,

simulation-only non-identifiable,

false-support risk,

false-failure risk,

endpoint-shopping violation,

invalid scenario,

or exploratory outside-v0.1 scenario.

The verdict engine is rule-governed. It does not interpret outcomes by narrative preference.

4.13 Threshold Equality Convention

The equality cases:

T_c^sim = Θ_c

and:

T_CBR = Θ_c

are treated conservatively.

Under v0.1:

T_c^sim = Θ_c is non-exceedance.

T_CBR = Θ_c is threshold-borderline and is not sufficient for strong-null failure.

Strict exceedance requires:

T_c^sim > Θ_c

or:

T_CBR > Θ_c,

depending on the verdict rule being evaluated.

4.14 No Unrecorded Numerical Claims

Any numerical result reported in the main text must be traceable to an output record.

Unrecorded runs may inform exploration, but they may not support a reported verdict, rate, sensitivity claim, or design recommendation.

Principle 4.1 — No Record, No Reported Verdict.

A simulation result may be discussed as part of the paper’s findings only if it is traceable to a registered output record.

4.15 Transition

Before entering the full scenario register, the paper gives one concrete worked demonstration case showing how the engine works.

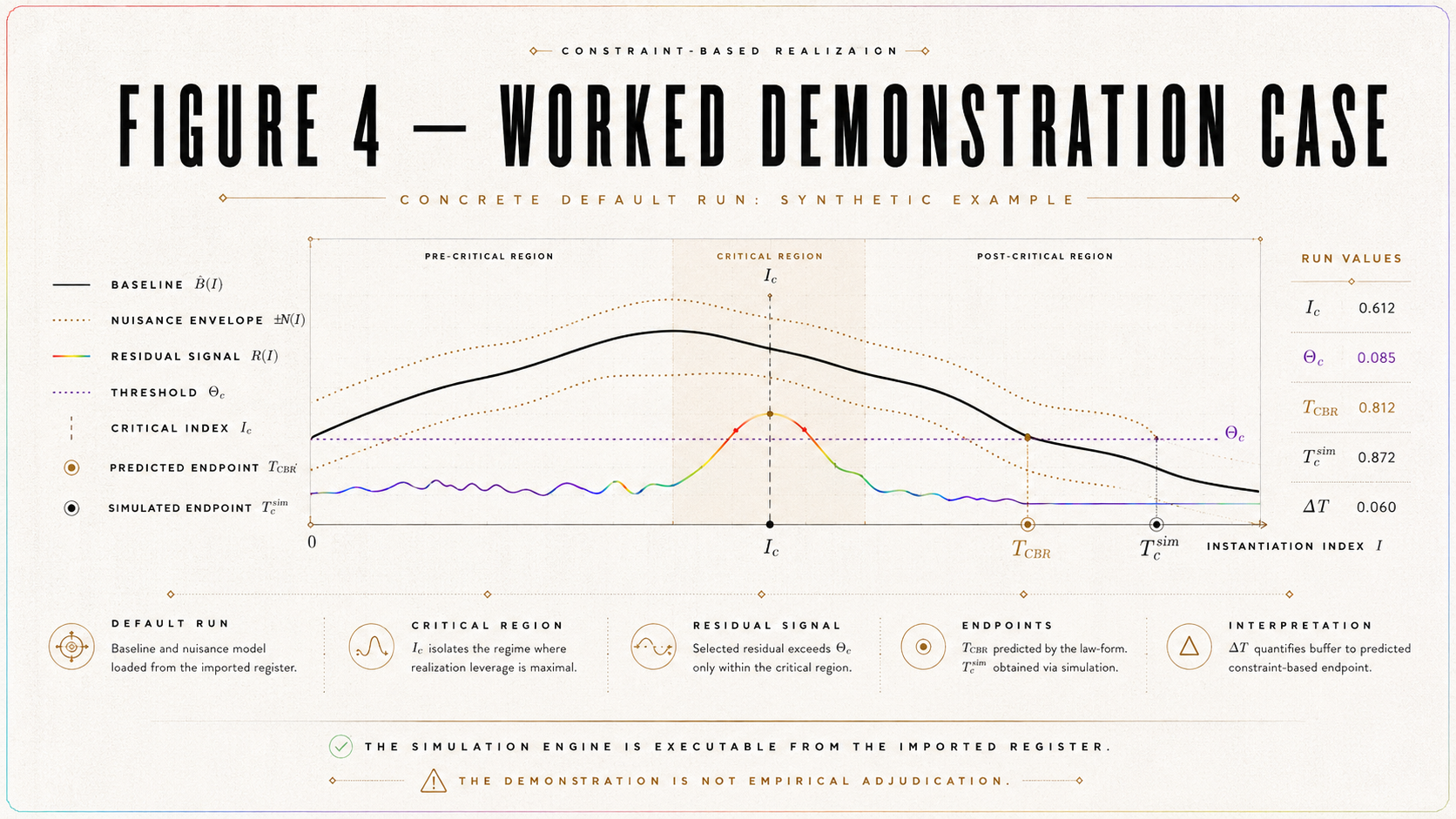

5. Worked Demonstration Case

5.1 Purpose

This section provides one complete worked example of the simulation engine before the paper enters the authorized scenario register.

The purpose is readability and auditability. A reader should see a single default run containing the central baseline, nuisance regime, residual setting, endpoint computation, threshold computation, output record, degeneracy certificate, scenario certificate, validity-gate result, and verdict classification.

This section does not introduce a new scenario class. It demonstrates the imported engine.

5.2 Demonstration Run D₀

Define the main demonstration run:

D₀ = central deterministic support-like demonstration.

This run uses the central imported values:

N_η = 101,

G = {0, 0.01, …, 1},

I_c = [0.4, 0.6],

V₀ = 0.90,

q = 0.10,

κ = 0.20,

ρ = 0.03,

λ₀ = 0,

λ₁ = 0,

B_𝓝 = B_𝓝^moderate = 0.0175,

η_c = 0.5,

w_r = 0.05,

s = +1.

For the demonstration residual, set:

A_CBR = 1.5Θ_c.

This places the demonstration in the detectable synthetic regime, provided physical visibility admissibility and validity gates pass.

For the deterministic demonstration, set:

δ_𝓝(η) = 0.

This means that the nuisance envelope is registered as the ordinary allowance, but the demonstration run uses no realized nuisance perturbation. This is a rule-behavior demonstration, not a Monte Carlo error-risk run.

5.3 Central Baseline

The central baseline is:

V_ℬ^0(η) = 0.90(1 − 0.10η)exp(−0.20η)(1 − 0.03η).

This curve is the ordinary comparator for D₀.

Its role is not to represent a measured laboratory apparatus. It is the registered central ordinary baseline inherited from C_RAI v0.1.

5.4 Demonstration Residual

The demonstration residual is:

Δ_CBR^D₀(η) = 1.5Θ_c exp[−(η − 0.5)²/(2 · 0.05²)].

Since the peak lies at η = 0.5 ∈ I_c, the predicted endpoint is:

T_CBR^D₀ = 1.5Θ_c.

This satisfies:

T_CBR^D₀ > Θ_c.

Therefore, the prediction side is detectable under the registered threshold convention.

5.5 Demonstration Simulated Visibility

With δ_𝓝(η) = 0, the simulated visibility is:

V_sim^D₀(η) = V_ℬ^0(η) + Δ_CBR^D₀(η).

The simulated residual relative to baseline is:

V_sim^D₀(η) − V_ℬ^0(η) = Δ_CBR^D₀(η).

Thus:

T_c^sim,D₀ = 𝒯_sup[Δ_CBR^D₀(η), η ∈ I_c].

Because the residual is normalized and peaked inside I_c:

T_c^sim,D₀ = 1.5Θ_c.

Therefore:

T_c^sim,D₀ > Θ_c.

5.6 Demonstration Certificates

The demonstration run receives a degeneracy certificate:

Dcert(Δ_CBR^D₀) = non-degenerate

provided the run is declared as a non-degenerate rule-behavior example and no baseline, nuisance, η, sampling, statistical, or endpoint degeneracy is activated.

The demonstration run receives a scenario certificate:

Scert(D₀) = valid central deterministic demonstration

provided all validity gates pass.

The validity gates for D₀ are:

endpoint congruence: pass,

sampling adequacy: pass for default N_η = 101 and w_r = 0.05,

baseline admissibility: pass if V_sim^D₀ ∈ [0,1],

nuisance non-duplication: pass,

threshold computability: pass,

degeneracy evaluability: pass by demonstration construction,

registered statistical rule: pass,

provenance consistency: pass,

version consistency: pass.

If physical admissibility fails for any numerical instantiation of Θ_c, the run must be marked invalid or re-expressed using a lower registered amplitude. No post hoc clipping is allowed unless pre-registered.

5.7 Demonstration Verdict

Under the deterministic assumptions above:

T_CBR^D₀ = 1.5Θ_c,

T_c^sim,D₀ = 1.5Θ_c,

T_c^sim,D₀ > Θ_c,

and:

Dcert(Δ_CBR^D₀) = non-degenerate.

If validity gates pass, the post-simulation verdict is:

simulation-only support-like demonstration.

This verdict is not empirical support. It shows that the decision engine recognizes a detectable, non-degenerate synthetic residual under a central deterministic construction.

5.8 Demonstration Output Record

The demonstration output record contains:

run ID: D₀,

construction label: central deterministic support-like demonstration,

scenario relation: demonstration linked to S₁,

baseline parameter vector: θ_ℬ^0 = (0.90, 0.10, 0.20, 0.03, 0, 0),

nuisance regime: B_𝓝^moderate = 0.0175,

realized nuisance: δ_𝓝(η) = 0,

residual amplitude: A_CBR = 1.5Θ_c,

residual width: w_r = 0.05,

residual sign: s = +1,

grid density: N_η = 101,

critical regime: I_c = [0.4, 0.6],

predicted endpoint: T_CBR = 1.5Θ_c,

simulated endpoint: T_c^sim = 1.5Θ_c,

decision threshold: Θ_c,

degeneracy certificate: non-degenerate,

scenario certificate: valid central deterministic demonstration,

expected verdict: support-like demonstration,

post-simulation verdict: simulation-only support-like demonstration,

version status: inside C_RAI v0.1.

5.9 Optional Baseline-Only Demonstration Variant D₀′

For completeness, the paper may include a minimal baseline-only demonstration variant:

D₀′ = central deterministic baseline-only demonstration.

Set:

A_CBR = 0,

and:

δ_𝓝(η) = 0.

Then:

Δ_CBR^D₀′(η) = 0,

V_sim^D₀′(η) = V_ℬ^0(η),

T_CBR^D₀′ = 0,

and:

T_c^sim,D₀′ = 0.

The expected verdict is:

simulation-only baseline / no-support.

The post-simulation verdict is:

simulation-only baseline / no-support

provided validity gates pass.

This optional variant gives the reader the simplest null case alongside the central support-like demonstration.

5.10 Demonstration Boundary

Neither D₀ nor D₀′ establishes support, failure, or empirical status.

They are worked examples of the decision procedure.

Principle 5.1 — Demonstration Non-Adjudication.

A worked demonstration case illustrates the simulation engine. It does not add a new scenario class, alter the imported register, or establish empirical support or failure.

5.11 Transition

With one full run displayed and a baseline-only variant defined, the paper defines the standardized output record required for every simulation.

6. Simulation Output Register

6.1 Purpose

The Simulation Output Register makes the simulation study reproducible and auditable.

Every run must produce a standardized output record. Without such a register, the paper would contain scenario descriptions but not a disciplined simulation study.

The output register links each verdict to the exact scenario, parameter state, endpoint computation, threshold computation, degeneracy certificate, validity-gate result, scenario certificate, and version status that produced it.

6.2 Required Output Fields

Every simulation run must output:

scenario ID S_i,

run ID,

random seed or deterministic construction label,

baseline parameter vector θ_ℬ,

nuisance regime,

nuisance realization label,

A_CBR,

w_r,

s,

τ_deg,

grid density,

η deformation if any,

T_CBR,

T_c^sim,

B_c,

ε_detect,

Θ_c,

Dcert,

Scert,

validity-gate status,

expected verdict,

post-simulation verdict,

false-support status if applicable,

false-failure status if applicable,

endpoint-shopping status if applicable,

and version status.

No simulation verdict is complete without these fields or a justified statement that a field is not applicable.

6.3 Scenario ID and Run ID

The scenario ID identifies the authorized scenario family:

S₀, S₁, …, S₁₀.

The run ID identifies the individual simulation run inside that scenario family.

A deterministic run may use labels such as:

S₁-D1,

S₃-D1,

or S₁₀-D1.

A Monte Carlo run may use labels such as:

S₀-MC-0001,

S₈-MC-0420,

or S₉-MC-1000.

The run ID must be unique.

6.4 Parameter State

Each output record must identify the parameter state used in the run.

At minimum, this includes:

baseline parameter vector θ_ℬ,

nuisance regime,

residual amplitude A_CBR,

residual width w_r,

residual sign s,

degeneracy tolerance τ_deg,

grid density,

and any η-axis deformation.

For baseline-degenerate, η-miscalibrated, sampling-degenerate, false-support, and false-failure scenarios, the output record must explicitly identify the deformation, degeneracy, or failure mechanism being tested.

6.5 Endpoint and Threshold Values

Each output record must report:

T_CBR,

T_c^sim,

B_c,

ε_detect,

and Θ_c.

These quantities must be expressed in the same endpoint units. If endpoint congruence fails, the run cannot receive support-like or strong-null-like classification.

6.6 Certificate Values

Each run must report:

Dcert(Δ_CBR)

and:

Scert(S_i).

The degeneracy certificate determines whether the residual is identifiable under the registered degeneracy rule.

The scenario certificate determines whether the run is a valid v0.1 scenario, a stress scenario, an exploratory variation, or a successor-version case.

6.7 Validity-Gate Status

Each run must report the status of the validity gates: endpoint congruence, sampling adequacy, baseline admissibility, nuisance non-duplication, threshold computability, degeneracy evaluability, registered statistical rule, provenance consistency, and version consistency.

Each gate should be marked as: pass, fail, not applicable, or not evaluable.

If any required gate fails, support-like and strong-null-like classifications are unavailable.

6.8 Expected and Post-Simulation Verdicts

Each output record must contain both: expected verdict, and post-simulation verdict.

The expected verdict is declared before simulation.

The post-simulation verdict is produced after endpoint computation, threshold comparison, degeneracy evaluation, and validity-gate assessment.

A mismatch between expected and post-simulation verdicts is a result. It must be reported and explained.

6.9 Error-Risk Fields

For false-support and false-failure scenarios, the output record must include error-risk fields.

For false-support runs, report: threshold-exceedance status, false-support flag, endpoint magnitude, nuisance regime,

and whether any degeneracy or validity failure explains the exceedance.

For false-failure runs, report: missed-detection status, false-failure flag, underpowering diagnosis, sampling diagnosis, nuisance-swallowing diagnosis, and validity-gate status.

6.10 Version Status

Each run must report one of the following version statuses:

inside C_RAI v0.1,

registered v0.1 stress scenario,

exploratory outside v0.1,

successor version required,

or invalid.

A run cannot silently change status after output inspection.

6.11 No Record, No Reported Claim

Any numerical result reported in the main text must be traceable to an output record.

Unrecorded runs may inform exploration, but they may not support a reported verdict, rate, sensitivity claim, or design recommendation.

Principle 6.1 — No Record, No Reported Claim.

A simulation result may be used as a reported finding only if it is traceable to a registered output record.

6.12 Output Register Principle

Principle 6.2 — Output Traceability.

Every simulation verdict must be traceable to a registered scenario, parameter state, endpoint computation, threshold computation, degeneracy certificate, validity-gate result, scenario certificate, expected verdict, post-simulation verdict, and version status.

6.13 Output Package Recommendation

The final paper should provide or describe an output package containing: scenario-level summary file, run-level output file,

parameter register file, verdict register file, figure-generation file, seed list or deterministic construction list, and version manifest.

If the package is archived, its identifier should be cited. If it is not archived, the paper must still specify enough structure for reproduction.

6.14 Transition

The output register is complemented by a reproducibility and code register.

7. Reproducibility and Code Register

7.1 Purpose

The reproducibility register defines how the simulation study can be repeated, audited, extended, or upgraded.

A simulation paper is not reproducible merely because it states formulas. It must preserve the complete path from imported dossier to post-simulation verdict: parameters, scenario definitions, seeds or deterministic construction labels, endpoint values, threshold values, degeneracy certificates, validity-gate outcomes, output records, and version status.

This section therefore specifies the minimum reproducibility standard for the C_RAI v0.1 simulation study.

The paper does not need to claim immediate public code release unless code is actually archived. However, it must state what a reproducible package contains and must avoid reporting any numerical claim that cannot be traced to a registered output record.

7.2 Reproducibility Objects

A complete reproducibility package contains the following objects:

version label,

imported dossier manifest,

simulation parameter file,

scenario definitions,

random seed list,

deterministic construction labels,

Monte Carlo run counts,

simulation script or pseudocode,

run-level output files,

scenario-level summary files,

figure-generation script,

environment notes,

archive or repository information,

and version manifest.

The version label identifies the simulation as a C_RAI v0.1 stress-test or as an exploratory/successor version.

The imported dossier manifest identifies every object inherited from the platform-specific numerical instantiation, including C_RAI, η, G, I_c, V_ℬ, B_𝓝, Θ_c, Δ_CBR, 𝒯_sup, Deg_C, A_stat, Scert, and S₀–S₁₀.

The simulation parameter file records all numerical and symbolic parameters used in each run.

The scenario definitions state the pre-simulation commitments for each scenario family.

The seed list and deterministic construction labels allow the run to be reproduced.

The output files preserve endpoint values, thresholds, certificates, validity-gate statuses, expected verdicts, post-simulation verdicts, and version statuses.

The figure-generation script ensures that figures are drawn from the registered outputs rather than manually reconstructed after the fact.

The version manifest states whether the run remains inside C_RAI v0.1, is a registered stress scenario, is exploratory outside v0.1, or requires a successor dossier.

7.3 Random Seed Discipline

For stochastic simulations, every run must record its seed.

For deterministic simulations, every run must record its construction label.

The distinction matters. A deterministic construction is reproducible because it is fully specified by its parameters and construction rule. A stochastic run is reproducible because its random seed, sampling convention, and parameter state are recorded.

No stochastic simulation may be reported without:

random seed,

nuisance sampling rule,

run count,

parameter state,

and output record.

No deterministic simulation may be reported without:

construction label,

parameter state,

deterministic nuisance convention,

endpoint values,

certificate values,

and output record.

A run without enough information to reproduce its endpoint and verdict may be exploratory, but it may not support a reported finding.

7.4 Monte Carlo Run-Count Discipline

For every stochastic simulation class, the Monte Carlo run count must be registered before simulation.

Denote the Monte Carlo run count by:

N_MC.

For any Monte Carlo scenario, N_MC must be reported with the seed list or seed-generation rule.

If multiple values of N_MC are explored, each value is treated as a separate sensitivity condition. The paper may not choose a preferred N_MC after observing the most favorable false-support, false-failure, or recognition-rate behavior.

Principle 7.1 — Monte Carlo Count Lock.

The Monte Carlo run count N_MC is part of the pre-simulation registry. Changing N_MC after observing error-risk behavior creates a sensitivity condition or successor run class, not a revised primary result.

7.5 Code Status

If code is archived, the archive must be cited.

If code is not archived at first submission, the paper should state:

The simulation procedure is specified by the imported v0.1 register, scenario definitions, output register, and algorithmic pseudocode. A code archive may be supplied in a successor version.

The paper must not claim code availability unless code is actually available.

If code is private, unavailable, or in preparation, the paper should say so plainly. It may still be reproducible at the level of algorithmic specification if the imported register, scenario definitions, pseudocode, parameter states, seeds, output schema, and figure-generation rules are supplied.

If numerical results are reported without a public code archive, the paper should still provide enough output tables, seeds, parameter states, and pseudocode for independent reconstruction of the reported verdicts.

A future code archive should include:

source code,

parameter files,

seed files,

output files,

figure scripts,

environment specification,

license statement,

and archive identifier.

7.6 Algorithmic Pseudocode Requirement

Even if code is not archived, the paper should provide algorithmic pseudocode sufficient to reproduce the simulation logic.

At minimum, the pseudocode must specify the following sequence:

import C_RAI v0.1 dossier,

select scenario S_i,

load fixed objects,

load varied objects,

instantiate parameter state,

generate V_ℬ(η),

generate δ_𝓝(η),

generate Δ_CBR(η),

compute V_sim(η),

compute T_CBR,

compute T_c^sim,

compute B_c, ε_detect, and Θ_c,

evaluate Dcert,

evaluate validity gates,

generate Scert,

assign expected verdict,

assign post-simulation verdict,

write output record,

and update scenario-level summary.

This pseudocode ensures that the simulation paper remains auditable even before a public software archive exists.

7.7 Reproducibility Boundary

Reproducibility does not upgrade the evidential status of the paper.

A perfectly reproducible simulation remains a simulation. It does not become empirical support. It does not become empirical failure. It demonstrates that the decision procedure can be rerun, checked, and audited under its synthetic assumptions.

The purpose of reproducibility is therefore not confirmation of CBR. It is auditability of the simulation stress-test.

7.8 Reproducibility Principle

Principle 7.2 — Reproducibility Discipline.

A simulation paper must preserve enough information to reproduce every reported verdict, including parameters, seeds or deterministic construction labels, endpoint values, thresholds, certificates, validity-gate outcomes, output records, and version status.

7.9 Transition

The paper next distinguishes deterministic rule-behavior tests from Monte Carlo error-risk estimates.

8. Deterministic and Monte Carlo Simulation Classes

8.1 Purpose

Not every scenario has the same simulation logic.

Some scenarios test whether the verdict engine behaves correctly under controlled construction. These are deterministic rule-behavior simulations.

Other scenarios estimate how often the decision procedure produces a false-support or false-failure result under stochastic nuisance. These are Monte Carlo error-risk simulations.

The paper therefore distinguishes:

deterministic simulations

from

Monte Carlo simulations.

This distinction prevents a common simulation error: treating a single constructed example as if it measured a frequency, or treating a stochastic error-rate estimate as if it defined a formal rule.

8.2 Deterministic Simulations

Deterministic simulations test whether the verdict engine behaves as expected under controlled conditions.

They are appropriate when the purpose is to verify classification logic rather than estimate frequency.

Deterministic simulations are especially appropriate for:

S₁ detectable CBR-positive recognition,

S₂ below-threshold inconclusiveness,

S₃ strong-null logic,

S₄ wide-nuisance illustration,

S₅ baseline degeneracy,

S₆ η-miscalibration,

S₇ sampling degeneracy,

and S₁₀ endpoint-shopping discipline.

A deterministic run must specify:

construction label,

fixed parameter values,

varied parameter values,

realized nuisance convention,

endpoint values,

threshold values,

certificates,

validity-gate outcomes,

expected verdict,

and post-simulation verdict.

Its purpose is to show whether the registered rules classify the constructed case correctly.

8.3 Monte Carlo Simulations

Monte Carlo simulations estimate frequency or risk under repeated stochastic trials.

They are especially important for:

S₀ baseline-only false-support risk,

S₈ false-support stress testing,

S₉ false-failure stress testing,

and sensitivity runs involving stochastic nuisance.

Monte Carlo simulations should be used when the paper asks questions such as:

How often does baseline-plus-nuisance exceed Θ_c?

How often does a detectable residual fail to exceed Θ_c under underpowered conditions?

How often does nuisance create a false-support-like endpoint?

How often do validity gates fail under a given parameter regime?

These questions require repeated trials. They cannot be answered by a single deterministic demonstration.

8.4 Monte Carlo Output

Monte Carlo simulations must report:

number of runs,

seed range or seed list,

nuisance sampling convention,

false-support rate,

false-failure rate,

mean endpoint,

endpoint distribution,

threshold-exceedance frequency,

validity-gate failure frequency,

degeneracy-certificate frequency,

scenario-certificate summary,

and version-status summary.

If the simulation estimates an error-risk rate, it should also report the denominator:

false-support rate = number of false-support runs / total valid baseline-only or ordinary-degenerate runs.

false-failure rate = number of missed-detection or invalid-exposure runs / total runs in the relevant CBR-positive exposure class.

If invalid runs are excluded from a rate calculation, the exclusion rule must be stated before simulation.

8.5 Monte Carlo Uncertainty Intervals

Whenever Monte Carlo rates are reported, the paper should include uncertainty intervals or binomial standard errors for the estimated rates.

For an estimated rate p̂ from N eligible runs, the binomial standard error is:

SE(p̂) = √[p̂(1 − p̂)/N].

Thus, if FSR₀ is the false-support rate in S₀, the paper should report:

FSR₀,

N_MC,

and SE(FSR₀),

or an equivalent pre-registered interval convention.

If the rate is exactly zero or one, the paper should avoid overclaiming certainty. It should report the run count and interval convention rather than saying the risk is impossible.

Principle 8.1 — Monte Carlo Uncertainty Discipline.

A Monte Carlo rate is an estimate under a registered stochastic convention. It must be reported with run count and uncertainty information, not treated as an exact property of nature.

8.6 Deterministic and Monte Carlo Outputs Are Not Interchangeable

A deterministic run may show that the rule behaves correctly in a constructed case. It does not estimate how often that case occurs.

A Monte Carlo run may estimate frequency under a stochastic convention. It does not define the formal verdict rule.

Therefore:

a deterministic S₁ run can show support-like recognition,

but it cannot establish recognition probability by itself;

a Monte Carlo S₀ study can estimate false-support frequency,

but it does not change the baseline-only verdict rule;

a deterministic S₃ run can show strong-null-like logic,

but it does not establish empirical failure;

and a Monte Carlo S₉ study can estimate false-failure risk,

but it does not rescue invalid exposure.

8.7 Recommended Simulation Allocation

The paper should use deterministic simulations for rule clarity and Monte Carlo simulations for risk estimation.

Recommended allocation:

S₀: deterministic baseline-only control plus Monte Carlo false-support estimate.

S₁: deterministic detectable CBR-positive recognition plus optional Monte Carlo robustness.

S₂: deterministic below-threshold boundary cases plus optional stochastic boundary sensitivity.

S₃: deterministic strong-null construction plus optional Monte Carlo robustness.

S₄: deterministic wide-nuisance demonstration plus optional stochastic nuisance trials.

S₅: deterministic baseline-degeneracy construction plus optional parameter scan.

S₆: deterministic η-deformation construction plus optional deformation-size scan.

S₇: deterministic grid-density comparison.

S₈: Monte Carlo false-support stress test.

S₉: Monte Carlo false-failure stress test.

S₁₀: deterministic endpoint-shopping construction.

8.8 Simulation-Class Boundary Principle

Principle 8.2 — Simulation-Class Discipline.

Deterministic simulations test rule behavior. Monte Carlo simulations estimate error-risk behavior. The paper must not treat a single deterministic demonstration as an error-rate estimate, and it must not treat a Monte Carlo frequency as a new verdict rule.

8.9 Transition

With simulation classes defined, the paper runs the authorized scenario register.

9. Scenario S₀ — Baseline-Only Control

9.1 Scenario Definition

Scenario S₀ is the baseline-only control.

Set:

Δ_CBR(η) = 0.

Therefore:

T_CBR = 0.

The simulated visibility is generated from:

V_sim(η) = V_ℬ(η) + δ_𝓝(η).

There is no CBR residual in S₀. Any nonzero simulated residual relative to the baseline arises from ordinary nuisance structure or numerical construction.

The simulated endpoint is:

T_c^sim = 𝒯_sup[V_sim(η) − V_ℬ(η), η ∈ I_c].

Since:

V_sim(η) − V_ℬ(η) = δ_𝓝(η),

the endpoint is controlled by the realized nuisance term.

9.2 Purpose

The purpose of S₀ is to test false-support risk under ordinary baseline-plus-nuisance conditions.

A baseline-only simulation should not generate a support-like verdict. If it produces an endpoint exceeding Θ_c, the correct interpretation is not CBR support. It is a false-support stress event or a false-support risk diagnostic.

This scenario is essential because a decision procedure that frequently produces support-like classifications under baseline-only conditions is not reliable.

9.3 Pre-Simulation Expected Verdict

The expected verdict is:

simulation-only baseline / no-support.

Because Δ_CBR(η) = 0, there is no prediction-side CBR residual and no basis for a support-like CBR classification.

If a stochastic nuisance realization produces:

T_c^sim > Θ_c,

the post-simulation classification is:

false-support risk

rather than:

simulation-only support-like.

This distinction must be maintained even when the endpoint exceeds threshold. Threshold exceedance under a baseline-only scenario is not evidence for CBR.

9.4 Deterministic Baseline-Only Control

The deterministic S₀ control sets:

Δ_CBR(η) = 0

and:

δ_𝓝(η) = 0.

Then:

V_sim(η) = V_ℬ(η),

T_CBR = 0,

and:

T_c^sim = 0.

The expected and post-simulation verdict are both:

simulation-only baseline / no-support.

This deterministic run verifies the most basic null behavior of the verdict engine: when no residual and no nuisance perturbation are present, the endpoint is zero and no support-like verdict is available.

9.5 Monte Carlo Baseline-Only Runs

The Monte Carlo S₀ component samples δ_𝓝(η) under the registered nuisance convention.

The Monte Carlo run count N_MC must be fixed before simulation. If several N_MC values are explored, each value is treated as a separate sensitivity condition.

For each run, the output record must report:

random seed,

nuisance regime,

nuisance realization label,

T_c^sim,

Θ_c,

threshold-exceedance status,

validity-gate status,

and post-simulation verdict.

The central quantity is the false-support frequency:

FSR₀ = #{S₀ runs with T_c^sim > Θ_c and validity gates otherwise pass} / #{valid S₀ runs}.

The paper should report:

FSR₀,

N_MC,

and SE(FSR₀) = √[FSR₀(1 − FSR₀)/N_MC],

or a pre-registered equivalent interval convention.

This rate estimates how often ordinary baseline-plus-nuisance conditions produce a threshold-exceeding endpoint under the registered stochastic convention.

9.6 Degeneracy and Validity Status

Because Δ_CBR = 0 in S₀, Dcert is not a CBR-identifiability certificate. Any threshold exceedance is classified through the false-support-risk rule, not through a CBR support rule.

If a run produces T_c^sim > Θ_c, the correct classification depends on the output structure:

If the exceedance is produced by ordinary nuisance inside the baseline-only scenario, classify it as false-support risk.

If physical admissibility fails, classify the run as invalid.

If endpoint congruence fails, classify the run as invalid or not evaluable.

If the run changes the nuisance convention outside the imported register, classify it as exploratory or successor-version.

9.7 Output Requirements

The S₀ section must report:

deterministic baseline-only output,

Monte Carlo run count N_MC,

seed list or seed range,

nuisance regime,

endpoint distribution,

mean T_c^sim,

maximum observed T_c^sim,

threshold-exceedance frequency,

false-support rate FSR₀,

uncertainty interval or standard error for FSR₀,

validity-gate failure frequency,

and Scert(S₀) summary.

The Scert(S₀) summary should state whether the scenario remained inside C_RAI v0.1, whether any runs were invalid, and whether any runs were exploratory.

9.8 Establishment

S₀ establishes whether the registered decision machinery avoids false positive behavior under ordinary baseline-plus-nuisance conditions.

A well-behaved S₀ result supports the procedural reliability of the simulation rule. It does not support CBR physically. It shows only that the decision procedure does not automatically produce support-like verdicts when the CBR residual is absent.

9.9 Transition

Having established the baseline-only control, the paper next tests whether the decision procedure recognizes a detectable, non-degenerate synthetic CBR residual.

10. Scenario S₁ — Detectable CBR-Positive Case

10.1 Scenario Definition

Scenario S₁ is the detectable CBR-positive case.

Set the prediction-side condition:

T_CBR > Θ_c

and the degeneracy condition:

Δ_CBR ∉ Deg_C.

In the registered residual family, typical detectable amplitudes are:

A_CBR = 1.5Θ_c,

A_CBR = 2Θ_c,

and:

A_CBR = 3Θ_c.

For the normalized central residual, because the peak lies at η_c ∈ I_c:

T_CBR = |A_CBR|.

Therefore, these amplitudes satisfy:

T_CBR > Θ_c.

10.2 Purpose

The purpose of S₁ is to test whether the decision procedure recognizes a synthetic CBR-positive residual when the residual is detectable, non-degenerate, and evaluated under valid simulation conditions.

This scenario tests recognition behavior.

It asks:

When the dossier is given a detectable, non-degenerate synthetic residual, does the verdict engine classify it as simulation-only support-like?

It does not ask whether nature contains such a residual.

10.3 Pre-Simulation Expected Verdict

If validity gates pass and:

T_c^sim > Θ_c,

with:

Dcert(Δ_CBR) = non-degenerate,

the expected verdict is:

simulation-only support-like.

This verdict is synthetic. It is a decision-procedure classification inside the registered simulation environment. It is not empirical support.

10.4 Deterministic Recognition Runs

The deterministic S₁ runs should use controlled constructions such as:

A_CBR = 1.5Θ_c,

A_CBR = 2Θ_c,

A_CBR = 3Θ_c,

w_r = 0.05,

s = +1,

and δ_𝓝(η) = 0.

For each deterministic run:

V_sim(η) = V_ℬ(η) + Δ_CBR(η).

Then:

T_c^sim = T_CBR = |A_CBR|.

If physical admissibility and validity gates pass, the post-simulation verdict is:

simulation-only support-like.

These deterministic runs verify that the verdict engine recognizes the simplest detectable CBR-positive cases.

10.5 Optional Monte Carlo Recognition Robustness

The paper may also run Monte Carlo S₁ trials by sampling δ_𝓝(η) under registered nuisance regimes.

The purpose of these stochastic runs is not to estimate empirical support. It is to test recognition robustness under ordinary nuisance.

For each amplitude and nuisance regime, the paper may report:

recognition rate,

endpoint distribution,

mean T_c^sim,

threshold-exceedance frequency,

validity-gate failure frequency,

degeneracy-certificate stability,

and uncertainty interval or standard error for the recognition rate.

The recognition rate may be defined as:

RR₁ = #{valid S₁ runs classified simulation-only support-like} / #{valid S₁ runs}.

If RR₁ is reported from N_MC stochastic trials, the paper should also report:

SE(RR₁) = √[RR₁(1 − RR₁)/N_MC],

or an equivalent pre-registered interval convention.

The recognition rate is a simulation-diagnostic quantity, not the probability that CBR is true and not the probability of empirical support.

10.6 Degeneracy Certificate

A support-like S₁ verdict requires:

Dcert(Δ_CBR) = non-degenerate.

If a run satisfies T_c^sim > Θ_c but the residual is baseline-degenerate, nuisance-degenerate, η-degenerate, sampling-degenerate, or endpoint-degenerate, the verdict must be downgraded to:

simulation-only non-identifiable

or:

simulation-only inconclusive

according to the registered degeneracy rule.

This prevents threshold exceedance from being mistaken for CBR-identifiability.

10.7 Validity Gates

A support-like S₁ classification requires all relevant validity gates to pass.

In particular:

endpoint units must be congruent,

sampling must resolve the residual,

the baseline must remain physically admissible,

nuisance must not duplicate baseline effects,

Θ_c must be computable,

Dcert must be evaluable,

the statistical rule must be registered,

provenance labels must remain synthetic,

and the run must remain inside C_RAI v0.1.

If any required gate fails, the run is not support-like even if T_c^sim > Θ_c.

10.8 Output Requirements

The S₁ section must report:

deterministic recognition outputs,

amplitude values tested,

width values tested if varied,

sign values tested if varied,

nuisance regime,

T_CBR,

T_c^sim,

Θ_c,

recognition rate if Monte Carlo trials are used,

Monte Carlo run count N_MC if used,

uncertainty interval or standard error for recognition rate if reported,

endpoint distribution under nuisance,

minimum amplitude yielding stable support-like classification,

degeneracy-certificate status,

validity-gate status,

and Scert(S₁) summary.

10.9 Establishment

S₁ establishes support-like recognition behavior of the registered decision procedure.

It shows whether the imported C_RAI v0.1 machinery can identify a synthetic, detectable, non-degenerate residual under valid simulation conditions.

It does not establish empirical support.

10.10 Transition

The next scenario tests the opposite boundary: a CBR-positive residual that exists synthetically but is below or at the registered decision threshold.

11. Scenario S₂ — Undetectable CBR-Positive Case

11.1 Scenario Definition

Scenario S₂ is the undetectable CBR-positive case.

Set:

T_CBR ≤ Θ_c.

Typical amplitudes are:

A_CBR = 0.5Θ_c

and:

A_CBR = Θ_c.

For the normalized central residual:

T_CBR = |A_CBR|.

Thus A_CBR = 0.5Θ_c is below threshold, while A_CBR = Θ_c lies exactly at the threshold boundary.

11.2 Equality Convention

The equality case:

T_CBR = Θ_c

is threshold-borderline under v0.1.

It is not sufficient for strong-null failure.

Likewise, a simulated endpoint satisfying:

T_c^sim = Θ_c

is non-exceedance under the conservative equality rule.

Strict exceedance requires:

T_c^sim > Θ_c.

A detectable prediction requires:

T_CBR > Θ_c.

Therefore, S₂ is designed to test below-threshold and boundary discipline.

11.3 Purpose

The purpose of S₂ is to test whether the decision procedure refuses to support or fail a residual that is below or merely equal to the decision threshold.

A CBR-positive residual may exist in the synthetic construction, but if it does not exceed Θ_c, the registered decision machinery must not treat non-observation as failure.

Likewise, it must not treat a below-threshold endpoint as support.

The correct classification is inconclusive.

11.4 Pre-Simulation Expected Verdict

The expected verdict is:

simulation-only inconclusive.

This is because the predicted residual is not detectably separated from the ordinary-plus-detectability threshold under the registered rule.

If T_CBR ≤ Θ_c, then strong-null-like classification is unavailable.

If T_c^sim ≤ Θ_c, support-like classification is unavailable.

If stochastic nuisance pushes T_c^sim > Θ_c, the result must be evaluated carefully. Depending on scenario structure, it may be boundary sensitivity, false-support risk, or an invalid/exploratory stress event. It is not automatically support-like.

11.5 Deterministic Below-Threshold Runs

The deterministic S₂ runs should include:

A_CBR = 0.5Θ_c

and:

A_CBR = Θ_c.

With:

δ_𝓝(η) = 0,

the simulated endpoint satisfies:

T_c^sim = T_CBR.

Thus, for A_CBR = 0.5Θ_c:

T_c^sim = 0.5Θ_c < Θ_c.

For A_CBR = Θ_c:

T_c^sim = Θ_c.

Under the conservative equality convention, both cases are non-exceedance for support-like classification. The post-simulation verdict is:

simulation-only inconclusive.

11.6 Optional Stochastic Boundary Sensitivity

The paper may run optional stochastic nuisance trials near the threshold boundary.

These runs test how fragile the decision procedure is when A_CBR is close to Θ_c.

If stochastic trials are run, N_MC must be fixed before simulation. If multiple N_MC values are explored, each is treated as a separate sensitivity condition.

The paper should report:

threshold-crossing frequency,

endpoint distribution,

frequency of boundary cases,

false-support risk if nuisance drives exceedance,

validity-gate outcomes,

and uncertainty intervals or standard errors for any reported rates.

These runs do not change the formal rule. They diagnose boundary sensitivity.

11.7 Degeneracy and Validity Status

Because S₂ is below or at threshold, degeneracy may be less central than detectability, but it must still be recorded if evaluated.

If Dcert is non-degenerate, the verdict remains inconclusive because the residual is not detectably separated.

If Dcert is degenerate, the verdict becomes non-identifiable or inconclusive by degeneracy.

If validity gates fail, the run becomes invalid, exploratory, or inconclusive according to the registered rule.

11.8 Output Requirements

The S₂ section must report:

amplitude cases tested,

threshold equality status,

T_CBR,

T_c^sim,

Θ_c,

endpoint distribution if stochastic trials are used,

Monte Carlo run count N_MC if used,

threshold-crossing frequency,

uncertainty interval or standard error for any reported stochastic rate,

boundary sensitivity,

degeneracy-certificate status,

validity-gate status,

and Scert(S₂) summary.

11.9 Establishment

S₂ establishes below-threshold discipline.

It shows that the registered decision procedure does not issue support-like or strong-null-like classifications when the synthetic residual is below or merely equal to the detectability threshold.

11.10 Transition

The next scenario tests synthetic strong-null logic: a detectable, non-degenerate prediction that fails to appear in simulated observation under valid exposure.

12. Scenario S₃ — Strong-Null Scenario

12.1 Scenario Definition

Scenario S₃ is the strong-null scenario.

The prediction side satisfies:

T_CBR > Θ_c

and:

Δ_CBR ∉ Deg_C.

The simulation-side endpoint satisfies:

T_c^sim ≤ Θ_c.

Power or exposure adequacy must pass, and validity gates must pass.

This scenario therefore separates prediction-side detectability from simulation-side non-observation.

The synthetic CBR residual is registered as detectable and non-degenerate, but the simulated endpoint remains inside the ordinary-plus-detectability threshold.

12.2 Purpose

The purpose of S₃ is to test synthetic failure logic.

It asks whether the registered verdict machinery can issue a strong-null-like classification when all failure-side conditions are satisfied:

the prediction is detectable,

the prediction is non-degenerate,

exposure is valid,

power or exposure adequacy is sufficient,

and the simulated endpoint does not exceed threshold.

This scenario tests the failure rule. It does not fail CBR in nature.

12.3 Pre-Simulation Expected Verdict

The expected verdict is:

simulation-only strong-null-like.

This classification is available only when:

T_CBR > Θ_c,

Dcert(Δ_CBR) = non-degenerate,

validity gates pass,

power or exposure adequacy is sufficient,

and T_c^sim ≤ Θ_c.

If any of these conditions fail, the verdict is not strong-null-like. It is inconclusive, invalid, non-identifiable, or false-failure risk depending on the failure mode.

12.4 Constructing the Strong-Null Case

To construct S₃, the paper must distinguish between the registered prediction-side residual and the simulation-side non-observation.

The prediction side may set:

A_CBR = 1.5Θ_c

or another detectable amplitude, so that:

T_CBR > Θ_c.

The simulated observation side is then constructed so that the predicted residual does not appear in V_sim(η).

For example:

prediction-side object:

Δ_CBR^pred(η) = 1.5Θ_c exp[−(η − η_c)²/(2w_r²)],

but simulation-side visibility:

V_sim(η) = V_ℬ(η) + δ_𝓝(η),

with no realized CBR residual in the simulated visibility.

Then:

T_c^sim = 𝒯_sup[δ_𝓝(η), η ∈ I_c].

If:

T_c^sim ≤ Θ_c,

and all validity gates pass, the post-simulation verdict is:

simulation-only strong-null-like.

This construction tests what the decision rule would do if a detectable registered prediction failed to appear.

12.5 Deterministic Strong-Null Run

The simplest deterministic S₃ run sets:

prediction-side:

T_CBR = 1.5Θ_c,

Dcert(Δ_CBR) = non-degenerate,

and simulation-side:

δ_𝓝(η) = 0,

V_sim(η) = V_ℬ(η).

Then:

T_c^sim = 0.

Since:

0 ≤ Θ_c,

the observation side satisfies:

T_c^sim ≤ Θ_c.

If validity gates and power or exposure-adequacy conditions pass, the post-simulation verdict is:

simulation-only strong-null-like.

This is a deterministic test of the failure logic.

12.6 Optional Monte Carlo Strong-Null Robustness

The paper may also run Monte Carlo S₃ trials by sampling ordinary nuisance while omitting the predicted residual from the simulation-side visibility.

These trials test whether ordinary nuisance can produce threshold exceedances that prevent strong-null-like classification.

For each run:

V_sim(η) = V_ℬ(η) + δ_𝓝(η).

The paper reports how often:

T_c^sim ≤ Θ_c

versus:

T_c^sim > Θ_c.

If nuisance produces threshold exceedance, the run does not yield strong-null-like classification. It may become inconclusive, false-support risk, or validity-sensitive depending on scenario structure.

If stochastic trials are used, N_MC must be registered before simulation, and the paper must report uncertainty intervals or standard errors for any reported frequency.

12.7 Boundary of Strong-Null Simulation

A simulation-only strong-null-like result does not fail:

CBR as a general program,

the C_RAI platform class,

the realization-law thesis,

or standard quantum theory.

It tests only the failure logic of the registered C_RAI v0.1 decision procedure.

The correct interpretation is:

If an analogous empirical dossier had a pre-registered detectable, non-degenerate prediction and a valid observation satisfying T_c ≤ Θ_c, the verdict machinery would classify that instantiation as failed.

The simulation itself is not that empirical dossier.

12.8 False-Failure Boundary

A strong-null-like classification is unavailable if exposure is invalid.

If the simulated visibility fails to show the residual because sampling is inadequate, nuisance is too wide, power is insufficient, η is miscalibrated, or validity gates fail, the correct verdict is not strong-null-like. It is:

simulation-only inconclusive

or:

false-failure risk.

This distinction prevents the paper from treating failed detection under invalid conditions as meaningful failure.

12.9 Proposition — Synthetic Strong-Null Non-Adjudication

Proposition 12.1 — Synthetic Strong-Null Non-Adjudication.

A simulation-only strong-null-like verdict tests the failure logic of the registered C_RAI v0.1 decision procedure, but it does not constitute empirical failure of CBR, the C_RAI platform class, or the realization-law thesis.

Proof Sketch

A strong-null-like simulation uses synthetic registered objects rather than calibrated empirical data. It can show that the verdict engine would classify a valid non-observation of a detectable, non-degenerate prediction as failure-like under the registered rule. However, because no empirical apparatus, calibrated η, validated nuisance model, or real observed endpoint is being adjudicated, the simulation does not fail CBR in nature. It tests decision behavior, not physical truth.

12.10 Output Requirements

The S₃ section must report:

prediction-side amplitude,

prediction-side T_CBR,

simulation-side construction,

whether the predicted residual is omitted from V_sim,

T_c^sim,

Θ_c,

power or exposure-adequacy status,

degeneracy-certificate status,

validity-gate status,

post-simulation verdict,

and Scert(S₃) summary.

If Monte Carlo trials are used, report:

number of runs N_MC,

seed list or seed range,

endpoint distribution,

threshold-exceedance frequency,

strong-null-like classification frequency,

inconclusive frequency,

false-failure-risk frequency,

validity-gate failure frequency,

and uncertainty interval or standard error for each reported rate.

12.11 Establishment

S₃ establishes the synthetic behavior of the failure rule.

It shows whether the registered C_RAI v0.1 verdict machinery can classify a valid non-observation of a detectable, non-degenerate prediction as simulation-only strong-null-like.

It does not establish empirical failure.

12.12 Transition

Having tested baseline-only behavior, detectable recognition, below-threshold discipline, and strong-null logic, the paper next turns to nuisance, degeneracy, sampling, false-support, false-failure, and endpoint-shopping scenarios.

13. Scenario S₄ — Wide-Nuisance Inconclusiveness

13.1 Scenario Definition

Scenario S₄ is the wide-nuisance inconclusiveness scenario.

Use the registered wide-nuisance regime:

B_𝓝^wide = 0.0375

or otherwise instantiate a registered nuisance condition such that the predicted residual cannot be separated from ordinary allowance.

The scenario is defined by the condition that the residual is absorbed, obscured, or rendered non-decisive by the nuisance envelope or decision threshold. In endpoint terms, this occurs when:

T_CBR ≤ Θ_c

or when the residual morphology falls inside the ordinary nuisance allowance:

|Δ_CBR(η)| ≤ B_𝓝(η) + ε_detect

through the relevant critical regime.

The key point is that S₄ is not a denial that a residual may be present synthetically. It is a test of whether the registered decision procedure refuses to overclaim when the residual is not distinguishable from ordinary nuisance.

13.2 Purpose

The purpose of S₄ is to test nuisance discipline.

A scientifically usable decision procedure must not classify a residual as support-like merely because a residual appears in the synthetic construction. The residual must exceed the registered ordinary allowance and survive degeneracy and validity checks.

If ordinary nuisance is wide enough to swallow the residual, then the correct verdict is not support. It is inconclusive or non-identifiable, depending on whether the nuisance merely obscures the residual or actively reproduces its morphology.

This scenario therefore asks:

When ordinary nuisance is broad enough to absorb or obscure the residual, does the C_RAI v0.1 verdict engine refuse support-like classification?

13.3 Wide Nuisance Versus Nuisance Degeneracy

This scenario distinguishes two related but different cases.